Google rolled out a redesigned Stitch workspace that accepts text, code, PRDs, and images on a spatial canvas, then generates prototypes and portable DESIGN.md files. Teams testing AI-native UI workflows can use it to try a tighter design-to-code loop in the live product.

The update turns Stitch from a prompt-to-UI generator into a canvas-based design environment. TestingCatalog's announcement clip lists five new features: "AI-Native Canvas," "Smarter Design Agent," "Instant Prototypes," "Design Systems and DESIGN.md," and "Voice mode."

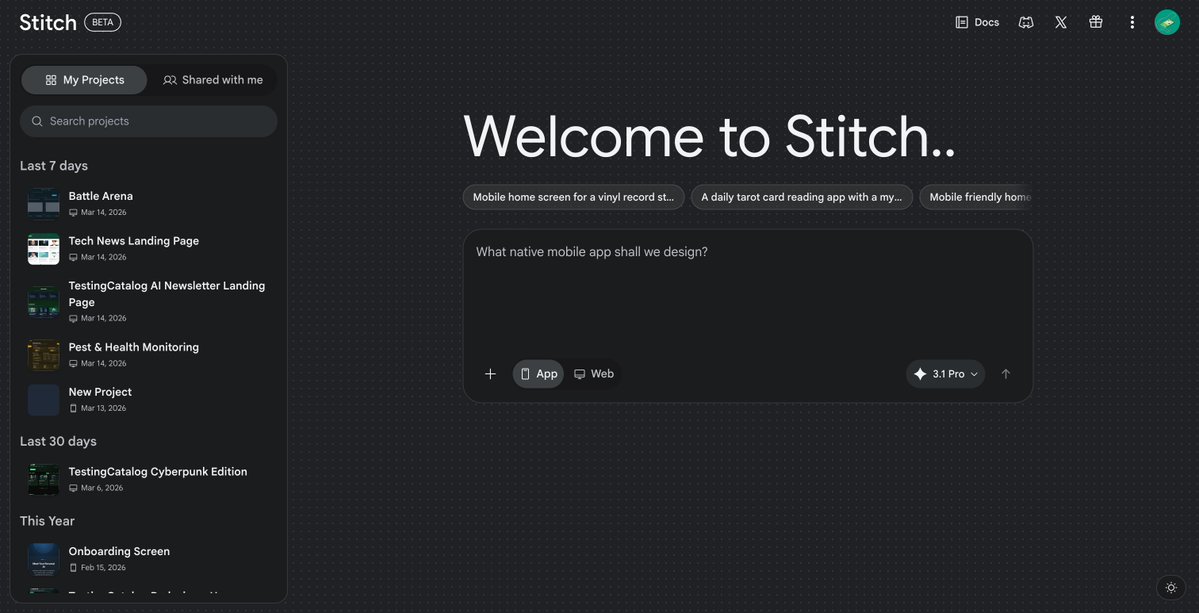

The live product already reflects that shift. TestingCatalog's beta screenshot shows a new "Stitch BETA" home screen with app and web project starters, project history, and a "3.1 Pro" indicator, while its earlier rollout post says the new experience is "already rolling out to users" and available for testing.

Voice mode is more than dictation. In the demo post, the flow is to "start with a single prompt," "enable the voice mode," explain what you want, and let the agent "take care of it" while it updates the canvas. That suggests Stitch now behaves more like an interactive design agent than a one-shot generator.

The deeper technical claim is that the agent has full project context. According to the thread breakdown, Stitch can mix mobile and desktop screens in one workspace, swap assets across multiple screens, infer a brief from the UI under construction, and create interactive prototypes with a Play action. The same post says DESIGN.md is intended to anchor a unified design system and help teams export tokens or import existing brand guidance, including from a live URL.

For engineering teams, the practical value is the tighter handoff between requirements, interface generation, and prototype output. The Rundown AI's recap says Stitch can turn static screens into interactive prototypes in seconds and auto-generate a "logical next screen," which matters for quickly validating flows before code is written.

The rollout still looks early. TestingCatalog's initial post said voice mode was "not available yet" in the first wave, but the later full announcement and hands-on demo show voice as part of the updated product. That points to a staged release rather than a single global flip, with the public entry point available through the Stitch site.

Full Stitch announcement 👀

- AI-Native Canvas
- Smarter Design Agent
- Instant Prototypes
- Design Systems and DESIGN.md
- Voice mode! 🔥

Stitching...

BREAKING 🚨: GOOGLE RELEASED A NEW VIBE DESIGN EXPERIENCE FOR STITCH WITH A NEW CANVAS EXPERIENCE! This new experience is already rolling out to users and is available for testing. A voice mode is not available yet, but it will likely arrive soon. Everyone is a designer now 👀

Google has just updated Stitch. You can now vibe design full web and mobile apps just with your voice 🔥

1. Start with a single prompt
2. Enable the voice mode
3. Explain what you want
4. The agent takes care of it

And the whole canvas is AI native!

Google plans to roll out a new "Vibe Design" experience on Stitch this Wednesday. Voice mode with selectable voices, a new canvas layout, a new "Hatter" agent, and a lot more are coming. Stitching... 👀