Miasma is a Rust web server that serves toxic content and recursive links to malicious scrapers instead of normal pages. The discussion quickly turned to whether hidden-link traps work against browser-based crawlers at all, or mainly fuel another round of blocklists and anti-bot countermeasures. Operators should test how crawlers actually behave on their site before adopting it.

Posted by LucidLynx

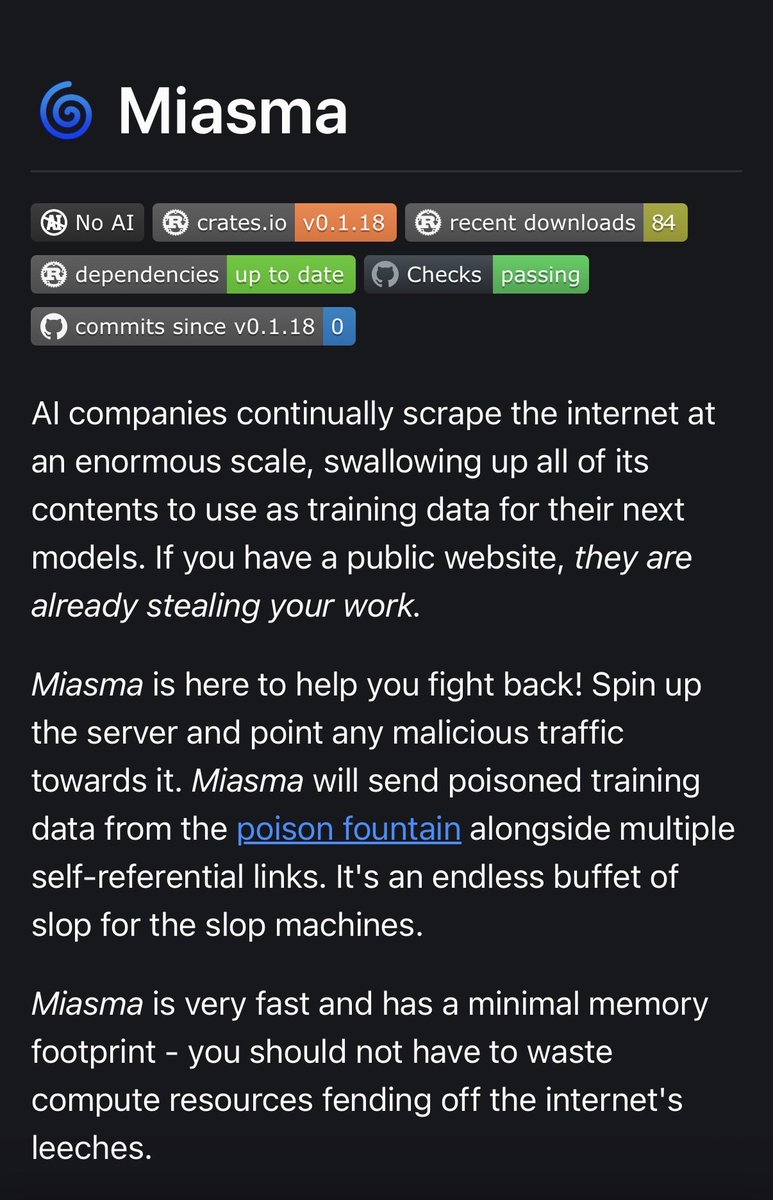

Miasma is a Rust-based web server designed to poison AI training data by serving endless toxic content and self-referential links to malicious scrapers. It has low resource usage, installs via Cargo, and is deployed behind a reverse proxy such as Nginx, with hidden links on the protected site steering scrapers toward it. Repo stats: 486 stars, created March 2026, latest release v0.1.18.
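To make the "self-referential links" idea concrete, here is a minimal sketch of a page generator in which every link points to a fresh path under the same trap prefix, so a crawler that follows links indiscriminately never runs out of URLs. It is not Miasma's code: the `/bots/` prefix, the `trap_page` function, and the xorshift generator are hypothetical choices made to keep the example dependency-free, and it emits bare links where the real server also serves poisoned text.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

// Hypothetical trap prefix; a real deployment would pick its own.
const TRAP_PREFIX: &str = "/bots/";

/// Tiny xorshift64 PRNG so the sketch needs no external crates (not cryptographic).
struct XorShift64(u64);

impl XorShift64 {
    fn next_u64(&mut self) -> u64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x
    }
}

/// Build a page with `n` links to fresh paths under TRAP_PREFIX.
/// Seeding from the request path makes the same URL return the same page (within one build).
fn trap_page(path: &str, n: usize) -> String {
    let mut hasher = DefaultHasher::new();
    path.hash(&mut hasher);
    // `| 1` keeps the xorshift state non-zero (a zero state would stay zero forever).
    let mut rng = XorShift64(hasher.finish() | 1);

    let mut html = String::from("<html><body>\n");
    for _ in 0..n {
        html.push_str(&format!("<a href=\"{}{:016x}\">more</a>\n", TRAP_PREFIX, rng.next_u64()));
    }
    html.push_str("</body></html>\n");
    html
}

fn main() {
    print!("{}", trap_page("/bots/start", 3));
}
```

Every generated link lands back under `/bots/`, so each page a crawler fetches yields more trap URLs, while a stable seed per path means repeat visits see the same page.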

Miasma’s launch is straightforward: it is a Rust server built to return poisoned training data and recursive links to suspected scrapers, while keeping a "minimal memory footprint," according to the project page. The setup described in the same source is operationally familiar rather than model-specific: install with Cargo, put it behind a reverse proxy, and divert traffic you judge to be malicious.
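The source does not say how traffic gets "judged" malicious. One possible pattern, assumed here, is to let the hidden link do the judging: any client that requests a trap path is flagged, and its later requests are diverted to the poison server. This sketch models only that routing decision; `Router`, `Upstream`, and the `/bots/` prefix are hypothetical, and a real deployment would express the same logic in the reverse proxy's own configuration.

```rust
use std::collections::HashSet;
use std::net::IpAddr;

/// Where the (hypothetical) proxy forwards a request.
#[derive(Debug, PartialEq)]
enum Upstream {
    Origin, // the real site
    Trap,   // the poison server, e.g. a Miasma instance
}

struct Router {
    trap_prefix: String,
    flagged: HashSet<IpAddr>, // clients that have requested a trap path
}

impl Router {
    fn new(trap_prefix: &str) -> Self {
        Router { trap_prefix: trap_prefix.to_string(), flagged: HashSet::new() }
    }

    fn route(&mut self, client: IpAddr, path: &str) -> Upstream {
        // Only something that followed a hidden link should ever request a trap path.
        if path.starts_with(self.trap_prefix.as_str()) {
            self.flagged.insert(client);
            return Upstream::Trap;
        }
        // Once flagged, a client keeps getting poison even on normal URLs.
        if self.flagged.contains(&client) {
            Upstream::Trap
        } else {
            Upstream::Origin
        }
    }
}

fn main() {
    let mut router = Router::new("/bots/");
    let scraper: IpAddr = "203.0.113.7".parse().unwrap();
    let reader: IpAddr = "198.51.100.2".parse().unwrap();

    println!("{:?}", router.route(scraper, "/blog/post")); // Origin: not yet flagged
    println!("{:?}", router.route(scraper, "/bots/a1b2")); // Trap: followed a hidden link
    println!("{:?}", router.route(scraper, "/blog/post")); // Trap: now flagged
    println!("{:?}", router.route(reader, "/blog/post")); // Origin
}
```

Flagging by IP address is coarse: clients behind shared NAT get flagged together, and scrapers that rotate addresses slip past it.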


Thread discussion highlights:

- Lockal on the hidden-link trap's weakness: "Dumb curl-based LLM won't visit display:none links. Smarter browser-based navigators won't even render this link."
- bobosola on the effectiveness debate: "If you are automating it, I don't see why not... that's a guerilla tactic... it's pretty effective."
- ninjagoo on whether this solves the right problem: "Is this a solution in search of a problem?"

The uncertainty is in detection and crawler behavior, not in the server itself. In the Hacker News discussion, Lockal argued that "dumb curl-based LLM" scrapers will not follow display:none links, while "smarter browser-based navigators" may not render them at all, which cuts directly against the hidden-link trap design. Other commenters pulled the debate in opposite directions: one called it a "guerilla tactic" that could still be "pretty effective," while another asked whether it is "a solution in search of a problem" and warned that poisoned pages could look like "machine-generated spam" and feed an arms race over blocklists and blocking rules.
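Lockal's objection is easy to see in miniature. This sketch scans anchors from raw HTML and compares two hypothetical crawler policies: one that collects every `href`, and one that drops anchors whose inline style contains `display:none`, a crude stand-in for a browser-based navigator that only acts on rendered elements. The string-based `scan_links` parser is an illustrative assumption that handles only double-quoted attributes; it does not describe how any particular crawler works.

```rust
/// One anchor found in the markup.
#[derive(Debug)]
struct Link {
    href: String,
    hidden: bool, // true if the inline style contains display:none
}

/// Return the value of a double-quoted attribute inside a single tag, if present.
fn attr(tag: &str, name: &str) -> Option<String> {
    let needle = format!("{}=\"", name);
    let start = tag.find(&needle)? + needle.len();
    let len = tag[start..].find('"')?;
    Some(tag[start..start + len].to_string())
}

/// Very rough scan of `<a ...>` tags; not a real HTML parser.
fn scan_links(html: &str) -> Vec<Link> {
    let mut links = Vec::new();
    let mut rest = html;
    while let Some(start) = rest.find("<a ") {
        let after = &rest[start..];
        let end = match after.find('>') {
            Some(e) => e,
            None => break,
        };
        let tag = &after[..end];
        if let Some(href) = attr(tag, "href") {
            let hidden = attr(tag, "style")
                .map(|s| s.replace(' ', "").contains("display:none"))
                .unwrap_or(false);
            links.push(Link { href, hidden });
        }
        rest = &after[end..]; // continue after this tag
    }
    links
}

fn main() {
    let page = r#"<p>Welcome</p>
<a href="/about">About</a>
<a href="/bots/start" style="display: none">.</a>"#;

    let links = scan_links(page);
    let collect_all: Vec<&str> = links.iter().map(|l| l.href.as_str()).collect();
    let visible_only: Vec<&str> = links
        .iter()
        .filter(|l| !l.hidden)
        .map(|l| l.href.as_str())
        .collect();

    println!("collect-all crawler: {:?}", collect_all);
    println!("visibility-aware crawler: {:?}", visible_only);
}
```

Only the collect-all policy ever requests `/bots/start`; any crawler that filters on visibility, whether by rendering or by a heuristic like this one, walks straight past the trap.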


Relevant as an adversarial web-infra pattern: the thread is about whether trap pages, hidden links, and poison content can meaningfully deter or mislead AI scrapers, and what more robust alternatives exist (header-based bot blocking, proxy plugins, provenance, licensed datasets).
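Header-based blocking, one of the alternatives raised in the thread, can be as simple as matching the `User-Agent` header against known crawler tokens and returning 403. In this minimal sketch the token list is illustrative and not exhaustive (check each vendor's published crawler documentation), the sample header strings are made up, and allowing requests with no `User-Agent` is an assumed policy choice.

```rust
/// Illustrative, non-exhaustive list of AI crawler tokens.
const BLOCKED_AGENTS: &[&str] = &["GPTBot", "CCBot", "ClaudeBot", "Bytespider"];

/// Return the HTTP status to send: 403 for a listed crawler, 200 otherwise.
fn status_for(user_agent: Option<&str>) -> u16 {
    match user_agent {
        Some(ua) => {
            // Case-insensitive substring match against each token.
            let ua = ua.to_ascii_lowercase();
            if BLOCKED_AGENTS
                .iter()
                .any(|t| ua.contains(&t.to_ascii_lowercase()))
            {
                403
            } else {
                200
            }
        }
        None => 200, // assumed policy: don't block requests without a User-Agent
    }
}

fn main() {
    let samples = [
        Some("Mozilla/5.0 (compatible; GPTBot/1.2)"),
        Some("Mozilla/5.0 (X11; Linux x86_64)"),
        None,
    ];
    for ua in samples {
        println!("{:?} -> {}", ua, status_for(ua));
    }
}
```

Because the header is self-reported, this only stops crawlers that identify themselves honestly; it complements rather than replaces behavioral signals such as Miasma's hidden-link trap.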