Ollama 0.18.1 added OpenClaw web search and fetch plugins plus non-interactive launch flows for CI, scripts, and container jobs. Pair it with Pi and Nemotron 3 Nano 4B if you want unattended agent jobs on constrained hardware.

Ollama 0.18.1's main engineering change is first-party web search and web fetch plugins for OpenClaw. In Ollama's release post, the company says models running through Ollama, whether local or cloud, can "search the web for the latest content and news," and that OpenClaw can fetch a page and "extract readable content for processing." The same post sets an important implementation boundary: the fetch path does not run JavaScript. It retrieves static or server-rendered content and does not perform full browser automation.
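A toy extractor shows the no-JavaScript boundary clearly. The sketch below is not OpenClaw's fetch plugin. `extract_readable_text` is a hypothetical stdlib-only helper that reads raw HTML the way any non-rendering fetcher would. Text present in the server response survives. Text that a script would inject in the browser never appears.

```python
from html.parser import HTMLParser


class ReadableTextExtractor(HTMLParser):
    """Collect text from raw HTML exactly as served; no JavaScript is executed."""

    # Tags whose contents are code or styling, not readable text.
    SKIP_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def extract_readable_text(html: str) -> str:
    """Hypothetical helper: return the page's visible text joined by single spaces."""
    parser = ReadableTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# A page whose #app div is only filled in by client-side JavaScript.
page = """
<html><body>
  <h1>Release notes</h1>
  <p>Server-rendered paragraph.</p>
  <div id="app"></div>
  <script>document.getElementById("app").textContent = "Client-rendered text";</script>
</body></html>
"""

print(extract_readable_text(page))  # Release notes Server-rendered paragraph.
```

A real fetcher also has to download the page and handle encodings. The point here is narrower: "Client-rendered text" never reaches the model, so JavaScript-heavy pages still need a browser-based tool.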

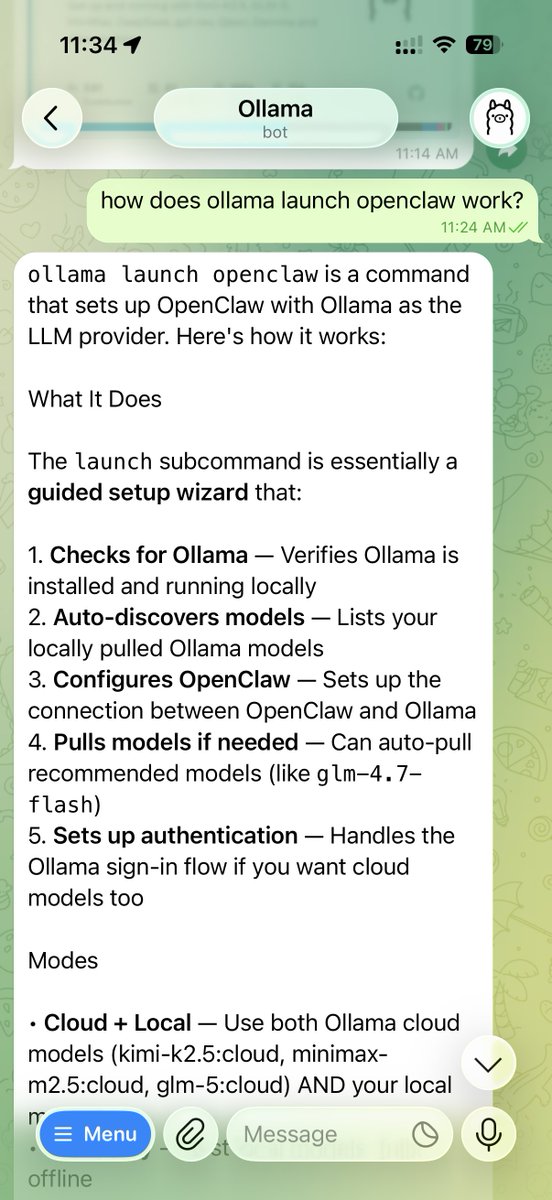

The release also adds a headless path for `ollama launch`. Ollama's description of headless mode frames it around unattended runs in Docker, CI/CD, and scripts, including evals, prompt validation, code review, and security-check jobs, as an alternative to an interactive setup wizard. The example command passes `--yes` to skip interactive confirmation and then forwards a prompt directly into the launched integration. That combination is the practical change for pipeline use.
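For pipeline use, the practical requirement is that nothing waits on a keyboard. The following is a minimal Python sketch of a CI wrapper built around a hypothetical `run_unattended` helper. It closes stdin, enforces a timeout, and returns the exit code so the job fails loudly. The `LAUNCH_ARGV` list is an assumption assembled from the flags named in this article. Check Ollama's headless-mode documentation for the exact syntax, including how the prompt is forwarded.

```python
import subprocess
import sys


def run_unattended(argv: list[str], timeout_s: float = 600.0) -> tuple[int, str]:
    """Run a command with no interactive input; return (exit_code, stdout).

    stdin is DEVNULL, so a tool that unexpectedly prompts for confirmation
    reads EOF instead of blocking the pipeline indefinitely.
    """
    try:
        result = subprocess.run(
            argv,
            stdin=subprocess.DEVNULL,
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except FileNotFoundError:
        # Binary not installed on the runner; 127 mirrors the shell's "command not found".
        return 127, ""
    except subprocess.TimeoutExpired:
        # Treat a hung job as a failure; 124 mirrors the coreutils `timeout` convention.
        return 124, ""
    if result.returncode != 0:
        # Surface the tool's own error output in the CI log.
        sys.stderr.write(result.stderr)
    return result.returncode, result.stdout


# ASSUMPTION: flags taken from this article; confirm the exact form (and how the
# prompt is forwarded) against Ollama's headless-mode documentation before use.
LAUNCH_ARGV = ["ollama", "launch", "pi", "--model", "nemotron-3-nano:4b", "--yes"]

# In a CI step, e.g.:
#   code, out = run_unattended(LAUNCH_ARGV)
#   sys.exit(code)
```

Closing stdin is a belt-and-braces choice. `--yes` should already suppress confirmation, but if some other prompt slips through, the wrapper turns a silent hang into a visible failure.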

Alongside the release, Ollama added `nemotron-3-nano:4b` as a runnable model and explicitly paired it with Pi via `ollama launch pi --model nemotron-3-nano:4b`. In Ollama's launch example, Pi is described as "the minimal agent runtime that powers OpenClaw," which ties the new headless launch flow to a smaller-footprint agent stack.

The Pi documentation covers manual and quick-start configuration for providers, models, and plugin support, which makes Pi the lightweight orchestration layer here. The linked model page explains why Ollama is emphasizing this pairing: Nemotron 3 Nano 4B is positioned as an efficient, agent-focused model variant, and the release post calls the pairing with Pi "a great fit" for agents on "constrained hardware." Together, these pieces give Ollama a clearer unattended path: a lightweight runtime, a small model, and a launch command that can now run without a human in the loop.

Ollama 0.18.1 is here! 🌐 Web search and fetch in OpenClaw Ollama now ships with web search and web fetch plugin for OpenClaw. This allows Ollama's models (local or cloud) to search the web for the latest content and news. This also allows OpenClaw with Ollama to be able to …

Model: ollama.com/library/nemotr… Pi documentation: docs.ollama.com/integrations/pi