Z.ai launched GLM-5V-Turbo, a multimodal coding model for images, video, design drafts, and document layouts that is now available on Z.ai, OpenRouter, Vercel AI Gateway, and Vision Arena. Teams building design-to-code or GUI flows can use one model instead of splitting vision and coding tasks.

The useful details are concrete. The official docs say GLM-5V-Turbo supports 200K context, 128K max output, function calling, cache support, and optional deep thinking. Vercel's changelog shows the exact zai/glm-5v-turbo model ID for screenshot-to-code flows. The benchmark screenshots show a more mixed picture than the launch copy: standout wins in AndroidWorld and multimodal search, but not a clean sweep over Claude on every coding or GUI task.

The real story here is product shape, not just another vision model. Z.ai is packaging GLM-5V-Turbo as a coding base model that can see the environment, reason over it, and act through tools. The official docs describe the target loop clearly: image, video, text, and file inputs, then planning, coding, and action execution inside agent workflows.

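Z.ai says systematic upgrades across four levels power that loop, beginning with native multimodal fusion: deep fusion of text and vision that starts at pre-training, with multimodal collaborative optimization during post-training.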

That stack matters because many current coding agents still bolt vision on as a helper model. GLM-5V-Turbo is trying to collapse perception and code generation into one model boundary.

The benchmark slate points to a model that is strongest when a task mixes pixels, search, and action. In the launch screenshots, GLM-5V-Turbo leads Design2Code at 94.8, BrowseComp-VL at 51.9, MMSearch at 72.9, MMSearch-Plus at 30.0, AndroidWorld at 75.7, and WebVoyager at 88.5.

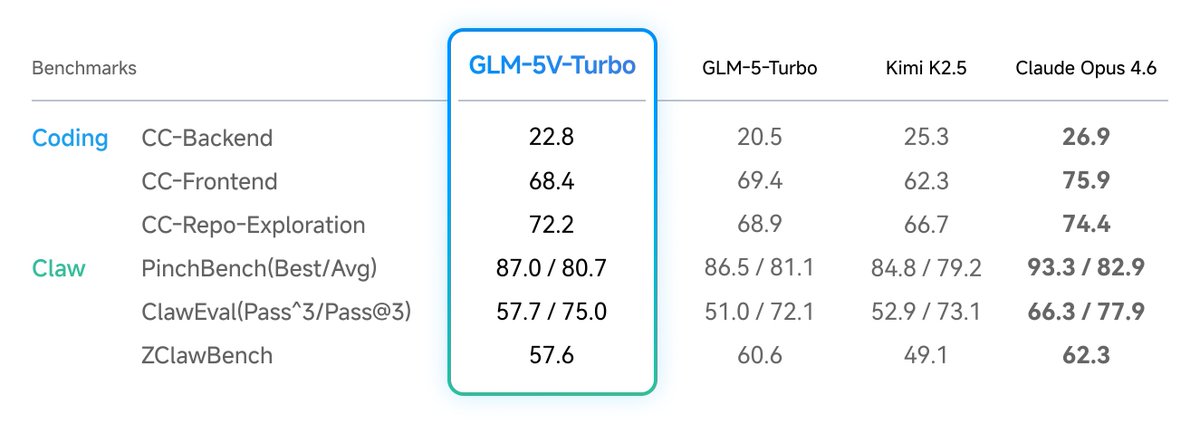

It is less dominant on tasks where Claude has already built a reputation. Claude Opus 4.6 still leads Flame-VLM-Code, Vision2Web, OSWorld, several text coding rows, and most of the Claw-style benchmarks shown in the text and Claw table. So the launch claim is directionally strong, but engineers should read it as "good enough to unify more workflows," not "best model on every row."

One of the more important launch details is what did not break. Z.ai explicitly argues that adding visual capabilities did not drag down pure text coding, and the table mostly supports that argument.

GLM-5V-Turbo scores 22.8 on CC-Backend, 68.4 on CC-Frontend, and 72.2 on CC-Repo-Exploration. That is slightly better than GLM-5-Turbo on backend and repo exploration, slightly worse on frontend, and broadly in the same band, with no visible multimodal tax. Claude still leads those rows, but that is not the key point. The key point is that a vision-first coding model stayed credible enough on plain coding work to be a default model for teams that move between screenshots, repos, and GUIs all day.

Day-one availability is unusually broad for a specialist coding model. Z.ai shipped GLM-5V-Turbo in its own chat product, OpenRouter listed it with a 202,752-token context window and pricing of $1.20 per million input tokens and $4 per million output tokens, and Vercel added it to AI Gateway with the model string zai/glm-5v-turbo.
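To put the OpenRouter pricing in concrete terms, here is a minimal Python sketch of the per-request arithmetic. It uses only the two listed rates ($1.20 and $4 per million tokens). The function name and the example token counts are hypothetical, and it assumes flat pricing with no cache discount or separate image charge, which the listing as quoted here does not address.

```python
from decimal import Decimal

# Rates as quoted from the OpenRouter listing (USD per million tokens).
INPUT_USD_PER_MILLION = Decimal("1.20")
OUTPUT_USD_PER_MILLION = Decimal("4")


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of one request at the listed per-token rates.

    Hypothetical helper: assumes flat pricing, with no cache discounts
    and no separate pricing for image inputs.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    million = Decimal(1_000_000)
    return (
        Decimal(input_tokens) * INPUT_USD_PER_MILLION / million
        + Decimal(output_tokens) * OUTPUT_USD_PER_MILLION / million
    )


# Made-up token counts: a 50,000-token prompt and an 8,000-token reply.
cost = estimate_cost_usd(50_000, 8_000)
print(f"${cost:.4f}")  # prints "$0.0920"
```

At these rates output tokens cost a little over three times as much as input tokens, so long generated files are likely to dominate the bill more than large screenshots or repo context.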

A few practical details from the source pages:

That breadth lowers the integration barrier. Teams can test the same model in a consumer chat UI, a benchmarking arena, a routing layer, or a production SDK without waiting for a second rollout.

The cleanest use case is replacing a two-model pipeline. If your current stack uses one model to parse screenshots and another to write code or drive a GUI agent, GLM-5V-Turbo is trying to absorb both jobs.
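As a rough illustration of what collapsing the two stages looks like at the request level, the Python sketch below builds a single chat payload that carries both a screenshot and a coding instruction. It assumes the provider accepts an OpenAI-style chat schema with `image_url` content parts, which the sources quoted here do not confirm for every listed platform. The function name, prompt, and placeholder bytes are hypothetical; only the model ID `zai/glm-5v-turbo` comes from Vercel's changelog. No request is sent.

```python
import base64
import json


def build_screenshot_to_code_request(
    image_bytes: bytes,
    instruction: str,
    model: str = "zai/glm-5v-turbo",  # AI Gateway ID from Vercel's changelog
    mime_type: str = "image/png",
) -> dict:
    """Build one multimodal request instead of a vision call plus a coding call.

    Assumption: the endpoint accepts OpenAI-style chat messages whose
    content is a list of text and image_url parts.
    """
    # Inline the image as a base64 data URL so the payload is self-contained.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime_type};base64,{encoded}"
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": instruction},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


# Placeholder bytes stand in for a real screenshot file.
payload = build_screenshot_to_code_request(
    b"\x89PNG placeholder", "Recreate this layout as HTML and CSS."
)
print(json.dumps(payload, indent=2)[:200])
```

In a two-model setup the screenshot would first go to a vision model for a text description, and that description would then be passed to a coding model. Here the image and the instruction travel in one message, so there is no intermediate description to lose detail.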

The evidence supports three concrete workflows:

That does not mean every team should switch immediately. If your workload is mostly backend coding with no GUI or screenshot context, the benchmark tables still suggest Claude-class coding models remain stronger in several pure coding rows. But if your engineering process already crosses Figma, browser screenshots, mobile UI states, and codebases, GLM-5V-Turbo looks like one of the clearest attempts yet to make that whole loop live in a single model.

Introducing GLM-5V-Turbo: Vision Coding Model - Native Multimodal Coding: Natively understands multimodal inputs including images, videos, design drafts, and document layouts. - Balanced Visual and Programming Capabilities: Achieves leading performance across core benchmarks for

The leading performance of GLM-5V-Turbo stems from systematic upgrades across four levels: Native Multimodal Fusion: Deep fusion of text and vision begins at pre-training, with multimodal collaborative optimization during post-training. We developed the next-generation CogViT

Zai has released GLM-5V-Turbo, a model designed to combine visual understanding with code generation. It natively processes images, videos, design drafts, and document layouts, and can generate runnable code from screenshots and web interfaces. According to Zai, the model leads

Regarding pure-text coding, GLM-5V-Turbo maintains stable performance across three core benchmarks of CC-Bench-V2 (Backend, Frontend, and Repo Exploration), proving that the introduction of visual capabilities does not degrade text-based reasoning.

GLM 5V Turbo is now on AI Gateway. A vision-first coding model that sees your designs, screenshots, and GUIs to write and debug code. Use model: 'zai/glm-5v-turbo' to get started. vercel.com/changelog/glm-…

GLM-5V-Turbo is now live in Vision Arena. Test its ability to reason over visual inputs using your real-world prompts. Don't forget to vote so we can see how it stacks up.
