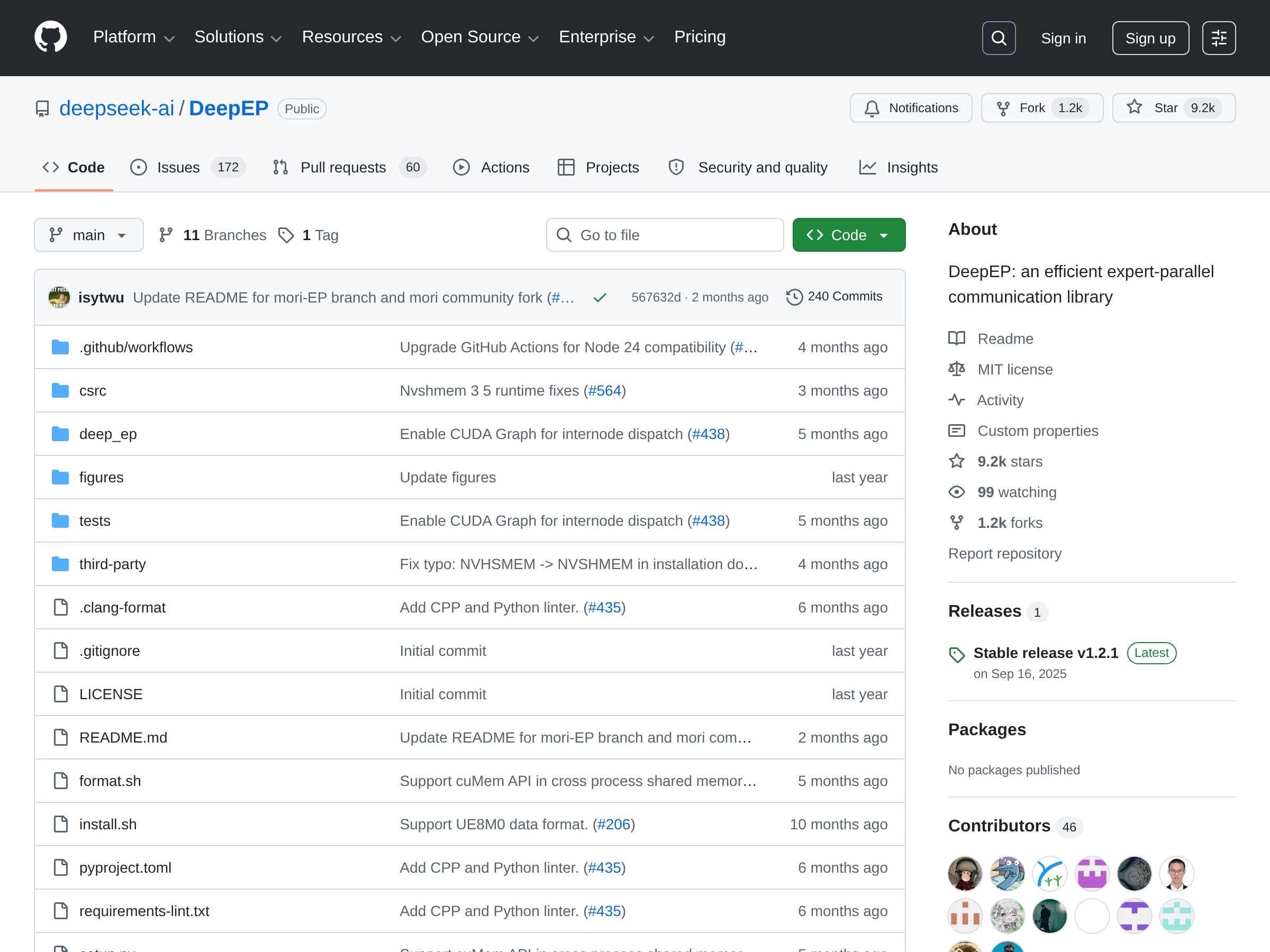

DeepEP

An efficient expert-parallel communication library

Open-source communication library for Mixture-of-Experts (MoE) and expert parallelism (EP), providing high-throughput, low-latency all-to-all GPU kernels for dispatch and combine operations.
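To make "dispatch" and "combine" concrete, here is a minimal pure-Python sketch of the two steps in top-k MoE routing. It simulates the expert-parallel ranks inside a single process. It is not DeepEP's API, and it does not reflect how the library's GPU kernels are implemented. The names `dispatch`, `combine`, `run_moe`, `experts_per_rank`, and the toy expert function are hypothetical. The sketch assumes that experts are split into contiguous blocks across ranks. Dispatch sends each token to the ranks that own its selected experts. Combine brings the expert outputs back and sums them, weighted by the gate weights.

```python
from collections import defaultdict

def dispatch(topk_experts, experts_per_rank):
    """Group (token, slot, expert) triples by the rank owning each expert.

    Assumption: expert e lives on rank e // experts_per_rank.
    Each per-rank list plays the role of one all-to-all send buffer.
    """
    send = defaultdict(list)
    for t, experts in enumerate(topk_experts):
        for slot, e in enumerate(experts):
            send[e // experts_per_rank].append((t, slot, e))
    return send

def combine(results, topk_weights, num_tokens, dim):
    """Scatter expert outputs back to their tokens as a gate-weighted sum."""
    out = [[0.0] * dim for _ in range(num_tokens)]
    for t, slot, y in results:
        w = topk_weights[t][slot]
        for d in range(dim):
            out[t][d] += w * y[d]
    return out

def run_moe(tokens, topk_experts, topk_weights, experts_per_rank, expert_fn):
    send = dispatch(topk_experts, experts_per_rank)
    results = []
    # Each simulated rank processes only the tokens it received.
    for rank in sorted(send):
        for t, slot, e in send[rank]:
            results.append((t, slot, expert_fn(e, tokens[t])))
    return combine(results, topk_weights, len(tokens), len(tokens[0]))

# Hypothetical toy expert: expert e scales its input by (e + 1).
def toy_expert(e, x):
    return [(e + 1) * v for v in x]

tokens = [[1.0, 2.0], [3.0, 4.0]]
topk_experts = [[0, 3], [1, 2]]          # top-2 experts chosen per token
topk_weights = [[0.5, 0.5], [0.25, 0.75]]
experts_per_rank = 2                      # experts 0-1 on rank 0, 2-3 on rank 1

output = run_moe(tokens, topk_experts, topk_weights, experts_per_rank, toy_expert)
print(output)
```

In this toy example, token 0 is sent to both ranks because its experts (0 and 3) live on different ranks. That cross-rank traffic is exactly what the all-to-all kernels exist to make fast.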
