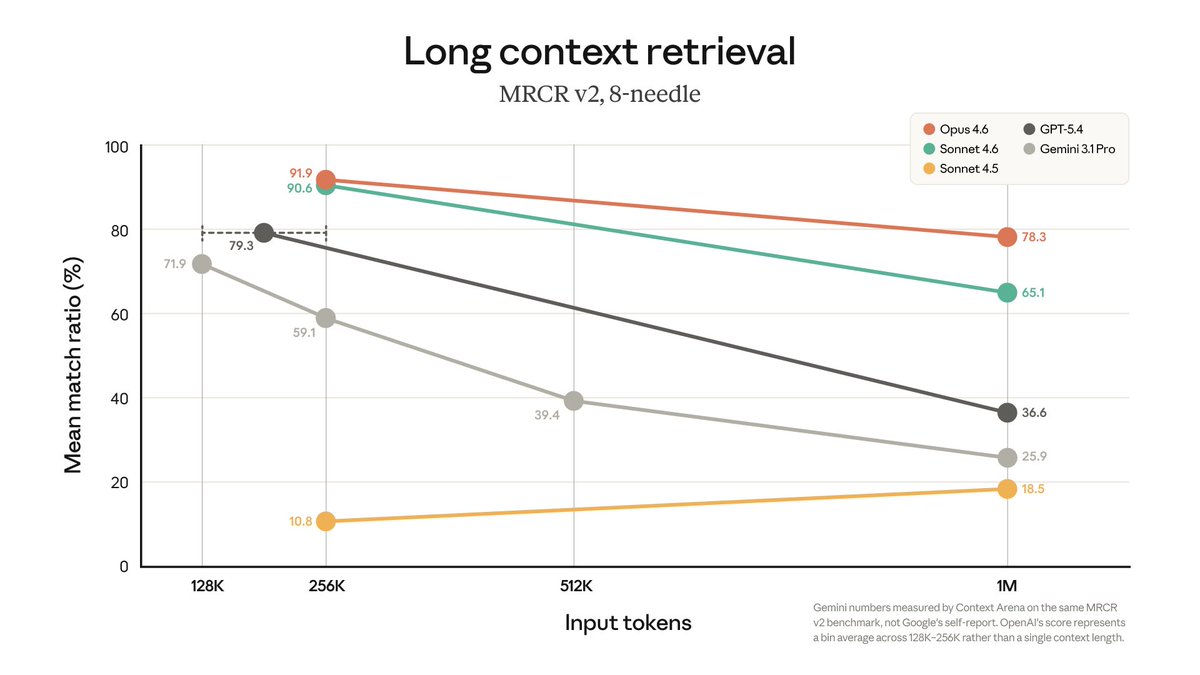

Third-party MRCR v2 results put Claude Opus 4.6 at a 78.3% match ratio at 1M tokens, ahead of Sonnet 4.6, GPT-5.4, and Gemini 3.1 Pro. If you are testing long-context agents, measure retrieval quality and task completion, not just advertised context window size.

Anthropic has moved 1M-token context from preview status into general availability for Claude Opus 4.6 and Claude Sonnet 4.6. In the same announcement thread, the rollout post says the models can ingest up to 600 images, which expands the practical input budget beyond text-heavy agent runs.

The rollout is already showing up in developer tooling. In a Claude Code screenshot, Opus 4.6 appears as "Opus 4.6 (1M context) · Claude Max," while a supporting roundup also describes the 1M window as generally available for both 4.6 models. That matters operationally because it turns long-context testing into something engineers can actually run inside coding workflows rather than a limited-access benchmark claim.

The strongest signal in the evidence is not the 1M number but the retrieval curve. The MRCR v2 chart shows Opus 4.6 at 91.9% mean match ratio at 256K tokens and 78.3% at 1M, while Sonnet 4.6 drops to 65.1%, GPT-5.4 to 36.6%, and Gemini 3.1 Pro to 25.9%. The same chart notes Gemini's numbers were measured by Context Arena on the same benchmark rather than using vendor self-report, and OpenAI's score is a "bin average" across 128K-256K rather than a single fixed context length.

That distinction is why retrieval quality matters more than raw advertised window size. As one reply puts it, "If you can't get accurate retrieval," the benefit of a larger window is limited. The supporting comparison post also calls out GPT-5.4 as a "regression" on long-context behavior relative to an earlier OpenAI chart at 256K, reinforcing that context-window expansion does not automatically preserve recall.

The most concrete field report here is a side-by-side CLI comparison. In that screenshot, Gemini CLI warns that sending a message "might exceed the context window limit," while Claude Code continues processing with Opus 4.6 at 1M context. The author claims Claude handled a larger project prompt that Gemini would not submit, which is anecdotal, but the screenshot does show a real difference in tool behavior under large inputs.

For code agents, that changes the failure mode. Instead of deciding which repo slice, docs subset, or prior conversation chunk to drop, teams can keep more of the working set in one session. Another practitioner post frames it as "entire codebases" and "complete conversation history" staying in context for complex refactors. Independent eval work is still catching up, though: one evaluator said his team hit "a couple speed bumps" while trying to get Opus 1M working in their own test setup.

This is huge, literally. 1M context is now generally available for Claude Sonnet/Opus 4.6 and it can ingest 600 images. So it can "watch" a video - take a video and split out the frames then upload. Not sure how big the images can be, probably small to leave room for a convo. Show more

Claude Opus 4.6 with 1M context in Claude Code is a massive upgrade. 5x the context window we had before yesterday's update. Entire codebases. Full documentation. Complete conversation history. All in context. No more truncation. No more lost context mid-session. This changes Show more

Anthropic consistently manages to impress, particularly with its ability to maintain attention and reasoning across extended contexts. Even outperforming ex-context-king Google, by a lot.

Opus 4.6 is by far the best 1 million token context model out there at 78% accuracy at 1 mil toks. The only model that’s even close is Sonnet 4.6. GPT-5.4 is also a regression in long context compared to GPT-5.2 at 256k.

Claude Opus 4.6 with 1M context is a game changer. I dropped in 2.1 million tokens into Gemini CLI. It refused to submit.Same prompt into Claude. No problem. Already working. That's the difference. Claude just handles it.