FlashAttention-4 targets Blackwell bottlenecks with redesigned pipelines, software-emulated exponential work, and lower shared-memory traffic, reaching up to 1613 TFLOPs/s on B200. If you serve long-context models on B200 or GB200, benchmark it against your current cuDNN and Triton kernels before optimizing elsewhere.
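To compare kernels fairly, convert each backend's measured latency into TFLOPs/s with the same FLOP count. Here is a minimal sketch, assuming the common convention of counting forward attention as two matmuls (QK^T and PV), which gives 4 · batch · heads · seqlen_q · seqlen_k · head_dim FLOPs, halved under causal masking. The function names are hypothetical, and the timing itself is left to your own harness.

```python
def attention_forward_flops(batch, heads, seqlen_q, seqlen_k, head_dim, causal=False):
    """FLOPs for one forward attention pass (hypothetical helper).

    Two matmuls (Q @ K^T and P @ V), each costing
    2 * seqlen_q * seqlen_k * head_dim FLOPs per head per batch element.
    Softmax and other non-matmul work are not counted, by convention.
    """
    flops = 4 * batch * heads * seqlen_q * seqlen_k * head_dim
    # Causal masking skips roughly half of the score matrix.
    return flops // 2 if causal else flops


def tflops_per_second(flops, seconds):
    """Convert a FLOP count and a measured wall time into TFLOPs/s."""
    return flops / seconds / 1e12


if __name__ == "__main__":
    # Illustrative shape only; plug in your own measured latency per backend.
    flops = attention_forward_flops(batch=1, heads=16, seqlen_q=8192,
                                    seqlen_k=8192, head_dim=128)
    measured_seconds = 1e-3  # placeholder value, not a real measurement
    print(f"{tflops_per_second(flops, measured_seconds):.1f} TFLOPs/s")
```

Using one FLOP formula across cuDNN, Triton, and FlashAttention-4 keeps the comparison tied to latency alone, so differences in how each library reports throughput do not skew the result.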

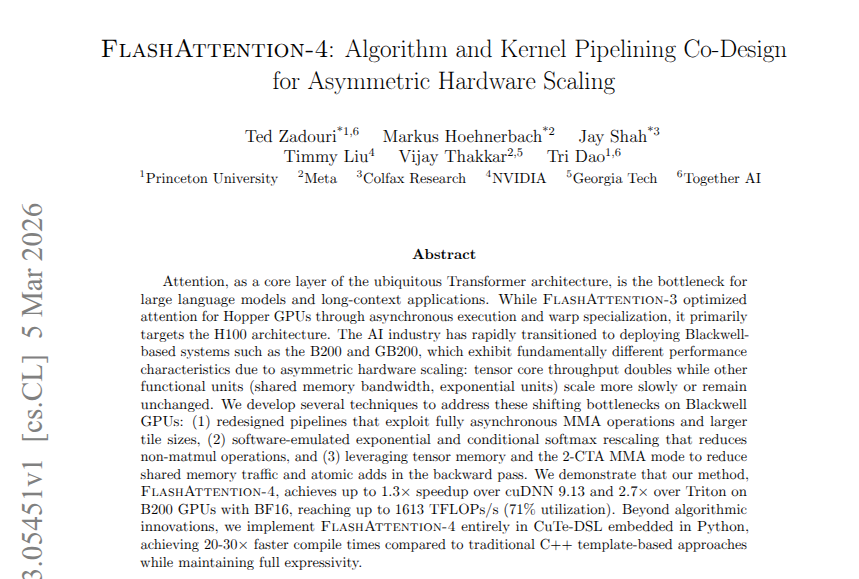

FlashAttention-4 is not pitched as a generic attention refresh. It is a Blackwell-specific response to “asymmetric hardware scaling,” where matrix math got much faster but memory movement and non-matmul units did not keep up, as the abstract screenshot spells out. That shifts the bottleneck away from pure compute and toward shared-memory traffic, softmax, and other non-matmul operations.

The paper summary in the thread says the kernel attacks that bottleneck three ways: overlapping math and memory loading with a new asynchronous schedule, moving some exponential work into software-emulated paths, and using tensor memory plus 2-CTA MMA mode to cut shared-memory traffic and atomic adds in the backward pass. The same thread says those changes push B200 to 1600+ TFLOPs/s, ahead of both cuDNN and Triton on the reported setup.
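To make "software-emulated" exponentials concrete, here is a minimal sketch of one standard way to compute 2^x without a hardware exponential unit: split x into an integer part and a small fraction, approximate 2^fraction with a polynomial, then scale by the integer power of two. The thread does not give the kernel's actual scheme, so the approach, the function name `exp2_emulated`, and the Taylor coefficients below are all assumptions. The real kernel runs on the GPU, and this plain Python version only illustrates the arithmetic.

```python
import math

LN2 = math.log(2.0)
# Taylor coefficients of 2**f = exp(f * ln 2): (ln 2)**k / k!
# Illustrative choice only; a tuned kernel would likely use minimax coefficients.
COEFFS = [LN2**k / math.factorial(k) for k in range(6)]


def exp2_emulated(x: float) -> float:
    """Approximate 2**x with range reduction plus a degree-5 polynomial."""
    n = math.floor(x + 0.5)  # nearest integer to x
    f = x - n                # fractional remainder in [-0.5, 0.5)
    # Evaluate the polynomial with Horner's rule (maps onto fused multiply-adds).
    p = 0.0
    for c in reversed(COEFFS):
        p = p * f + c
    # Multiply by 2**n exactly by adjusting the floating-point exponent.
    return math.ldexp(p, n)


if __name__ == "__main__":
    # Softmax inputs are <= 0 after subtracting the row maximum.
    for x in (-10.3, -1.5, -0.25, 0.0):
        approx, exact = exp2_emulated(x), 2.0 ** x
        print(f"x={x:6.2f}  rel_err={abs(approx - exact) / exact:.2e}")
```

The polynomial uses only multiplies and adds, so this kind of emulation can run on general-purpose arithmetic units instead of the special-function units that have not scaled with tensor-core throughput.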

One implementation detail stands out beyond the speedup number. The abstract screenshot says the whole kernel was written in CuTe-DSL embedded in Python, with 20-30x faster compile times than C++ template-based implementations while keeping full expressivity. For engineers tuning long-context inference or training on B200 and GB200, that makes this a story about iteration speed as much as raw throughput.

The paper introduces FlashAttention-4 to make AI run faster on the newest generation of computer chips. Researchers from Princeton University, Meta, NVIDIA, and more have developed clever new pipelines, re-engineered core computations, and optimized memory usage to master the …