Mixedbread introduced Wholembed v3 as a retrieval model for text, image, video, audio, and multilingual search. Benchmark it on fine-grained retrieval tasks if single-vector embeddings have been collapsing in your pipeline.

Mixedbread is introducing Wholembed v3 as a state-of-the-art retrieval model “across all modalities and 100+ languages,” according to the launch wording. The framing matters for engineers because the claim goes beyond higher text embedding quality: it is one model for text, image, video, audio, and multilingual search workloads.

The release is also being presented as an omnimodal retrieval system built from “a million different moving parts,” in the words of a team reply, with Mixedbread’s team saying they are “fairly confident it’s the best multimodal model that exists.” Public access details are still thin, but the academic-access post says the company is willing to support research projects even before a formal program exists.

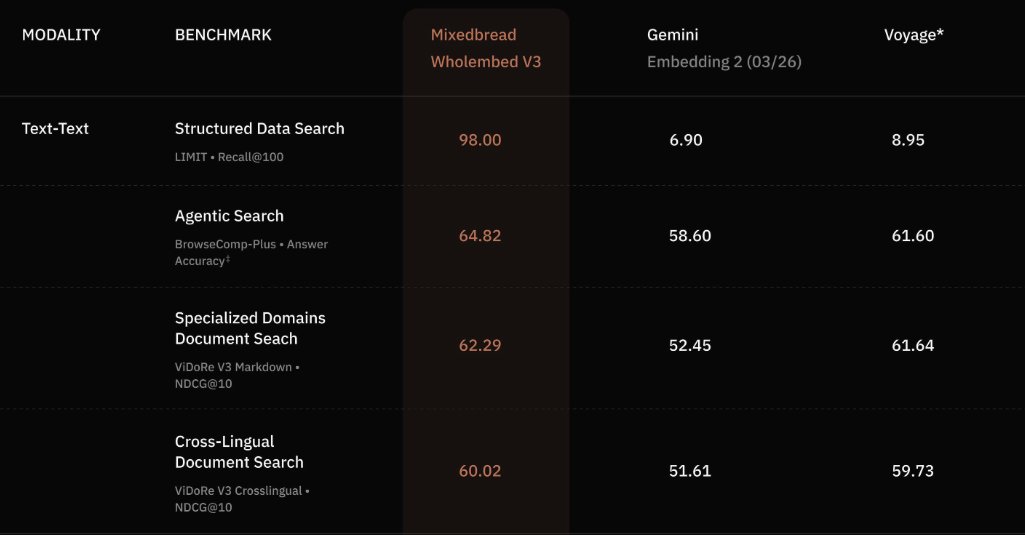

The public benchmark image shared in the main reaction post shows unusually large gaps on retrieval-heavy tasks. On Structured Data Search with LIMIT and Recall@100, Wholembed v3 posts 98.00, while Gemini Embedding 2 scores 6.90 and Voyage 8.95; on BrowseComp-Plus Agentic Search it scores 64.82 versus 58.60 and 61.60; on ViDoRe V3 Markdown it reaches 62.29 versus 52.45 and 61.64; and on ViDoRe V3 Crosslingual it lands at 60.02 versus 51.61 and 59.73.
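For readers who want to run the same kind of check on their own data, here is a minimal sketch of Recall@k in plain Python. It is not Mixedbread's evaluation harness: the function names, the document IDs, and the input layout (`runs` as ranked ID lists per query, `qrels` as sets of relevant IDs per query) are all assumptions made for illustration. Scores come out in the 0 to 1 range, so multiply by 100 to compare with the percentages quoted above.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("recall is undefined when there are no relevant documents")
    top_k = set(ranked_ids[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(runs, qrels, k):
    """Average Recall@k over every query that has relevance judgments.

    runs:  dict mapping query id -> list of doc ids, best first (hypothetical layout)
    qrels: dict mapping query id -> set of relevant doc ids (hypothetical layout)
    """
    scores = [recall_at_k(runs.get(qid, []), rel, k) for qid, rel in qrels.items()]
    return sum(scores) / len(scores)


# Toy data, invented purely for illustration.
runs = {
    "q1": ["d3", "d1", "d7", "d2"],
    "q2": ["d9", "d8", "d4"],
}
qrels = {
    "q1": {"d1", "d2"},
    "q2": {"d4"},
}

print(mean_recall_at_k(runs, qrels, k=2))
print(mean_recall_at_k(runs, qrels, k=100))
```

With k as large as 100, a low score means the relevant documents are not surfacing anywhere near the top of the ranking, which is why a single-digit Recall@100 reads as a breakdown rather than a narrow loss.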

The biggest caveat is that at least one of those tests is explicitly adversarial to standard embeddings. Mixedbread’s Benjamin Clavié says in a follow-up reply that the structured-search benchmark is “designed to test specific breaking cases of embedding model,” making it “very much an all of nothing situation.” That does not negate the result; it narrows its interpretation. If your pipeline fails on fine-grained fields, tables, or other compositional retrieval, the cropped benchmark image suggests Wholembed v3 is aimed directly at those failure modes.

The practical argument from practitioners is less about headline leaderboard wins than about generalization. Antoine Chaffin writes in his thread that multi-vector models “crush benches” but, more importantly, “generalize very well” and make “a huge difference in production.” In a domain-generalization reply in the same thread, he says he is seeing “random SOTAs on new domain” with older models, implying the new release may matter even more off-benchmark than on-benchmark.

That reading lines up with how retrieval specialists are reacting. A separate practitioner post reacting to the launch calls Mixedbread “world-leading experts in late interaction retrieval” and says late interaction done well can make common embedding models “look like they don’t work.” For engineers, the immediate takeaway is not that single-vector embeddings are obsolete everywhere; it is that workloads with brittle matching, multilingual retrieval, or multimodal search now have a new model claiming large gains, and the strongest evidence so far points to late-interaction-style retrieval as the reason.
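To make the single-vector versus late-interaction distinction concrete, here is a toy sketch in plain Python. It uses ColBERT-style MaxSim scoring as a stand-in for late interaction in general; the launch material does not say how Wholembed v3 scores documents, so nothing here should be read as its actual method. The vectors, function names, and the mean-pooling baseline are all invented for illustration.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v] if norm else list(v)


def mean_pool(vectors):
    """Collapse a list of token vectors into one unit-length vector."""
    dim = len(vectors[0])
    mean = [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]
    return normalize(mean)


def single_vector_score(query_vecs, doc_vecs):
    """Baseline: cosine similarity between the two pooled vectors."""
    return dot(mean_pool(query_vecs), mean_pool(doc_vecs))


def maxsim_score(query_vecs, doc_vecs):
    """Late interaction: each query vector keeps its best match in the document."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)


# Hypothetical 2-d "token" embeddings, invented for illustration.
s = math.sqrt(0.5)
query = [[1.0, 0.0], [0.0, 1.0]]   # two distinct query terms
doc_a = [[1.0, 0.0], [0.0, 1.0]]   # contains an exact match for each term
doc_b = [[s, s], [s, s]]           # only a blend of the two terms

for name, doc in (("doc_a", doc_a), ("doc_b", doc_b)):
    print(name, single_vector_score(query, doc), maxsim_score(query, doc))
```

In this toy case the pooled scores for the two documents tie (up to floating-point rounding) because averaging erases which terms were actually present, while MaxSim ranks `doc_a` above `doc_b`. That is the kind of fine-grained distinction the practitioners quoted above credit to multi-vector retrieval, though a toy example says nothing about how large the effect is on real data.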

Introducing Mixedbread Wholembed v3, our new SOTA retrieval model across all modalities and 100+ languages. Wholembed v3 brings best-in-class search to text, audio, images, PDFs, videos... You can now get the best retrieval performance on your data, no matter its format.

By the way, I’ve had some questions about it: we are more than happy to support academic access for research projects. We have nothing formal in place yet but feel free to reach out to me directly for a bit.

I've been eagerly awaiting this release from the @mixedbreadai folks. They're world-leading experts in late interaction retrieval. And today they remind us that late interaction done well makes all your favorite embedding models look like they don't work.

This jump is just crazy

And I think it should be even more impressive in practice Multi-vector models crush benches, yes But most importantly, it does generalize very well, and it makes such an huge difference in production

Anyone needed a proof that multi-vector is going to win? Please have a look at what it looks like when a cracked team try it hard An omni model that actually **crushes** anything that exists, on any modality, on any domain Congratulations to the team, this is truly impressive
