Harbor opened dataset publication to any user, with public and private visibility options and a registry that already exposes tens of thousands of tasks. The update makes benchmark and eval datasets easier to share, rerun, and standardize across teams using the same format.

The change is simple but material for teams that publish evals: Harbor now lets any user publish a dataset into the registry, instead of treating the registry as a mostly curated destination. In Harbor's launch thread, Alex Shaw describes the upgrade as making a dataset "available to every Harbor user," while the linked registry announcement frames the product as a distribution layer for Harbor tasks and datasets.

That matters because Harbor is standardizing both the packaging and the handoff. Shaw's format overview says the Harbor format already makes it easy to share data; the new registry adds a discoverable place where other teams can actually fetch and run the same artifact. Harbor also added access control rather than making publication synonymous with public release: the privacy update says datasets can be public or private, with private visibility scoped to an organization.

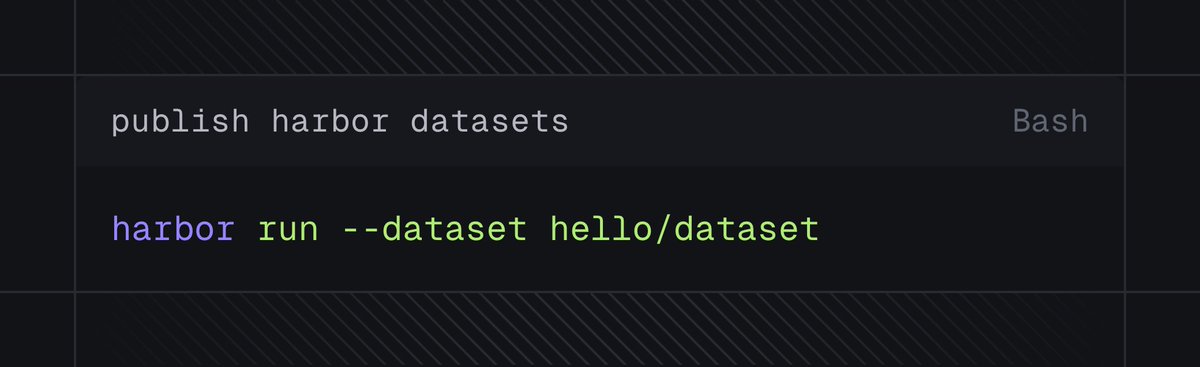

The workflow is CLI-first. The publish screenshot in the launch post shows a minimal path: initialize a dataset, add tasks, then run harbor publish. The linked announcement adds the surrounding steps, including authentication and the ability to run published tasks or datasets back through the Harbor CLI.

Harbor is also emphasizing reproducibility over ad hoc sharing. The announcement says tasks are identified by digest, revision number, and optional tags, and the registry already exposes enough content to be useful immediately: the registry snapshot shows 74 datasets and 37,318 tasks. A follow-up SWE-Atlas example shows Scale AI's SWE-Atlas Test Writing and Codebase QnA datasets already published in Harbor format and runnable with harbor run -d. That is the clearest sign yet that Harbor wants benchmark exchange to feel closer to package distribution than to bespoke repo setup.
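To make the digest, revision, and tag scheme concrete, here is a minimal Python sketch of how such identifiers could behave in a toy in-memory registry. It is an illustration only, not Harbor's implementation: `TaskRecord`, `ToyRegistry`, and `content_digest` are hypothetical names, and the use of SHA-256 is an assumption, since the announcement does not say how digests are computed.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field


@dataclass
class TaskRecord:
    """Hypothetical registry entry; not Harbor's actual schema."""
    digest: str                      # content hash: same bytes, same digest
    revision: int                    # monotonically increasing counter
    tags: set[str] = field(default_factory=set)  # optional, movable labels


def content_digest(payload: bytes) -> str:
    # Assumption: SHA-256 over the raw task bytes.
    return "sha256:" + hashlib.sha256(payload).hexdigest()


class ToyRegistry:
    """In-memory stand-in for a registry holding versions of one task."""

    def __init__(self) -> None:
        self._records: list[TaskRecord] = []

    def publish(self, payload: bytes, tags: tuple[str, ...] = ()) -> TaskRecord:
        digest = content_digest(payload)
        # A tag points at exactly one revision, so detach it from older ones.
        for record in self._records:
            record.tags -= set(tags)
        # Republishing identical content reuses the existing revision.
        for record in self._records:
            if record.digest == digest:
                record.tags |= set(tags)
                return record
        record = TaskRecord(digest, len(self._records) + 1, set(tags))
        self._records.append(record)
        return record

    def resolve(self, ref: str | int) -> TaskRecord:
        # A reference may be a digest, a revision number, or a tag.
        for record in self._records:
            if ref in (record.digest, record.revision) or ref in record.tags:
                return record
        raise KeyError(ref)


registry = ToyRegistry()
first = registry.publish(b"task v1", tags=("latest",))
second = registry.publish(b"task v2", tags=("latest",))

# The tag moves with each publish; digest and revision stay pinned.
assert registry.resolve("latest") is second
assert registry.resolve(first.digest) is first
assert registry.resolve(1) is first
```

The design point is that a digest or revision number names an immutable artifact, while a tag is a convenience pointer that can move. Pinning an eval run to a digest is what would let two teams confirm they ran the same task.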

The Harbor registry is getting an upgrade. Now, anyone can publish to the registry to make their dataset available to every Harbor user:

Browse the 74 datasets and 37,318 tasks already registered. registry.harborframework.com

Measure how well your agent writes unit tests using SWE-Atlas Test Writing from @scale_AI. SWE-Atlas Test Writing and SWE-Atlas Codebase QnA both ship in the Harbor format and are available on the Harbor registry.

Today we’re releasing Test Writing, the second benchmark in the SWE Atlas evaluation suite for coding agents. This benchmark measures a model’s ability to write tests through multi-step, professional-grade evaluation. Frontier models score less than 45%. As coding agents