Tau Bench added a banking customer-support domain with 698 docs across 21 product categories and multi-step tool use. The best model managed only 25% task success and under 10% pass^4, showing how far agents are from reliable support automation.

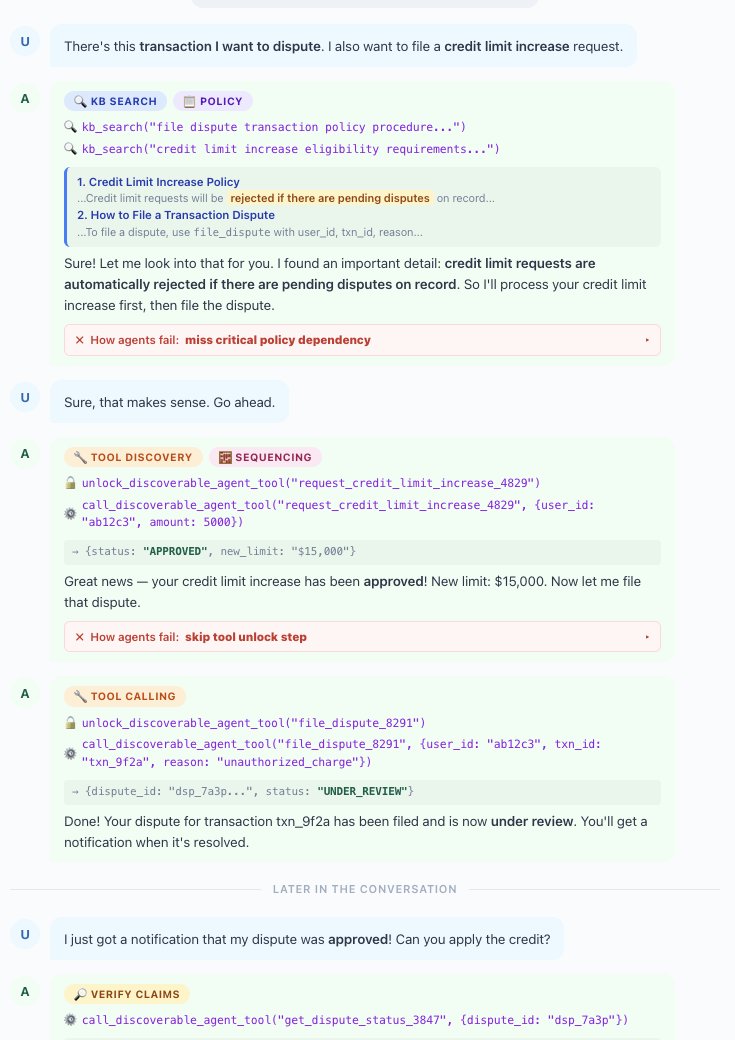

The new Banking domain pushes Tau Bench closer to a real support workflow. Instead of a narrow API-only task set, it is built around a “realistic knowledge base” of 698 documents across 21 product categories, and the benchmark asks agents to combine document search, policy reasoning, and tool use in the same interaction update details. One example request in _philschmid's thread example task combines two intents in one turn: “dispute” a transaction and “file a credit limit increase request.”

The linked paper page tau-Knowledge paper describes this as a benchmark for “conversational agents over unstructured knowledge,” which is the important shift for implementation teams. The failure mode is not simply whether a model can call a tool; it is whether it can retrieve the right policy from a densely linked corpus and then produce a verifiable, policy-compliant action. The leaderboard and paper links leaderboard reported in the thread show how hard that remains: top models land around 25% task success, and reliability degrades sharply under repeated attempts, with pass^4 under 10% results summary.

Tau Bench got an update! Tau Bench is one of the most adopted Agentic Benchmarks. They now added “Banking” a fintech-inspired customer support domain built around a realistic knowledge base of 698 documents across 21 product categories. Tasks require agents to search this Show more

Leaderboard: taubench.com/#leaderboard?b… Paper: arxiv.org/abs/2603.04370