Nous Research shipped Hermes Agent 0.6.0 with multi-agent profiles, release docs, and OpenWebUI tool-call streaming through its OpenAI-compatible endpoint. One install can now host separate agents with isolated memory, skills, and gateway connections.

The headline feature in Hermes 0.6.0 is profiles. According to the release notes, each profile is a fully isolated Hermes instance with its own configuration, memory, sessions, skills, gateway service, and state, so a single installation can host multiple agents without credential or context collisions. The notes also describe strict token-locked isolation plus create, switch, export, and import flows for profile management. Teknium’s profiles post summarized it as “as many independent bots” with separate “memory, gateway connections, skills, chat history, everything,” and pointed users to update in place with `hermes update`.

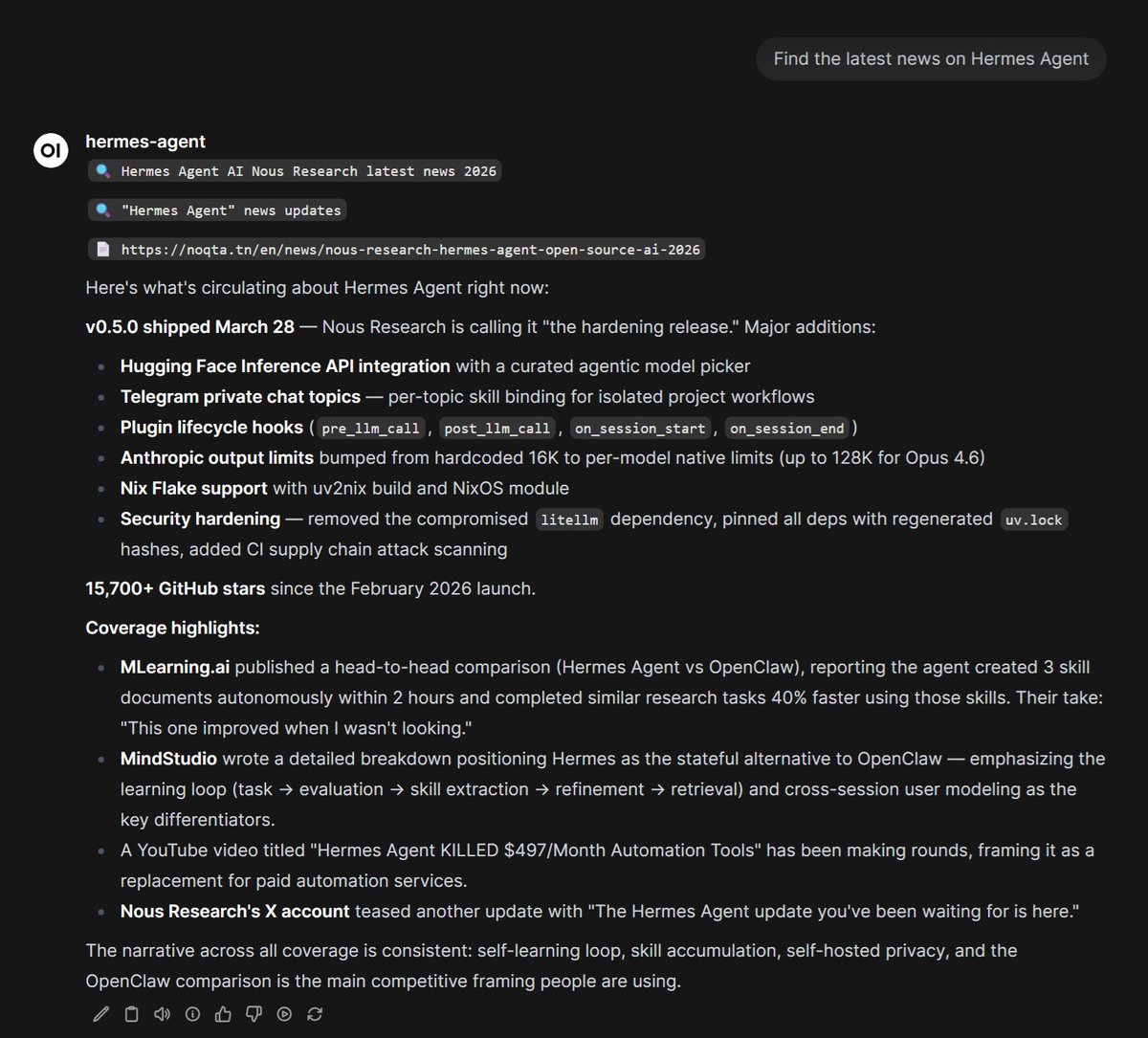

The release widens deployment options beyond profile isolation. The full changelog adds an MCP server mode that exposes Hermes conversations and sessions to MCP-compatible clients including Claude Desktop, Cursor, and VS Code over stdio or HTTP. The same release also adds an official Dockerfile and an ordered fallback provider chain, which the changelog says can automatically fail over when the first inference provider fails.
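The ordered fallback behavior described above can be illustrated with a minimal Python sketch. It is not Hermes code and does not use Hermes configuration: the function name `complete_with_fallback`, the exception class, and the provider callables are all hypothetical, and the only idea taken from the changelog is "try providers in order, move on when one fails."

```python
from typing import Callable, List, Sequence, Tuple

# A provider is a (name, callable) pair; the callable takes a prompt and
# returns a completion string. Both are hypothetical stand-ins.
Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has raised an error."""


def complete_with_fallback(providers: Sequence[Provider], prompt: str) -> Tuple[str, str]:
    """Try each provider in order and return (provider_name, completion)."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure moves on to the next provider
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def flaky_provider(prompt: str) -> str:
    # Simulates a first-choice provider that is currently down.
    raise ConnectionError("upstream unavailable")


def backup_provider(prompt: str) -> str:
    # Simulates a healthy second-choice provider.
    return f"echo: {prompt}"


if __name__ == "__main__":
    chain = [("primary", flaky_provider), ("backup", backup_provider)]
    print(complete_with_fallback(chain, "hi"))
```

Keeping the chain as an ordered list makes the priority explicit, and collecting the per-provider errors means a total failure still reports why each hop was skipped.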

Nous also used 0.6.0 to deepen chat-platform support. In the release notes, the new and expanded adapters include Feishu/Lark, WeCom, Slack multi-workspace OAuth, and Telegram webhook support, pushing Hermes further toward multi-channel agent operations rather than a single local CLI workflow.

The first post-release workflow demo is OpenWebUI integration with streamed tool calls. Teknium’s streaming demo says Hermes now has “tool call streaming” working in OpenWebUI through its OpenAI endpoint, which matters because it exposes intermediate tool activity instead of making tool-using runs feel like opaque long-polls.
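To make "streamed tool calls" concrete, here is a rough Python sketch of how a client could fold an OpenAI-style chat completions stream into text plus tool-call records. It assumes the endpoint emits standard OpenAI streaming chunks (`data: {...}` lines with `choices[].delta`, terminated by `data: [DONE]`); the source does not say exactly how Hermes encodes tool progress, so treat the `delta.tool_calls` shape and the `web_search` tool name as assumptions.

```python
import json
from typing import Dict, Iterable, List


def summarize_stream(lines: Iterable[str]) -> Dict:
    """Collect assistant text and tool-call fragments from OpenAI-style SSE lines."""
    text_parts: List[str] = []
    tool_calls: Dict[int, Dict[str, str]] = {}  # keyed by the chunk's tool-call index
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alives and SSE comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            delta = choice.get("delta", {})
            if delta.get("content"):
                text_parts.append(delta["content"])
            for call in delta.get("tool_calls") or []:
                slot = tool_calls.setdefault(call.get("index", 0), {"name": "", "arguments": ""})
                fn = call.get("function") or {}
                # Names and JSON arguments arrive in pieces; concatenate them.
                slot["name"] += fn.get("name") or ""
                slot["arguments"] += fn.get("arguments") or ""
    return {
        "text": "".join(text_parts),
        "tool_calls": [tool_calls[i] for i in sorted(tool_calls)],
    }


def _sse(delta: Dict) -> str:
    # Helper that builds one synthetic stream line for the demo below.
    return "data: " + json.dumps({"choices": [{"delta": delta}]})


# Synthetic stream; "web_search" is a hypothetical tool name.
SAMPLE_LINES = [
    _sse({"tool_calls": [{"index": 0, "function": {"name": "web_search", "arguments": '{"query": '}}]}),
    _sse({"tool_calls": [{"index": 0, "function": {"arguments": '"hermes"}'}}]}),
    _sse({"content": "Found "}),
    _sse({"content": "it."}),
    "data: [DONE]",
]

if __name__ == "__main__":
    print(summarize_stream(SAMPLE_LINES))
```

A frontend can render each tool-call fragment as it arrives instead of waiting for the final message, which is the difference between visible tool activity and an opaque wait.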

The API docs show that Hermes exposes an OpenAI-compatible HTTP server for frontends such as Open WebUI, LobeChat, LibreChat, NextChat, and ChatBox. That server supports streaming on `/v1/chat/completions`, including “real-time tool progress indicators,” and also offers `/v1/responses` for server-side conversation state via `previous_response_id`. In practice, that means Hermes can sit behind common OpenAI-format UIs while still surfacing its own tool stack for terminal commands, file operations, web search, memory, and skills.
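Server-side conversation state via `previous_response_id` can be sketched as follows. This assumes Hermes accepts the same basic request shape as the OpenAI Responses API (`model`, `input`, optional `previous_response_id`) and returns an `id` on each response. The model name, base URL, and bearer-token header are placeholders and assumptions, not values from the Hermes docs.

```python
import json
import urllib.request
from typing import Callable, Dict, List, Optional

Sender = Callable[[Dict], Dict]


def build_request(model: str, user_input: str, previous_response_id: Optional[str] = None) -> Dict:
    """Build a /v1/responses payload, linking to the prior turn when one exists."""
    payload: Dict = {"model": model, "input": user_input}
    if previous_response_id is not None:
        payload["previous_response_id"] = previous_response_id
    return payload


def run_conversation(send: Sender, model: str, turns: List[str]) -> List[Dict]:
    """Send each turn, passing the previous response id so the server keeps the history."""
    replies: List[Dict] = []
    previous_id: Optional[str] = None
    for user_input in turns:
        reply = send(build_request(model, user_input, previous_id))
        previous_id = reply["id"]
        replies.append(reply)
    return replies


def make_http_sender(base_url: str, api_key: str) -> Sender:
    """Return a sender that POSTs JSON to <base_url>/v1/responses (auth scheme is an assumption)."""
    def send(payload: Dict) -> Dict:
        request = urllib.request.Request(
            base_url.rstrip("/") + "/v1/responses",
            data=json.dumps(payload).encode("utf-8"),
            headers={
                "Content-Type": "application/json",
                "Authorization": f"Bearer {api_key}",
            },
            method="POST",
        )
        with urllib.request.urlopen(request) as response:
            return json.load(response)
    return send
```

In use, something like `run_conversation(make_http_sender(base_url, api_key), model_name, ["first question", "follow-up"])` would send only the new input on the second turn, because the client resends an id rather than the whole transcript.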

Adoption was already spiking around the release. Teknium’s usage screenshot called it Hermes Agent’s “biggest day ever,” and the attached analytics image shows OpenRouter usage climbing toward roughly 28B tokens on the latest day displayed, alongside “302B Total tokens” and “192 Models used.”

The Hermes Agent update you've been waiting for is here.

Got tool call streaming working in OpenWebUI with our openai endpoint for Hermes Agent! So badass! Get the bleeding edge feature update with a simple `hermes update` in your console!

FYI for docs on setting this up: hermes-agent.nousresearch.com/docs/user-guid…