Nous Research shipped Hermes Agent v0.6.0 with multi-agent profiles and documented tool-call streaming for Open WebUI via its OpenAI-compatible endpoint. The release makes separate bots, memory, and integrations persistent enough for longer-running personal-agent setups.

The real story here is that Hermes is getting much better at staying alive between sessions, interfaces, and channels. The official release notes frame v0.6.0 as the multi-instance release, the profiles guide shows how hard the isolation boundary is, and the API server docs make clear that Hermes is now designed to sit behind other clients. An outside take from Efficienist gets the ranking right: the old single-agent limit was the biggest product gap, and v0.6.0 closes it.

Profiles are the headline because they fix a structural problem. Before this, Hermes could be persistent, but it still wanted to be your one agent. Now each profile gets its own `config.yaml`, `.env`, `SOUL.md`, memories, sessions, skills, cron jobs, and state database, according to the profiles docs.

That means a coding agent, a personal assistant, and a research bot can share one install without sharing identity or memory. The token-lock detail matters most. The release notes say two profiles cannot grab the same bot credential, which is exactly the kind of guardrail multi-bot setups need once you start wiring Slack, Telegram, or other gateways into production-ish workflows.
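The token-lock guardrail can be pictured as a small registry that refuses a second claim on the same credential. What follows is a minimal sketch under that assumption, not Hermes' actual implementation: `CredentialRegistry`, `CredentialLockError`, and the profile names are hypothetical, and a real system would presumably persist the lock rather than keep it in memory.

```python
import hashlib


class CredentialLockError(RuntimeError):
    """Raised when a second profile tries to claim an already-held credential."""


class CredentialRegistry:
    """Hypothetical in-memory map from a credential fingerprint to its owning profile."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}

    @staticmethod
    def _fingerprint(token: str) -> str:
        # Keep a hash instead of the raw token so the registry never stores secrets.
        return hashlib.sha256(token.encode("utf-8")).hexdigest()

    def acquire(self, profile: str, token: str) -> None:
        """Claim the token for a profile; re-acquiring by the same profile is a no-op."""
        key = self._fingerprint(token)
        owner = self._owners.setdefault(key, profile)
        if owner != profile:
            raise CredentialLockError(f"credential already held by profile {owner!r}")

    def release(self, profile: str, token: str) -> None:
        """Free the token, but only if this profile is the current owner."""
        key = self._fingerprint(token)
        if self._owners.get(key) == profile:
            del self._owners[key]
```

With this shape, a second profile that is configured with the same bot token fails loudly at startup instead of silently competing for the same gateway connection.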

The command model is also simple enough to stick. Create with `hermes profile create`, switch with `hermes -p`, and export or import profiles for reuse. That is a much cleaner mental model than bolting multi-agent behavior onto one mutable home directory.

MCP server mode is easy to miss because profiles grab the attention, but it may be the more strategic change. v0.6.0 adds `hermes mcp serve`, which exposes Hermes conversations and sessions to MCP-compatible clients over stdio or Streamable HTTP, per the release notes.

That shifts Hermes from “agent you run” toward “agent system other software can browse and steer.” The release describes browsing conversations, reading messages, searching sessions, and managing attachments through MCP. If you already live in Claude Desktop, Cursor, or VS Code, that is a cleaner integration path than forcing everything through Hermes' own UI surfaces.

For engineers, the important pattern is separation: Hermes keeps the memory, skills, and session state, while other clients become interchangeable control planes.

Teknium's Open WebUI clip matters because it shows the API server doing more than basic compatibility. The API server docs say Hermes exposes an OpenAI-style `/v1` endpoint, including `POST /v1/chat/completions` and `POST /v1/responses`, while keeping Hermes tools available behind the interface.

The key detail is streaming. The docs say tool progress indicators appear inline during streamed responses, and Teknium explicitly called out tool-call streaming in Open WebUI. That makes the GUI story much better. Many “OpenAI-compatible” backends technically connect to chat frontends but fall apart once tools enter the loop. Hermes looks closer to a drop-in agent backend for Open WebUI, LobeChat, LibreChat, and similar clients.
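To make the streaming point concrete, here is a rough sketch of how a client might consume an OpenAI-style streamed chat completion. It assumes Hermes emits the standard server-sent-event format (`data:` lines carrying JSON chunks, terminated by `data: [DONE]`) and that tool progress arrives as ordinary content deltas. Neither assumption is confirmed by the source beyond the word "inline", and `iter_content_deltas` is a hypothetical helper name.

```python
import json
from typing import Iterable, Iterator


def iter_content_deltas(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style streamed chat-completion lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and SSE comment lines
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # conventional end-of-stream sentinel
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            # "delta" may be missing or null; "content" may be absent on role-only chunks.
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text
```

A frontend that renders each fragment as it arrives would show any inline progress text in the same place as the model's answer, which is what makes the tool loop visible in a chat UI.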

There is also a practical architecture benefit here:

- `chat/completions` gives you stateless compatibility with existing OpenAI-style clients.
- `responses` gives you server-side conversation state.

That mix is what makes the release feel more operational than cosmetic.
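The difference between the two endpoints shows up in what the client has to send. The sketch below only builds the requests and does not send them. `BASE_URL`, the port, `MODEL`, and the placeholder API key are assumptions for illustration, and `previous_response_id` is the field OpenAI's Responses API uses to chain turns; whether Hermes honors it in exactly this way should be checked against the API server docs.

```python
import json
import urllib.request
from typing import Optional

BASE_URL = "http://localhost:8000/v1"  # assumption: placeholder host and port
MODEL = "hermes-agent"                 # assumption: placeholder model name


def build_request(path: str, payload: dict, api_key: str = "not-needed") -> urllib.request.Request:
    """Build (but do not send) a JSON POST request against the /v1 API."""
    return urllib.request.Request(
        url=f"{BASE_URL}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def chat_completions_request(history: list) -> urllib.request.Request:
    # Stateless: the client resends the full message history on every turn.
    return build_request("/chat/completions", {"model": MODEL, "messages": history})


def responses_request(user_input: str, previous_response_id: Optional[str] = None) -> urllib.request.Request:
    # Stateful: the client sends only the new input plus a pointer to the prior turn.
    payload = {"model": MODEL, "input": user_input}
    if previous_response_id is not None:
        payload["previous_response_id"] = previous_response_id
    return build_request("/responses", payload)
```

Passing either request to `urllib.request.urlopen` would perform the call. The design point is that the first shape works with any existing OpenAI-style client, while the second lets the server own the conversation.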

The rest of v0.6.0 reads like a project preparing for people to leave it running. The changelog lists an official Docker container, ordered fallback provider chains, Slack multi-workspace OAuth, Telegram webhook mode, Feishu/Lark support, and WeCom support.

Those are not flashy features, but they are the features that decide whether an agent survives contact with real usage. Docker lowers the friction for VPS and homelab installs. Provider failover matters when one model endpoint starts erroring at 2 a.m. Multi-workspace Slack support and Telegram webhook mode are the kind of plumbing changes you only prioritize once people are actually trying to run one agent system across multiple teams and channels.
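An ordered fallback chain is a simple pattern: try each provider in its configured order and return the first success. The following is a generic sketch of that idea, not Hermes' implementation. `complete_with_fallback`, `AllProvidersFailed`, and the provider callables are hypothetical names, and a production version would likely add timeouts, retry limits, and logging.

```python
from typing import Callable, Sequence

# A provider is a (name, callable) pair; the callable takes a prompt and returns text.
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has errored."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Return (provider_name, completion) from the first provider that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider failure moves on to the next entry
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

Returning the provider name alongside the completion makes it easy to see after the fact which endpoint actually served a request.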

Teknium's OpenRouter usage screenshot is not proof of durability by itself, but it does show the release landing amid meaningful demand. Combined with the emphasis on profiles, API compatibility, and gateway reliability, v0.6.0 looks like Hermes' clearest move yet from interesting personal agent to deployable personal-agent platform.

It's FINALLY HERE! Multi Agent Profiles so you can have as many independent bots with their own memory, gateway connections, skills, chat history, everything! To use: Run `hermes update` and look for multi agent profiles. User Guide: hermes-agent.nousresearch.com/docs/user-guid…

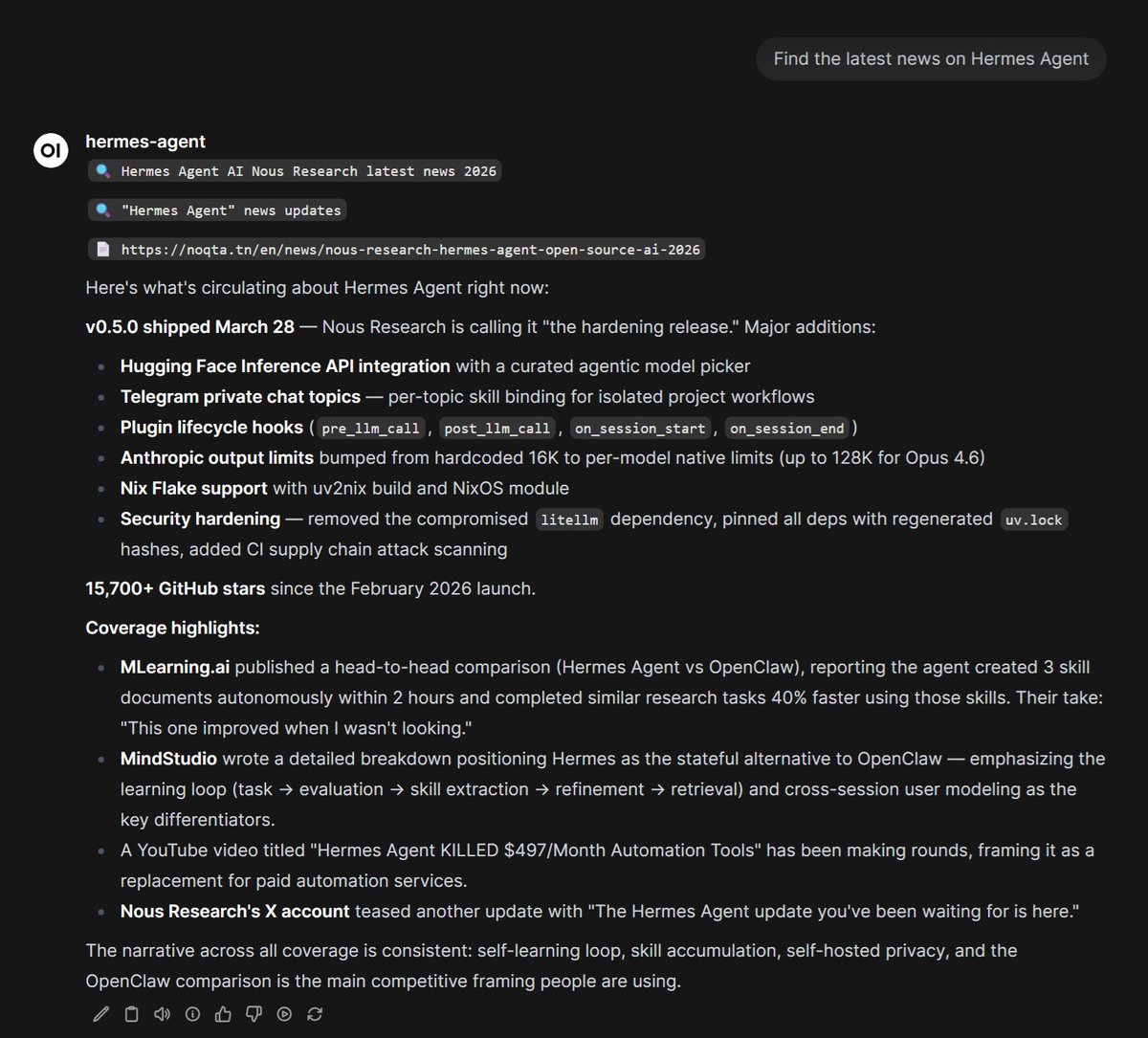

The Hermes Agent update you've been waiting for is here.

Full v0.6.0 changelog github.com/NousResearch/h…

Got tool call streaming working in OpenWebUI with our openai endpoint for Hermes Agent! So badass! Get the bleeding edge feature update with a simple `hermes update` in your console!

FYI for docs on setting this up: hermes-agent.nousresearch.com/docs/user-guid…

Biggest day ever for Hermes Agent use! Thanks everyone!!!