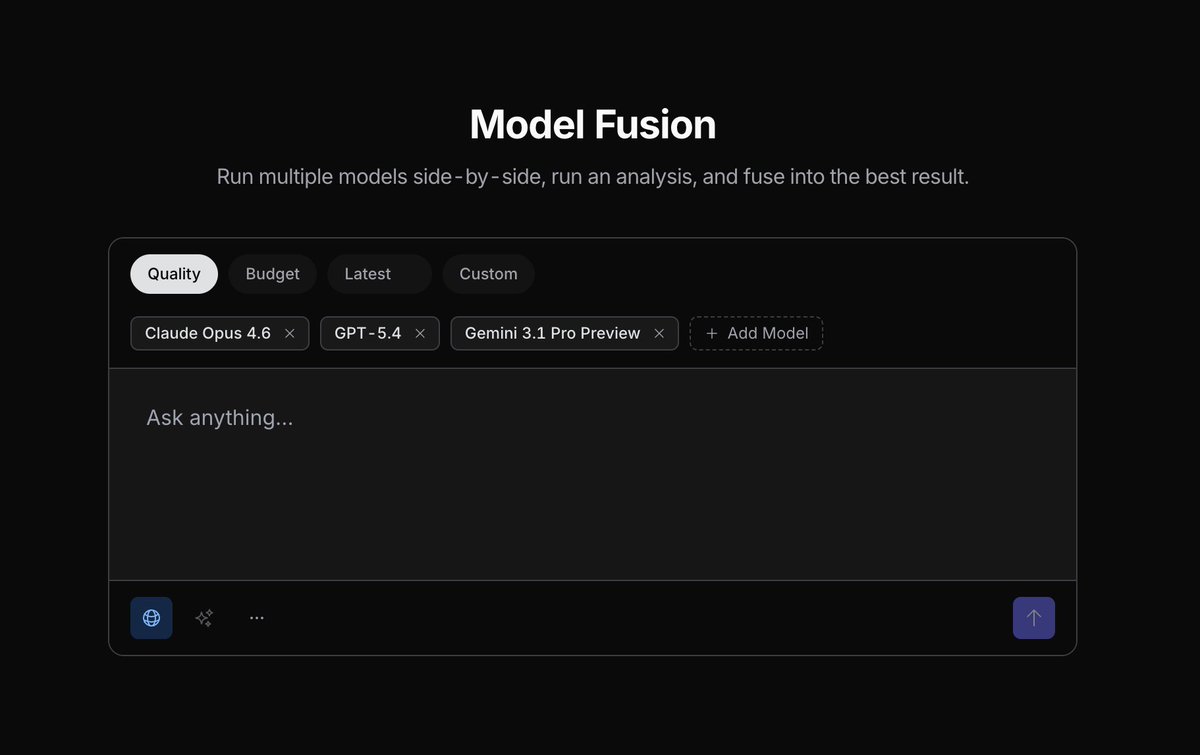

OpenRouter has launched Model Fusion, a public experiment that compares multiple model outputs on configurable axes before a final judge selects or fuses the answer. The feature turns multi-model routing and LLM-as-judge into a reusable response pipeline instead of a manual comparison step.

Model Fusion packages a workflow many engineers already do by hand: prompt several frontier models, inspect the differences, then merge or choose the best answer. OpenRouter describes it as “use multiple models, analyze outputs, and fuse the results,” and says in its launch post that the experiment is public, with “no subscription needed at all.”

The technical change is the addition of a judge pipeline around those model calls. According to OpenRouter's analysis thread, a “pre-fuse judging step” compares outputs from different LLMs on configurable axes, and OpenRouter's follow-up post says those results are then “fed to a final judge, which you can customize.” That makes the product less like simple model routing and more like an exposed orchestration pattern: candidate generation, rubric-based analysis, then final ranking or fusion through the public lab interface.
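The three stages named above (candidate generation, rubric-based analysis, final judging) can be sketched in a few lines of Python. This is an illustration of the pattern only, not OpenRouter's implementation: every name here (`Candidate`, `generate_candidates`, `pre_fuse_judge`, `final_judge`, `run_pipeline`) is hypothetical, the "models" are stub functions rather than real API calls, and the judges are simple numeric scorers where the real product presumably uses LLMs. The final judge in this sketch only selects a winner; it does not fuse answers.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Assumption: a "model" is any callable mapping a prompt to an answer string.
Model = Callable[[str], str]
# Assumption: an axis scorer maps (prompt, answer) to a numeric score.
AxisScorer = Callable[[str, str], float]


@dataclass
class Candidate:
    model_name: str
    answer: str
    scores: Dict[str, float] = field(default_factory=dict)


def generate_candidates(prompt: str, models: Dict[str, Model]) -> List[Candidate]:
    """Stage 1: ask every model the same prompt."""
    return [Candidate(name, model(prompt)) for name, model in models.items()]


def pre_fuse_judge(prompt: str, candidates: List[Candidate],
                   axes: Dict[str, AxisScorer]) -> None:
    """Stage 2: score each candidate on every configured axis."""
    for cand in candidates:
        cand.scores = {axis: scorer(prompt, cand.answer)
                       for axis, scorer in axes.items()}


def final_judge(candidates: List[Candidate],
                weights: Dict[str, float]) -> Candidate:
    """Stage 3: pick the candidate with the highest weighted score."""
    def total(cand: Candidate) -> float:
        return sum(weights.get(axis, 0.0) * score
                   for axis, score in cand.scores.items())
    return max(candidates, key=total)


def run_pipeline(prompt: str, models: Dict[str, Model],
                 axes: Dict[str, AxisScorer],
                 weights: Dict[str, float]) -> Candidate:
    candidates = generate_candidates(prompt, models)
    pre_fuse_judge(prompt, candidates, axes)
    return final_judge(candidates, weights)


# Stub models and toy axes, purely for demonstration.
models: Dict[str, Model] = {
    "model_a": lambda prompt: "Paris.",
    "model_b": lambda prompt: "The capital of France is Paris, on the Seine.",
}
axes: Dict[str, AxisScorer] = {
    # Counts how many expected keywords appear in the answer.
    "coverage": lambda prompt, answer: float(
        sum(word in answer.lower() for word in ("paris", "france", "seine"))),
    # Rewards shorter answers.
    "brevity": lambda prompt, answer: 1.0 / (1 + len(answer.split())),
}
weights = {"coverage": 1.0, "brevity": 0.5}

winner = run_pipeline("What is the capital of France?", models, axes, weights)
print(winner.model_name, winner.scores)
```

Changing `weights` is the stand-in for customizing the final judge: with coverage weighted heavily the longer answer wins, while weighting only brevity flips the choice to the shorter one.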

A supporting reaction sharpened the use case rather than broadening it. In a repost highlighted by OpenRouter, Alex Atallah summarized the premise as “LLM neurodiversity really works for deep research tasks.” Another practitioner said they had been doing this manually “between opus and gpt for weeks,” which frames the launch as automation of an existing evaluation habit rather than a brand-new prompting trick.

New public experiment: Model Fusion. Use multiple models, analyze outputs, and fuse the results for a response that every Deep Research agent preferred to its own, in our testing. No subscription needed at all.

These results are fed to a final judge, which you can customize. Try it out and give us feedback! openrouter.ai/labs/fusion