LangChain releases Deep Agents v0.4.11 as an MIT harness for Claude Code-style workflows

LangChain open-sourced Deep Agents v0.4.11, an MIT agent harness with planning, files, shell access, sub-agents, and auto-summarization. Study it if you want a readable template for building Claude Code-style tools on your own model stack.

TL;DR

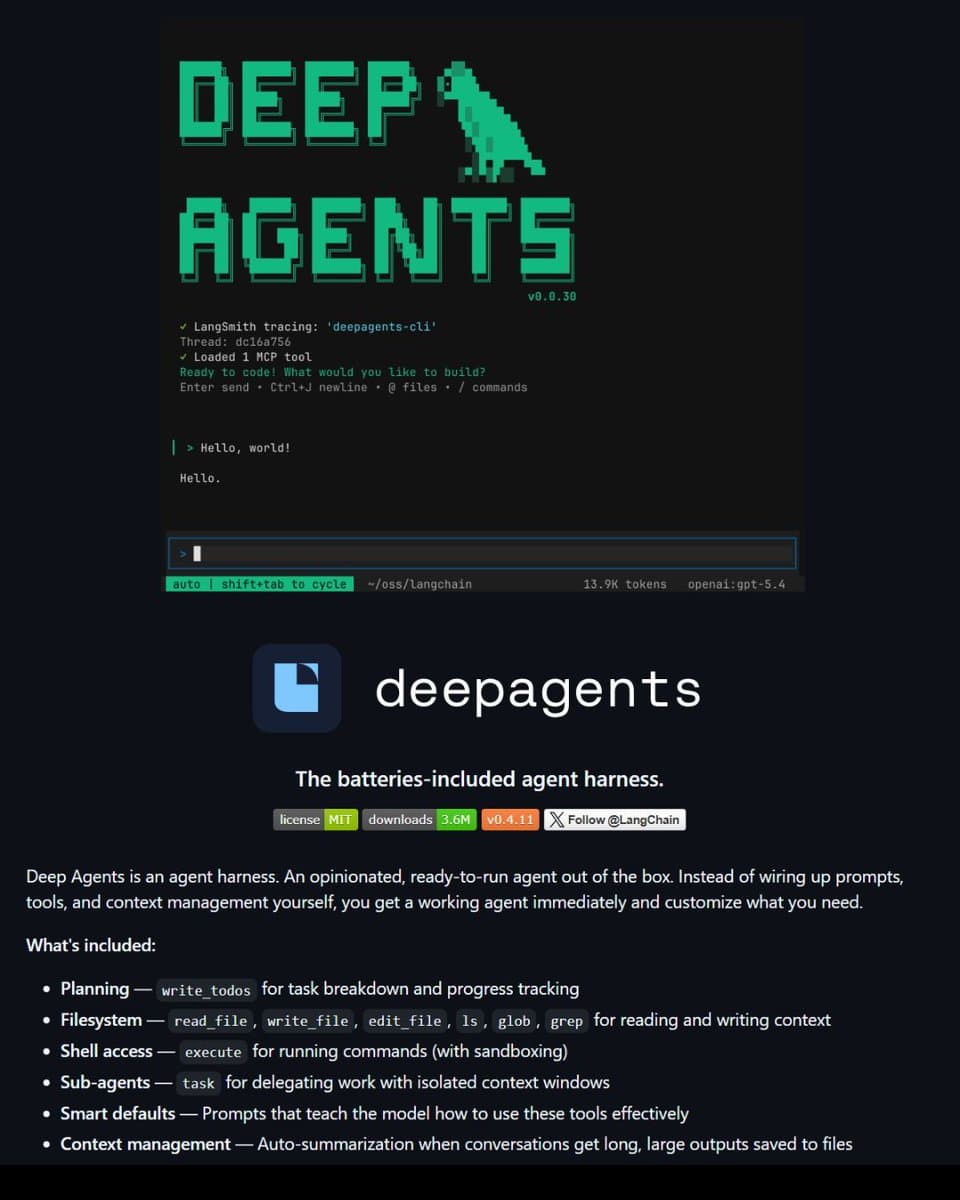

- LangChain has open-sourced Deep Agents as an MIT-licensed harness for Claude Code-style coding workflows, according to LangChain's thread.

- The release bundles the core agent pieces in one stack: planning, file reads and edits, sandboxed shell commands, sub-agents, and auto-summarization when context gets long, as outlined in the launch post.

- Deep Agents is positioned as model-agnostic: the repo describes a setup you can inspect, run with different models, and adapt rather than treat as a closed product.

- For creative coders building bespoke tools, the useful part is less the “Claude Code clone” branding than the readable reference architecture shown in the feature breakdown.

What shipped

Deep Agents v0.4.11 is a batteries-included agent harness rather than a single demo script. In LangChain's post, the stack is described as including task planning, filesystem access for reading and writing code, sandboxed shell execution, delegated sub-agents, and automatic summarization when conversations run long. The repo card frames that as an opinionated agent you can run first and customize later.
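To make the "automatic summarization when conversations run long" idea concrete, here is a minimal sketch of one common approach: once the history exceeds a budget, older messages are collapsed into a single summary message and the most recent turns are kept verbatim. This is an illustration only, not Deep Agents' actual implementation. `Message`, `compact_history`, and `naive_summarize` are hypothetical names, and the character-count budget stands in for a real token count.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Message:
    role: str
    content: str


def naive_summarize(messages: List[Message]) -> str:
    # Hypothetical stand-in for a model call: keep the first 20 chars of each message.
    return " | ".join(m.content[:20] for m in messages)


def compact_history(
    messages: List[Message],
    max_chars: int,
    keep_recent: int,
    summarize: Callable[[List[Message]], str] = naive_summarize,
) -> List[Message]:
    """Collapse older messages into one summary when the history is too long."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    total = sum(len(m.content) for m in messages)
    # Nothing to do if we are under budget or there is nothing older to fold away.
    if total <= max_chars or len(messages) <= keep_recent:
        return list(messages)
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    summary = Message("system", "Summary of earlier conversation: " + summarize(older))
    return [summary] + recent


history = [Message("user", f"step {i}: " + "x" * 40) for i in range(6)]
compacted = compact_history(history, max_chars=100, keep_recent=2)
print(len(history), "->", len(compacted))
```

In a real harness the `summarize` callable would be a model call, and the budget would be measured in tokens against the model's context window.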

The Deep Agents repo adds the practical angle: this is meant to be inspectable and modifiable, with the usual production-minded plumbing around agent workflows instead of a black-box interface.

Why creators may care

For designers, filmmakers, and musicians who build their own utilities, Deep Agents is useful as a template for multimodal-adjacent production tooling even though the release itself is code-first. The combination of planning, file operations, shell access, and isolated sub-agents maps cleanly to workflows like asset generation pipelines, batch file cleanup, render orchestration, or prompt-to-output automation.
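As a rough illustration of how planning plus file operations could map onto something like batch file cleanup, the sketch below runs a fixed plan of (tool, argument) steps against a small tool registry inside a temporary directory. It does not use the Deep Agents API. `TOOLS`, `run_plan`, and `demo` are hypothetical names, and in a real harness a model would produce the plan rather than a hard-coded list.

```python
import tempfile
from pathlib import Path
from typing import Callable, Dict, List, Tuple


def list_files(root: Path, pattern: str) -> str:
    # Return matching file names as a sorted, comma-separated string.
    return ",".join(sorted(p.name for p in root.glob(pattern)))


def delete_files(root: Path, pattern: str) -> str:
    # Materialize the matches first so we do not delete while iterating.
    matches = list(root.glob(pattern))
    for path in matches:
        path.unlink()
    return f"removed {len(matches)}"


# Hypothetical tool registry: tool name -> function(root, argument).
TOOLS: Dict[str, Callable[[Path, str], str]] = {
    "list": list_files,
    "delete": delete_files,
}


def run_plan(root: Path, plan: List[Tuple[str, str]]) -> List[str]:
    """Execute each planned step in order and collect the tool outputs."""
    return [TOOLS[tool_name](root, arg) for tool_name, arg in plan]


def demo() -> List[str]:
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        for name in ("take1.tmp", "take2.tmp", "final.wav"):
            (root / name).write_text("x")
        plan = [("list", "*.tmp"), ("delete", "*.tmp"), ("list", "*")]
        return run_plan(root, plan)


print(demo())
```

Keeping every tool behind the same `(root, argument)` shape is one way to make the plan easy to inspect and replay before letting it touch real project folders.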

The more important detail in the thread is that the harness is model-agnostic. That makes it easier to study the workflow separately from any one frontier model and adapt the same structure to a custom stack, which is the real creative takeaway here.
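One way to picture what "model-agnostic" means in practice is a harness loop that depends only on a small interface, so any backend that satisfies it can be dropped in. The sketch below assumes a hypothetical `ChatModel` protocol with a single `complete` method. It is not the interface Deep Agents uses, and the two stub models are placeholders for real providers.

```python
from typing import List, Protocol


class ChatModel(Protocol):
    """Hypothetical minimal interface the harness relies on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    # Stub backend: returns the prompt with a prefix.
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    # Second stub backend with different behavior.
    def complete(self, prompt: str) -> str:
        return prompt.upper()


def run_steps(model: ChatModel, steps: List[str]) -> List[str]:
    """Harness logic stays the same regardless of which model is plugged in."""
    return [model.complete(step) for step in steps]


steps = ["outline the task", "draft the script"]
print(run_steps(EchoModel(), steps))
print(run_steps(UpperModel(), steps))
```

Because `run_steps` never names a specific provider, swapping models is a one-argument change, which is the property that lets the workflow be studied separately from any one model.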