Claude Code users report a 5-minute cache TTL and Pro Max 5x quota burn in 1.5 hours

Anthropic acknowledged a March 6 cache optimization change, and Pro Max users report that the shorter TTL plus hidden session context now burns through Claude Code quota much faster. Watch for 500 errors and stalled streams, and apply the 2.1.105 patch if your UI hangs.

TL;DR

- A fast-rising GitHub bug report says one Pro Max 5x user burned through a reset window in 1.5 hours of mostly Q&A, and GitHub issue #46829 separately traces a prompt-cache TTL shift from 1 hour to 5 minutes in early March.

- According to the main HN discussion, installed skills and MCPs can quietly add hidden context on every turn, while fresh follow-up comments tie that overhead to the shorter cache window.

- ClaudeCodeLog's release thread and the official v2.1.105 release notes show Anthropic shipped fixes for stalled API streams, MCP startup races, marketplace/plugin breakage, and several TUI regressions on April 13.

- A separate high-signal regression report claims Claude Code quality dropped after February changes, backed by 6,852 session logs, while a Sunday outage report showed 500 errors and a follow-up post said the service came back minutes later.

- Anthropic engineer trq212 also pointed users to a new opt-in renderer, and the Fullscreen rendering docs describe a flicker-free alternate screen mode enabled with CLAUDE_CODE_NO_FLICKER=1.

You can read the quota bug, the cache TTL regression thread, the v2.1.105 release notes, and the fullscreen renderer docs. The weird bit is how neatly the complaints line up: shorter cache lifetime, more hidden context from skills and MCPs, and a patch release that spends a lot of time fixing hangs, rendering glitches, and dropped tool state.

Cache TTL

[BUG] Pro Max 5x Quota Exhausted in 1.5 Hours Despite Moderate Usage · Issue #45756 · anthropics/claude-code

730 upvotes · 644 comments

The quota story snapped into focus once users compared logs across March. In GitHub issue #46829, Sean Swanson says Claude Code moved from a 1 hour prompt-cache TTL to 5 minutes around early March, which would force long sessions to re-send far more context.
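To see why the TTL matters for long sessions, here is a minimal sketch that counts how many turns would find the prompt cache already expired. The idle gaps are invented for illustration, and the model assumes every cache hit refreshes the TTL, so only the gap since the previous turn matters. It is a toy model, not a reconstruction of Claude Code's internals.

```python
def count_cache_misses(gaps_seconds, ttl_seconds):
    """Count follow-up turns that arrive after the cache has expired.

    gaps_seconds[i] is the idle time before follow-up turn i. The first
    turn of the session always writes the cache and is not counted.
    Assumption: each hit (or rewrite) restarts the TTL clock.
    """
    return sum(1 for gap in gaps_seconds if gap > ttl_seconds)


# Hypothetical idle gaps between turns, in seconds.
gaps = [60, 420, 90, 1800, 240, 600]

misses_5m = count_cache_misses(gaps, 5 * 60)   # 5-minute TTL
misses_1h = count_cache_misses(gaps, 60 * 60)  # 1-hour TTL
print(misses_5m, misses_1h)
```

With these made-up gaps, half of the follow-up turns miss under a 5-minute TTL and none miss under a 1-hour TTL, which is the pattern the issue describes for sessions with pauses for reading or thinking.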

That is not just forum archaeology. In The Register's reporting, Anthropic said it reduced the TTL from one hour to five minutes for many requests last month, but argued that the change should not have increased costs. Users in the GitHub and HN threads plainly think otherwise.

The headline complaint in issue #45756 is brutal: 1.5 hours to exhaust a Pro Max 5x reset window under moderate use, with the reporter estimating 8.7 million effective tokens per hour. The same report suspects cache_read tokens may be counted at full rate instead of the usual discounted rate.
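The suspicion about cache_read accounting is easy to illustrate with arithmetic. The sketch below compares an hour of usage with cache reads weighted at a discount versus at full rate. The 0.1 weight mirrors the cache-read multiplier in Anthropic's published API pricing; whether subscription quota uses the same weighting is exactly what the report questions, so treat both the weights and the token counts as assumptions.

```python
def effective_tokens(uncached_tokens, cache_read_tokens, cache_read_weight):
    """Weighted token total for one accounting window.

    cache_read_weight is an assumption: 0.1 models a discounted cache read,
    1.0 models cache reads being counted at full rate.
    """
    return round(uncached_tokens + cache_read_weight * cache_read_tokens)


# Hypothetical hour of usage: mostly cache reads, little fresh input.
uncached = 500_000
cache_reads = 8_000_000

discounted = effective_tokens(uncached, cache_reads, cache_read_weight=0.1)
full_rate = effective_tokens(uncached, cache_reads, cache_read_weight=1.0)
print(discounted, full_rate)
```

Under these invented numbers the same traffic counts as 1.3 million tokens when reads are discounted and 8.5 million when they are not, which shows how a change in weighting alone could move a user from comfortable to exhausted.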

Hidden context

The most useful explanation from practitioners is not just cache TTL. It is cache TTL plus session bloat.

According to the HN thread summary, commenters repeatedly pointed to installed skills, MCP servers, and other hidden session context as token overhead that users do not see in the main prompt. The fresh follow-up makes the same point more bluntly: the 5 minute TTL compounds the cost of every extra bit of background context because idle time stops being cheap.
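The compounding effect can be sketched as a small cost model: every turn re-sends the same hidden prefix, which is cheap on a cache hit and expensive on a miss. The weights are illustrative (loosely modeled on published API cache multipliers), and the prefix sizes and idle gaps are hypothetical; nothing here is measured from Claude Code.

```python
def session_prefix_cost(prefix_tokens, gaps_seconds, ttl_seconds,
                        read_weight=0.1, write_weight=1.25):
    """Weighted cost of re-sending a fixed hidden prefix on every turn.

    Assumptions: the first turn writes the cache, a turn after a gap longer
    than the TTL rewrites it, and any other turn reads it at a discount.
    """
    cost = write_weight * prefix_tokens  # first turn always writes
    for gap in gaps_seconds:
        weight = write_weight if gap > ttl_seconds else read_weight
        cost += weight * prefix_tokens
    return round(cost)


gaps = [120, 480, 200, 900]  # hypothetical idle gaps, in seconds
lean, heavy = 5_000, 40_000  # hypothetical hidden-context sizes, in tokens

lean_5m = session_prefix_cost(lean, gaps, ttl_seconds=300)
lean_1h = session_prefix_cost(lean, gaps, ttl_seconds=3600)
heavy_5m = session_prefix_cost(heavy, gaps, ttl_seconds=300)
heavy_1h = session_prefix_cost(heavy, gaps, ttl_seconds=3600)
print(lean_5m - lean_1h, heavy_5m - heavy_1h)
```

In this toy model the penalty for the shorter TTL scales linearly with the hidden prefix, so a setup carrying eight times the background context pays eight times the penalty for the same pauses.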

That framing also explains why complaints sound inconsistent across users. Someone running a minimal setup can have a very different burn profile from someone whose Claude Code instance is carrying a pile of skills, monitors, MCP tools, and long-lived repo context.

2.1.105 patch

Anthropic's April 13 patch is Christmas come early for terminal-tooling nerds. The official v2.1.105 release notes are mostly a cleanup sprint, but the fixes land right on the pain users were posting about all weekend.

The release's most relevant changes:

- stalled API streams now abort after 5 minutes of no data and retry non-streaming instead of hanging indefinitely

- EnterWorktree gets a path parameter for existing worktrees

- PreCompact hooks can now block compaction

- plugin manifests can declare background monitors that arm automatically

- WebFetch now strips <style> and <script> so CSS-heavy pages do not blow the content budget

- MCP sessions fail fast on malformed stdio output, and MCP tools are less likely to be missing on the first turn of headless sessions

- /proactive is now an alias for /loop
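The first fix in the list, aborting a stalled stream and retrying without streaming, follows a common watchdog pattern. The sketch below shows one generic way to build it; `StreamStalled`, `read_with_idle_timeout`, and `fetch_with_fallback` are hypothetical names, and this is not Claude Code's implementation. The demo uses a 0.05 second timeout in place of the 5 minutes the release notes mention.

```python
import queue
import threading
import time


class StreamStalled(Exception):
    """Raised when no chunk arrives within the idle timeout."""


def read_with_idle_timeout(chunks, idle_timeout):
    """Yield chunks from an iterable, raising StreamStalled on long silence."""
    q = queue.Queue()
    done = object()  # sentinel marking the end of the stream

    def pump():
        try:
            for chunk in chunks:
                q.put(chunk)
        finally:
            q.put(done)

    # Daemon thread so a permanently stalled source cannot block exit.
    threading.Thread(target=pump, daemon=True).start()
    while True:
        try:
            item = q.get(timeout=idle_timeout)
        except queue.Empty:
            raise StreamStalled(f"no data for {idle_timeout}s") from None
        if item is done:
            return
        yield item


def fetch_with_fallback(stream_fn, fallback_fn, idle_timeout=300.0):
    """Try streaming first; on a stall, discard partial output and retry."""
    parts = []
    try:
        for chunk in read_with_idle_timeout(stream_fn(), idle_timeout):
            parts.append(chunk)
    except StreamStalled:
        return fallback_fn()
    return "".join(parts)


def healthy_stream():
    yield "hello "
    yield "world"


def stalled_stream():
    yield "partial "
    time.sleep(0.5)  # simulate a connection that goes silent
    yield "never used"


ok = fetch_with_fallback(healthy_stream, lambda: "fallback", idle_timeout=0.05)
recovered = fetch_with_fallback(stalled_stream, lambda: "full response",
                                idle_timeout=0.05)
print(ok, "|", recovered)
```

The timeout here is measured between chunks rather than over the whole request, so a slow but steady stream is never cut off; only silence triggers the fallback.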

ClaudeCodeLog's deeper diff thread also spotted new config and env surface around monitors, memories, memory_paths, away summaries, and session resume, plus stricter system-prompt routing for config changes and subagent verification.

Regression reports and outages

[MODEL] Claude Code is unusable for complex engineering tasks with the Feb updates

1.4k upvotes · 754 comments

The quota blowup landed on top of a much broader reliability argument. In GitHub issue #42796, a user claimed Claude Code had become unusable for complex engineering after February updates, and backed that with an analysis of 6,852 session logs, 17,871 thinking blocks, and 234,760 tool calls. The report says reasoning depth fell by roughly two thirds and the tool shifted from research-first to edit-first behavior.

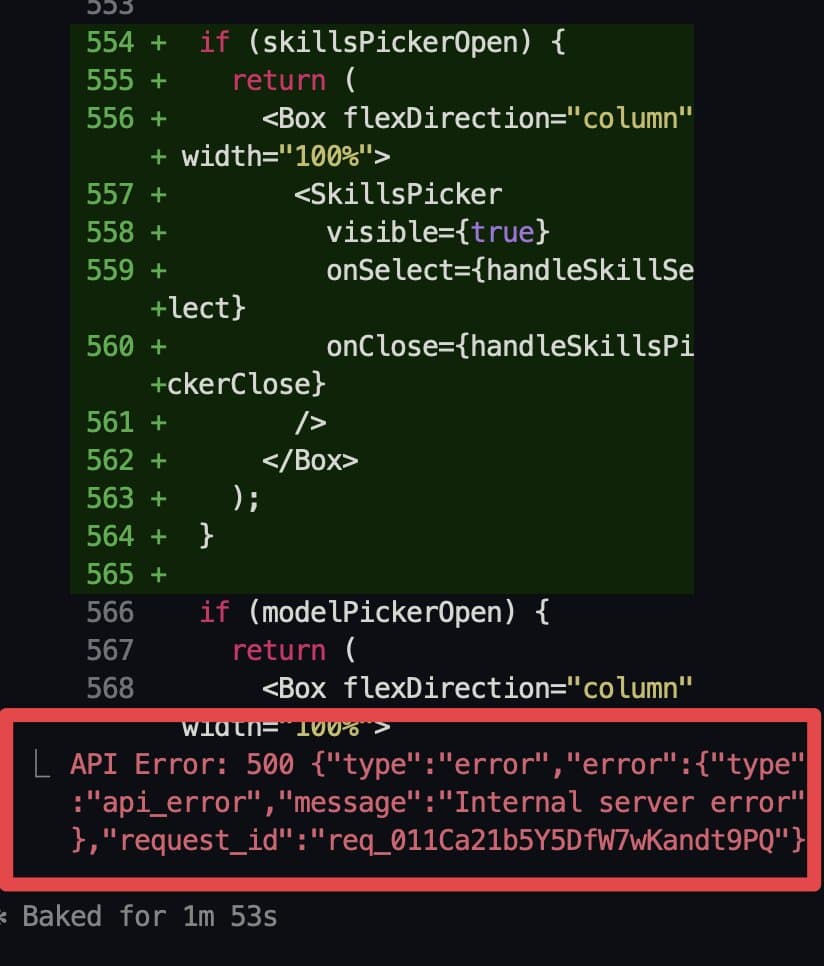

Sunday's service wobble made that backdrop feel even worse. bridgemindai's post showed a raw 500 internal server error in Claude Code, then a follow-up six minutes later said the service was back. Separate reactions like skeptrune's daily-limit screenshot and zeeg's rendering complaint show how quickly UI bugs, outages, and quota burn are being folded into the same user narrative.

Fullscreen renderer

The last useful thread here is the renderer work. trq212's post points users to CLAUDE_CODE_NO_FLICKER=1, and the Fullscreen rendering docs describe it as an opt-in research preview for Claude Code v2.1.89 and later.

The implementation is concrete: Claude Code draws to the terminal's alternate screen buffer, keeps only visible messages in the render tree, adds mouse support, and keeps memory usage flat even in long conversations. That pairs neatly with the 2.1.105 fix list, which includes blank-screen, wrapped-input, whitespace, and small-terminal regressions. Anthropic is not only patching quota complaints; it is also still actively rebuilding the terminal itself.
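As a rough illustration of the two ideas in that description, the sketch below switches to the alternate screen buffer with the standard xterm escape sequences and renders only the slice of messages that fits the viewport. The function names are hypothetical, and it assumes one message per terminal row, which is a simplification of any real renderer.

```python
import io

# Standard xterm private-mode sequences for the alternate screen buffer.
ENTER_ALT_SCREEN = "\x1b[?1049h"
LEAVE_ALT_SCREEN = "\x1b[?1049l"
CLEAR_AND_HOME = "\x1b[2J\x1b[H"


def visible_messages(messages, viewport_rows, scroll_offset=0):
    """Return only the messages that fit the viewport.

    scroll_offset counts how many messages the user has scrolled up from
    the bottom. Assumes each message occupies exactly one row.
    """
    end = max(len(messages) - scroll_offset, 0)
    start = max(end - viewport_rows, 0)
    return messages[start:end]


def render_frame(messages, viewport_rows, out, scroll_offset=0):
    """Draw one frame: clear the screen, then write the visible rows."""
    out.write(CLEAR_AND_HOME)
    for line in visible_messages(messages, viewport_rows, scroll_offset):
        out.write(line + "\n")


history = [f"message {i}" for i in range(100)]
bottom = visible_messages(history, viewport_rows=5)
scrolled = visible_messages(history, viewport_rows=5, scroll_offset=10)

# Render into a buffer here; a real program would write to sys.stdout
# between ENTER_ALT_SCREEN and LEAVE_ALT_SCREEN.
frame = io.StringIO()
render_frame(history, 5, frame)
print(bottom[0], scrolled[0])
```

Because each frame touches only the rows on screen, the work per redraw depends on the viewport height rather than the length of the conversation, which is the property the docs highlight for long sessions.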