Anthropic limits Claude 5-hour sessions as users report 529 overloads

Anthropic confirmed new peak-time metering that burns through 5-hour Claude sessions faster, and multiple power users reported 529 overloaded errors and early session exhaustion. If you rely on Max plans for coding, watch your session limits and consider moving daily work to Codex.

TL;DR

- Anthropic has confirmed a peak-time metering change: posts citing the company say Free, Pro, and Max usage now burns faster during weekday peak windows, so the same 5-hour session can exhaust much sooner (metering change; peak-hours post).

- Power users are pairing that change with reliability complaints. One Claude Code user posted repeated "529 overloaded" failures mid-task at 7am and said outages had been "all day" the prior day (529 error report).

- The strongest practitioner reports say the two issues compound: the same Max subscriber who hit overloads also said Anthropic "lowered [rate limits] dramatically out of nowhere" and canceled after exhausting a 5-hour limit in under an hour (cancellation post).

- Community analysis suggests the pain is uneven. A Reddit power user said the worst impact lands in the weekday 5am-11am PT window and that long sessions, MCP overhead, and large accumulated context can accelerate burn even further (Reddit analysis).

What changed in Claude's metering?

Anthropic appears to have changed how Claude usage is charged during weekday peak windows rather than simply lowering a flat quota. Wes Roth wrote that Anthropic had "confirmed" new metering for Free, Pro, and Max, with users burning through their 5-hour session limits "significantly faster during peak times" (metering change). A separate post gave the window as weekdays from 5am-11am PT / 1pm-7pm GMT (peak-hours post).
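To make the reported window concrete, here is a minimal sketch that checks whether a given moment falls inside the weekday 5am-11am PT range cited above. The window boundaries come from the posts, not from Anthropic documentation, and the function and constant names are hypothetical. Whether the end of the window is inclusive is not stated, so the sketch assumes it is exclusive.

```python
from datetime import datetime, time, timezone
from zoneinfo import ZoneInfo

# Assumption: "PT" means US Pacific time as observed in Los Angeles.
PACIFIC = ZoneInfo("America/Los_Angeles")

# Window as reported in the posts (not an official specification).
PEAK_START = time(5, 0)
PEAK_END = time(11, 0)


def in_reported_peak_window(moment: datetime) -> bool:
    """Return True if a timezone-aware moment is in the reported peak window."""
    if moment.tzinfo is None:
        raise ValueError("moment must be timezone-aware")
    local = moment.astimezone(PACIFIC)
    is_weekday = local.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and PEAK_START <= local.time() < PEAK_END


def now_in_reported_peak_window() -> bool:
    """Convenience wrapper around the current time."""
    return in_reported_peak_window(datetime.now(timezone.utc))
```

A scheduler could call `now_in_reported_peak_window()` before launching a long agent run and defer heavy jobs until the window closes.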

That matters operationally because the visible limit presented to users is still a 5-hour bucket, but the effective work you can do inside that bucket changes with time of day. The Reddit writeup from a Max subscriber says the problem is highly timezone-dependent: someone working a PST 9-to-5 schedule is inside "the absolute worst window," while that author's late-night EST workflow avoided most of it (Reddit analysis). The same post adds a second implementation detail engineers will recognize from agentic coding sessions: by turn 30, a "simple" prompt can become expensive once conversation history, system prompt, tool definitions, loaded files, and extended thinking tokens accumulate (Reddit analysis).
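The accumulation effect can be illustrated with a rough back-of-the-envelope model. This is a sketch under stated assumptions, not a description of how Anthropic meters usage: it assumes every turn resends a fixed overhead (system prompt, tool definitions, loaded files) plus all prior history, ignores prompt caching, and uses made-up token counts.

```python
def input_tokens_at_turn(turn: int, fixed_overhead: int, tokens_per_turn: int) -> int:
    """Input tokens sent on a 1-indexed turn: fixed overhead plus prior history."""
    return fixed_overhead + (turn - 1) * tokens_per_turn


def cumulative_input_tokens(turns: int, fixed_overhead: int, tokens_per_turn: int) -> int:
    """Total input tokens sent across the first `turns` turns."""
    return sum(
        input_tokens_at_turn(t, fixed_overhead, tokens_per_turn)
        for t in range(1, turns + 1)
    )


# Hypothetical numbers chosen only for illustration.
FIXED_OVERHEAD = 10_000   # system prompt + tool definitions + loaded files
TOKENS_PER_TURN = 2_000   # history added by each completed turn

print(input_tokens_at_turn(1, FIXED_OVERHEAD, TOKENS_PER_TURN))      # 10000
print(input_tokens_at_turn(30, FIXED_OVERHEAD, TOKENS_PER_TURN))     # 68000
print(cumulative_input_tokens(30, FIXED_OVERHEAD, TOKENS_PER_TURN))  # 1170000
```

Under these assumed numbers, the 30th turn sends almost seven times as many input tokens as the first, and cumulative input grows roughly quadratically with thread length. That is consistent with the post's advice that long-lived threads are the expensive ones.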

What failures are users seeing in Claude Code?

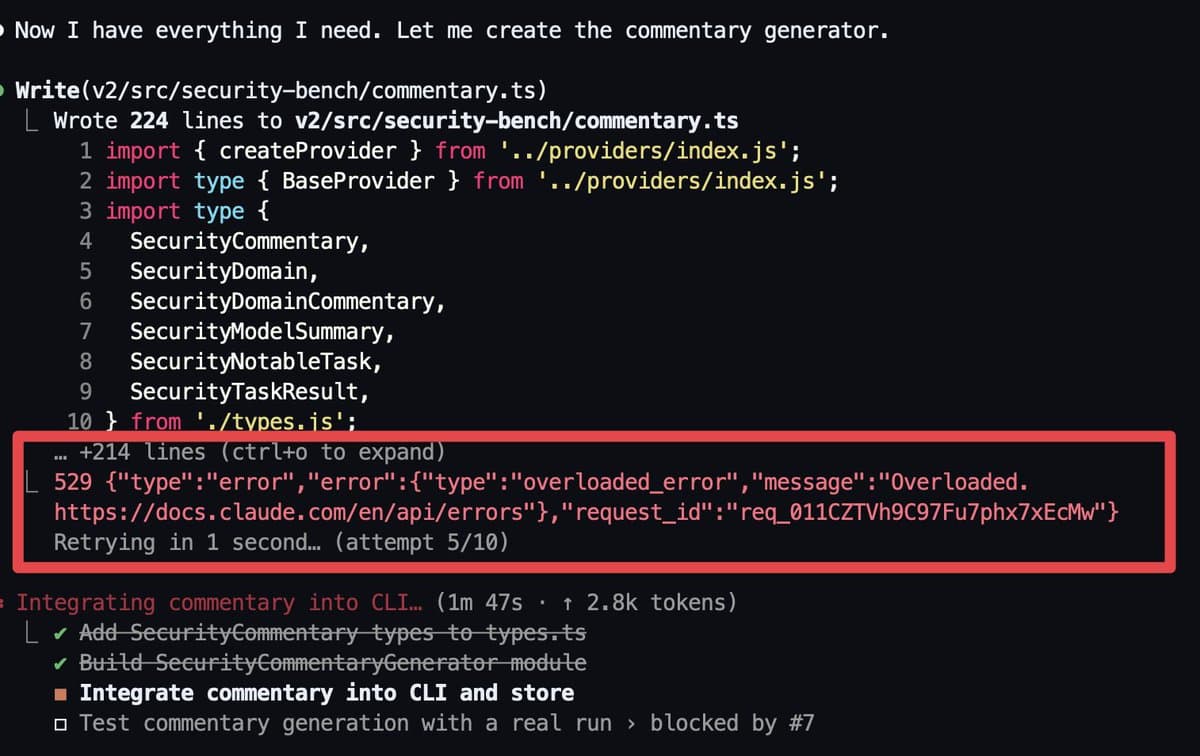

The clearest failure mode in the evidence is backend overload during active coding runs. One Max-plan user posted a Claude Code terminal throwing an overloaded_error with retry "attempt 5/10" while the tool was still "integrating commentary into CLI" (529 error report). In the accompanying post, they said this had become a "daily occurrence" and argued they should not be seeing overloads "at 7am."
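For readers scripting their own calls, the retry behavior visible in that screenshot resembles a standard capped exponential backoff. The following is a generic sketch of that pattern, not Claude Code's actual retry logic: `send` is a hypothetical callable returning a `(status, body)` tuple, and the delay parameters are illustrative defaults.

```python
import random
import time
from typing import Callable, Tuple

OVERLOADED_STATUS = 529  # status code reported in the posts for overloaded_error


def call_with_backoff(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 10,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call `send` until it returns a non-529 status or attempts run out.

    `send` is a hypothetical stand-in for whatever performs the request.
    """
    for attempt in range(1, max_attempts + 1):
        status, body = send()
        if status != OVERLOADED_STATUS:
            return status, body
        if attempt < max_attempts:
            # Exponential backoff with full jitter, capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, delay))
    raise RuntimeError(f"still overloaded after {max_attempts} attempts")
```

Jitter spreads retries from many clients over time so they do not all hit an already overloaded backend at the same instant. Backoff only smooths over short overloads, though; it does not help when failures persist for hours, as the user describes.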

That same user then connected availability and metering in a follow-up cancellation post. They said rate limits were "lowered dramatically out of nowhere," that they were seeing "529 overloaded errors daily," and that a 5-hour limit was gone "in under an hour" on the $200 Max plan (cancellation post). This is the strongest evidence in the set because it ties a concrete runtime error to a billing-tier workflow and an observed session budget collapse.

A repost from another power user points in the same direction, claiming two $200 plans were now hitting usage limits "within a few hours" (second power user). That does not prove a platform-wide outage, but it does show multiple high-usage coding customers describing the same class of failure on the same day.

How are developers adapting their workflows?

The immediate workflow change in the evidence is fallback to OpenAI Codex with GPT-5.4, mainly on reliability and usable limits rather than model quality. After canceling Claude Max, the same BridgeMind user said they were switching because Codex had "better rate limits" and was "actually available when I need it" (cancellation post). In a later screenshot, they showed "5 Codex terminals" and only one Claude Code terminal, saying they had been able to run seven Claude agents two weeks earlier but could now "barely keep one running" (workflow screenshot).

A later usage screenshot sharpens that comparison with a measurable delta: after running Codex with GPT-5.4 High "all morning," the user said 87% of their 5-hour limit was still left, contrasting that with Claude Code, where they said they would be at 0% by then (Codex usage). Another user made a lower-cost version of the same calculation, saying Claude Pro plus ChatGPT Plus felt more workable than a single $200 Claude subscription because "limits are much lower for Claude Pro than ChatGPT Plus" (price-limit comparison).

The counterpoint is that not every Max user is hard-stopped. The Reddit post argues that session design now matters much more: long-lived threads, heavy MCP setups, and high-effort reasoning settings can make the same nominal limit disappear faster, especially inside the peak window (Reddit analysis). That does not undercut the overload reports; it suggests engineers are dealing with both supply-side constraints and workload-shape effects at the same time.