CopilotKit adds useAgentContext and useFrontendTool for UI-aware agents

CopilotKit shipped hooks that let agents inspect app state and call frontend actions, then paired them with Shadify for ShadCN-based UI composition. Together they give embedded agents a cleaner path from chat to in-app behavior.

TL;DR

- CopilotKit says its new hooks turn embedded agents from chat-only assistants into app-aware ones: the launch thread introduces

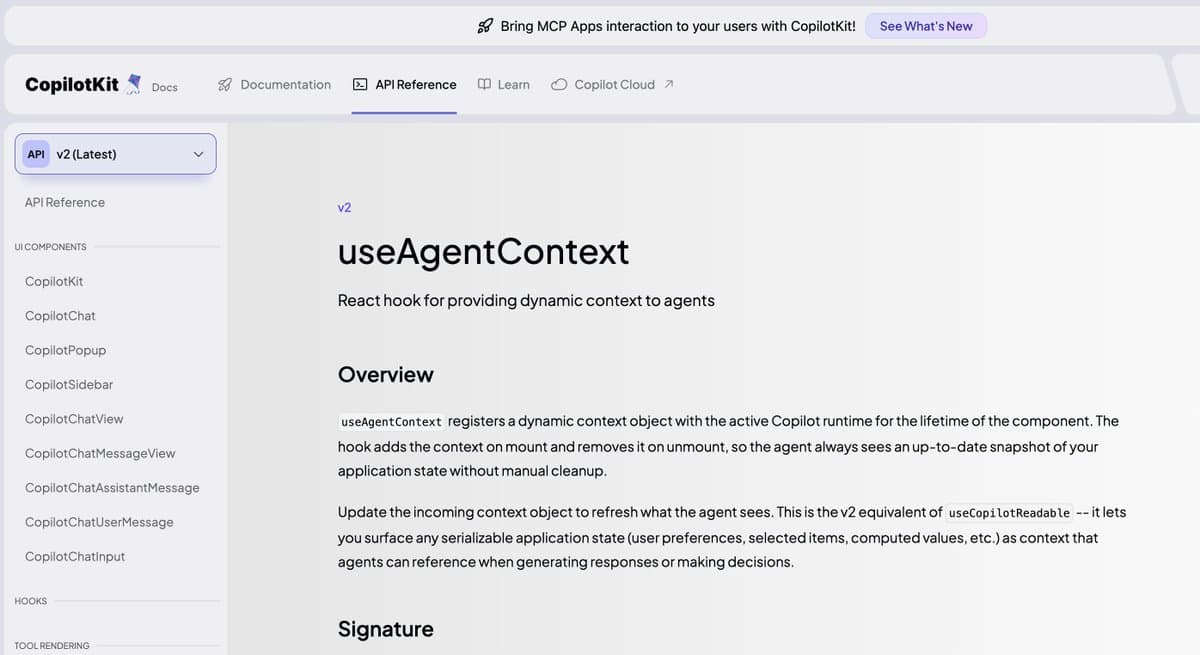

useAgentContextso an agent can "see" UI state anduseFrontendToolso it can "act" inside the app. - The docs links in the follow-up post show both hooks are already documented, which makes this a ship-ready SDK addition rather than a teaser.

- CopilotKit is pairing those hooks with the Shadify post, which pitches "Generative UI built on ShadCN" so a LangChain agent can compose interface components from a text description.

- In practice, the combination points to a cleaner agent pattern: inspect frontend context, trigger frontend actions, then stream UI back from app components, as Mike Ryan's post describes.

What exactly shipped?

CopilotKit's launch thread frames the release around a common limitation in agent SDKs: "Most Agents can only chat" and "can't read your UI or do anything in your app." The two new hooks split that problem in half. useAgentContext, documented in the hook reference, is presented as the visibility layer; useFrontendTool, documented in its reference, is the action layer.

That split matters for implementation because it gives developers a cleaner boundary between observation and invocation. Rather than forcing an agent to infer app state from text or bounce everything through backend tools, CopilotKit is explicitly exposing frontend context and frontend actions as separate primitives, with the docs post claiming both are "simple and ready in minutes." The attached UI-aware agent demo video shows the intended workflow, moving from code to a browser app where UI is assembled interactively.
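To make the observation/invocation boundary concrete, here is a minimal TypeScript sketch of the pattern. It deliberately does not import CopilotKit or reproduce the signatures of useAgentContext or useFrontendTool; every name in it (AgentBridge, shareContext, registerTool, callTool) is hypothetical and exists only to show state being published on one side and actions being registered on the other.

```typescript
// Hypothetical model of the "see" vs "act" split. None of these names are
// CopilotKit APIs; they only illustrate the boundary the article describes.

type ContextEntry = { description: string; read: () => unknown };

type FrontendTool = {
  name: string;
  description: string;
  handler: (args: Record<string, unknown>) => string;
};

class AgentBridge {
  private context = new Map<string, ContextEntry>();
  private tools = new Map<string, FrontendTool>();

  // Observation side: publish a way to read a piece of UI state.
  shareContext(key: string, entry: ContextEntry): void {
    this.context.set(key, entry);
  }

  // Invocation side: register an action the agent is allowed to call.
  registerTool(tool: FrontendTool): void {
    this.tools.set(tool.name, tool);
  }

  // What the agent "sees": current values plus tool names, never the handlers.
  snapshot(): {
    context: { description: string; value: unknown }[];
    toolNames: string[];
  } {
    return {
      context: Array.from(this.context.values()).map((entry) => ({
        description: entry.description,
        value: entry.read(),
      })),
      toolNames: Array.from(this.tools.keys()),
    };
  }

  // What the agent "does": invoke a registered tool by name.
  callTool(name: string, args: Record<string, unknown>): string {
    const tool = this.tools.get(name);
    if (!tool) throw new Error(`Unknown frontend tool: ${name}`);
    return tool.handler(args);
  }
}

// Usage: a toy cart stands in for real app state.
const cart: string[] = ["keyboard"];
const bridge = new AgentBridge();

bridge.shareContext("cart", {
  description: "Items currently in the cart",
  read: () => cart.slice(), // copy, so the snapshot cannot mutate app state
});

bridge.registerTool({
  name: "addToCart",
  description: "Add an item to the cart",
  handler: (args) => {
    const item = String(args.item);
    cart.push(item);
    return `added ${item}`;
  },
});

const result = bridge.callTool("addToCart", { item: "mouse" });
```

In this sketch the agent can change the cart only through addToCart, and reads it only through the snapshot. That is the kind of boundary the two-hook design suggests, though the real hooks may draw it differently.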

How does Shadify fit into the developer workflow?

CopilotKit is not shipping the hooks in isolation. The Shadify announcement describes "Generative UI built on ShadCN" where developers "describe a UI" and let a LangChain agent compose from ShadCN components. That turns the new hooks into part of a broader loop: the agent can inspect the current interface, call frontend-side capabilities, and then generate or update visible UI from an existing component system.
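The "compose from an existing component system" step can be sketched as a lookup against a fixed catalog. The example below is an assumption-heavy illustration, not Shadify's implementation: the UINode shape, the catalog, and stubAgent are all hypothetical, the agent is a hard-coded stub rather than a LangChain call, and components render to plain strings instead of ShadCN React components.

```typescript
// Hypothetical sketch: an agent returns a UI spec, and the frontend renders it
// only from components it already owns. Not Shadify's or CopilotKit's API.

type UINode = {
  component: string;
  props: Record<string, string>;
  children?: UINode[];
};

type Renderer = (props: Record<string, string>, children: string) => string;

// Stand-in for an existing component library. Output is unescaped string
// markup, for illustration only.
const catalog = new Map<string, Renderer>();
catalog.set("Card", (props, children) => `<Card title="${props.title}">${children}</Card>`);
catalog.set("Text", (props) => `<Text>${props.value}</Text>`);
catalog.set("Button", (props) => `<Button>${props.label}</Button>`);

// Render a spec recursively, rejecting anything outside the catalog.
function renderNode(node: UINode): string {
  const render = catalog.get(node.component);
  if (!render) throw new Error(`Component not in catalog: ${node.component}`);
  const children = (node.children || []).map(renderNode).join("");
  return render(node.props, children);
}

// Stub agent: turns a description plus frontend context into a spec.
// A real agent would produce this with a model; here it is hard-coded.
function stubAgent(description: string, context: { user: string }): UINode {
  return {
    component: "Card",
    props: { title: description },
    children: [
      { component: "Text", props: { value: `Hello, ${context.user}` } },
      { component: "Button", props: { label: "Confirm" } },
    ],
  };
}

const spec = stubAgent("Welcome card", { user: "Ada" });
const markup = renderNode(spec);
```

Restricting the agent to catalog entries keeps generated UI inside the app's own design system, which appears to be the appeal of building on ShadCN components rather than letting a model emit arbitrary markup.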

A supporting repost, Ata's demo share, repeats the same core behavior, which suggests Shadify is the showcase implementation for these primitives rather than a separate product line. The extra context from Mike Ryan's post is useful because it describes the outcome more concretely: an agent can "stream back a user interface from your components." For engineers building in-app copilots, that is the technical shift here: CopilotKit is moving from chat orchestration toward UI-aware, component-level agent interactions inside the frontend.