DistCA claims 1.35x long-context training gains with disaggregated core attention

Researchers released DistCA, a training system that offloads stateless core attention to dedicated servers and reports up to 1.35x throughput gains on long-context workloads. Evaluate it for very long-sequence training where attention imbalance strands GPUs and creates pipeline stalls.

TL;DR

- HAO AI Lab introduced DistCA, a long-context training system that treats core attention as a separate service and says the stateless softmax(QKᵀ)V step can be offloaded to dedicated attention servers (DistCA thread).

- The pitch is a systems one: at long sequence lengths, attention grows quadratically while most other work is closer to linear, so equal token splits can still leave some GPUs stuck on much heavier batches and create cluster-wide idle time (imbalance thread).

- In the team’s reported results, DistCA delivers an “almost 2x” speedup over Megatron according to its thread, and up to 1.35x over state-of-the-art methods according to the linked paper summary, with experiments described on 512 H200 GPUs at contexts up to 512K tokens (reported gains).

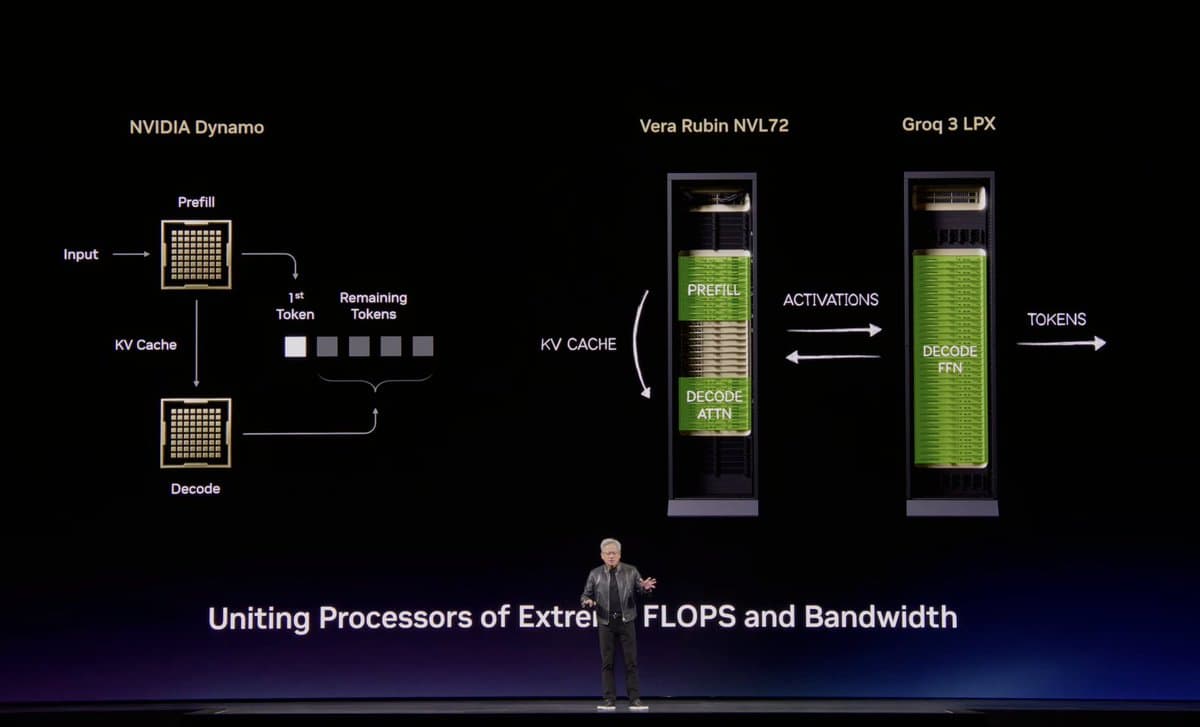

- The broader theme is disaggregation: alongside a separate FlashSampling thread on fused decode-time sampling, DistCA argues that moving expensive substeps out of the standard pipeline can turn a bottlenecked stage into a schedulable systems problem.

What changed in the training stack?

DistCA’s core claim is that long-context training breaks because the expensive part of attention does not scale like the rest of the layer. HAO AI Lab’s imbalance thread says core attention is O(n²) while “everything else is roughly O(n),” so “same tokens per GPU” no longer means same work per GPU when document lengths vary.
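The imbalance is easy to see with a little arithmetic. The sketch below is illustrative only: it assumes attention cost scales with the square of each document's length (attention is computed within a document, not across documents packed together) and that everything else scales with token count. The two GPU batches and their document lengths are hypothetical, not taken from the DistCA experiments.

```python
def attention_cost(doc_lengths):
    """Relative core-attention cost: quadratic in each document's length."""
    return sum(n * n for n in doc_lengths)


def linear_cost(doc_lengths):
    """Relative cost of the roughly linear work: proportional to token count."""
    return sum(doc_lengths)


# Hypothetical batches: both GPUs receive exactly 8192 tokens.
gpu_a = [8192]          # one long document
gpu_b = [1024] * 8      # eight short documents

print("tokens:", linear_cost(gpu_a), linear_cost(gpu_b))
print("attention cost ratio A/B:", attention_cost(gpu_a) / attention_cost(gpu_b))
```

Under these assumptions both GPUs hold the same number of tokens, yet GPU A has eight times the core-attention work of GPU B, so GPU B sits idle while A finishes.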

The system response is Core Attention Disaggregation. In the team’s DistCA thread, the stateless core attention step is pulled out of the layer, split into token-level tasks, and sent to dedicated attention servers. The linked DistCA repo describes this as dynamic rebalancing for the softmax(QKᵀ)V path, with examples and benchmarking scripts for multi-node long-context runs.
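To make the idea of splitting attention into tasks and rebalancing them concrete, here is a minimal sketch. It is not DistCA's scheduler: the block size, the causal-mask cost estimate, the greedy longest-task-first policy, and the function names `attention_tasks` and `assign_greedy` are all assumptions chosen for illustration.

```python
import heapq


def attention_tasks(doc_lengths, block=1024):
    """Split each document into query-block tasks with an estimated cost."""
    tasks = []
    for doc_id, n in enumerate(doc_lengths):
        for start in range(0, n, block):
            end = min(start + block, n)
            # Assumed cost model: queries in [start, end) attend to keys
            # in [0, end) under a causal mask, so cost ~ queries * keys.
            cost = (end - start) * end
            tasks.append((cost, doc_id, start, end))
    return tasks


def assign_greedy(tasks, num_servers):
    """Give each task, largest first, to the currently least-loaded server."""
    heap = [(0, s) for s in range(num_servers)]  # (load, server id)
    heapq.heapify(heap)
    assignment = {s: [] for s in range(num_servers)}
    for task in sorted(tasks, reverse=True):
        load, s = heapq.heappop(heap)
        assignment[s].append(task)
        heapq.heappush(heap, (load + task[0], s))
    loads = [sum(t[0] for t in assignment[s]) for s in range(num_servers)]
    return assignment, loads


# Hypothetical workload: one 8192-token document plus eight 1024-token ones.
docs = [8192] + [1024] * 8
assignment, loads = assign_greedy(attention_tasks(docs), num_servers=2)
print("per-server load:", loads)
```

Because the tasks carry no state beyond their inputs, blocks from the long document can land on either server. In this toy workload the two servers end up with near-equal estimated load, which is the effect the rebalancing is meant to achieve.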

What do the reported gains actually mean?

The reported upside is mostly about reducing stragglers and pipeline bubbles rather than changing the model itself. The paper summary says DistCA uses ping-pong execution to overlap communication with compute and in-place execution on attention servers to cut memory use, aiming to keep utilization balanced as context length rises.
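The benefit of overlapping communication with compute can be approximated with a generic two-buffer timing model. This is a toy model rather than DistCA's actual schedule: it assumes each micro-batch needs one transfer followed by one compute step, that the transfer for the next micro-batch can run while the current one computes, and the step durations are arbitrary units, not measurements.

```python
def serial_time(n, compute, comm):
    """No overlap: every micro-batch waits for its transfer, then computes."""
    return n * (compute + comm)


def overlapped_time(n, compute, comm):
    """Two alternating buffers: the transfer for batch i+1 overlaps the
    compute for batch i, so the steady-state step costs max(compute, comm)."""
    if n == 0:
        return 0
    return comm + (n - 1) * max(compute, comm) + compute


# Hypothetical durations in arbitrary time units.
n, compute, comm = 8, 3, 2
print("serial:", serial_time(n, compute, comm))
print("overlapped:", overlapped_time(n, compute, comm))
```

In this model the transfer cost is hidden whenever compute takes at least as long as communication, which is why overlap matters more as shipping queries, keys, and values to remote servers becomes a larger share of each step.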

The headline number is up to 1.35x higher training throughput over prior methods, with the thread separately claiming “almost 2x speedup compared to Megatron” (reported gains). The paper summary ties those results to a 512-H200 setup at context lengths up to 512K tokens, which makes this most relevant for teams already hitting long-sequence imbalance rather than for general-purpose short-context training. As a systems pattern, it rhymes with the description of FlashSampling in Cedric Chee’s FlashSampling thread: fuse or disaggregate the expensive step so the bandwidth-bound bottleneck stops dominating runtime.