Google introduces Gemini Intelligence on Android with browser use, AppFunctions, and Rambler

Google unveiled Gemini Intelligence at the Android Show with cross-app task automation, Gemini in Chrome, Rambler voice cleanup, custom widgets, and AppFunctions. The rollout moves Gemini into core Android workflows on Pixel and Galaxy devices this summer.

TL;DR

- Google is branding a new Android layer as Gemini Intelligence, with rollout starting on Samsung Galaxy and Google Pixel devices later this summer, according to Google's feature list and Google's launch post.

- The launch centers on five concrete behaviors: cross-app task automation, one-tap form fill via Gemini Personal Intelligence, Rambler voice cleanup, custom generative widgets, and Chrome auto-browse, as testingcatalog's Android Show roundup and Google's thread both summarize.

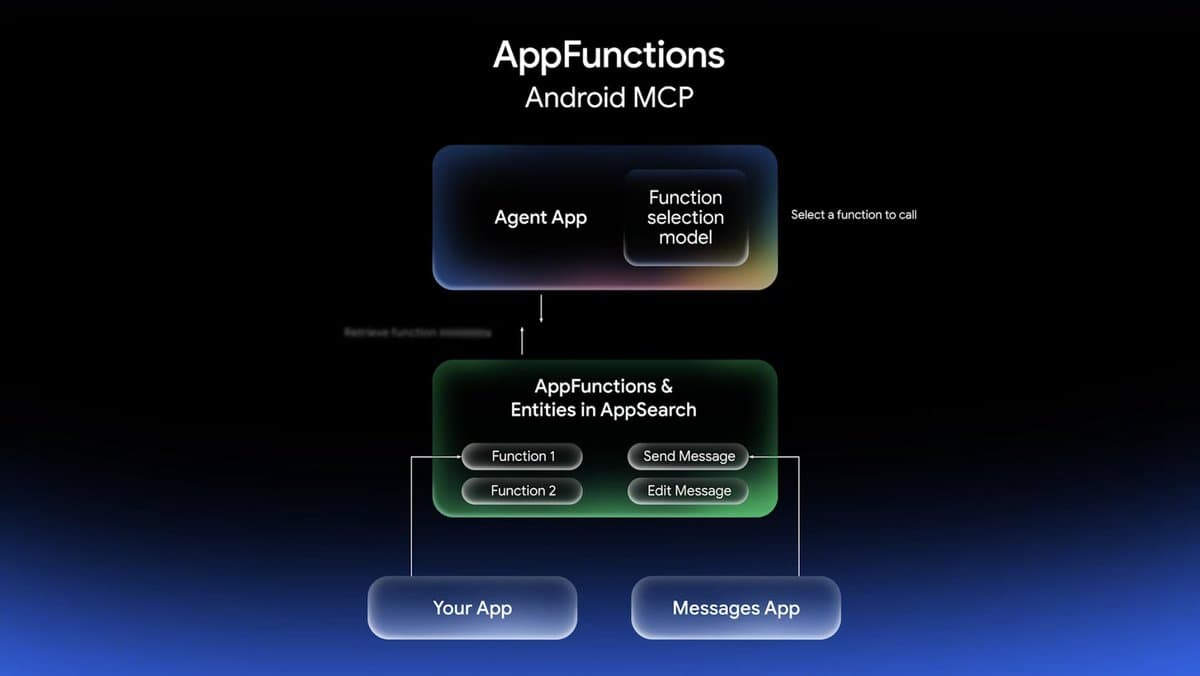

- testingcatalog's AppFunctions post added one developer-facing reveal that the official consumer posts barely mention: AppFunctions, which it described as MCP-like (Model Context Protocol) plumbing for Android apps.

- Google is also pairing the phone rollout with broader interface experiments, including browser use on mobile, per testingcatalog's browser-use post, and a Gemini-powered pointer demo in the DeepMind pointer post, which koltregaskes linked.

You can read Google's main Gemini Intelligence announcement, the separate Chrome on Android post, and the AI Pointer demo, which shows where this UI work is headed. The interesting part is how many of the reveals are not standalone app features at all: they are attempts to move Gemini into system surfaces such as autofill, widgets, and cross-app actions, as Google's thread and testingcatalog's AppFunctions post make explicit.

What shipped

Google's public framing is straightforward: Gemini Intelligence is "the best of Gemini" on its most advanced Android devices, with features rolling out in waves later this summer to Pixel and Galaxy phones.

The announced behaviors break down cleanly:

- Cross-app automation: Gemini can chain actions across apps, with Google's example pulling a syllabus from Gmail and adding the required books to a cart, per Google's feature list.

- Gemini Personal Intelligence: one-tap form filling uses personal context, according to Google's thread.

- Rambler: spoken thoughts get cleaned into polished text, with filler words removed, per Google's thread and testingcatalog's Rambler post.

- Custom widgets: users can build widgets in natural language to keep specific information on screen, according to Google's thread and testingcatalog's widgets post.

- Broader Android redesign: testingcatalog's roundup also ties the release to a new design pass during the Android Show.

Google's official post is here: Gemini Intelligence on Android.

Chrome auto-browse

The Chrome piece is its own product surface, not just a footnote in the Android bundle. testingcatalog's Android Show roundup called it "Gemini in Chrome gets Browser Use," while testingcatalog's follow-up said browser use is coming to mobile too.

The linked Chrome post, "Bringing Chrome AI to Android," describes it as auto browse on Chrome for Android, with the flow reduced to three steps: prompt, approve, and let Chrome handle the chore in the background. That makes the mobile browser another agent surface alongside the OS-level automation demos.
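To make the prompt, approve, run flow concrete, here is a minimal Python sketch of an approval gate placed in front of an agent's planned browser actions. It only illustrates the pattern under stated assumptions: the `PlannedStep` type, the `run_with_approval` function, and the step names are hypothetical, and none of this is Chrome's actual implementation or API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PlannedStep:
    """One hypothetical action an agent proposes (not a Chrome API type)."""
    description: str
    action: Callable[[], str]


def run_with_approval(
    prompt: str,
    plan: list[str] if False else list[PlannedStep],
    approve: Callable[[str, list[str]], bool],
) -> list[str]:
    """Show the whole plan to the user once and execute only if approved.

    Returns the results of executed steps, or an empty list when the user
    declines, so no step runs without consent.
    """
    summary = [step.description for step in plan]
    if not approve(prompt, summary):
        return []
    results = []
    for step in plan:
        # A real agent would run these in the background; this sketch runs them inline.
        results.append(step.action())
    return results


# Demo with hypothetical steps; `executed` records which actions actually ran.
executed: list[str] = []


def make_step(name: str) -> PlannedStep:
    def action() -> str:
        executed.append(name)
        return f"done: {name}"
    return PlannedStep(name, action)


plan = [make_step("open hotel booking page"), make_step("fill stay dates")]
declined = run_with_approval("book this hotel", plan, lambda prompt, steps: False)
approved = run_with_approval("book this hotel", plan, lambda prompt, steps: True)
```

The design point is that approval covers the whole plan up front rather than each click, which matches the "approve once, then let it run" framing; a declined plan leaves `executed` empty.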

A few commentary posts gave concrete examples of the intended workflow. The Rundown AI's summary described snapping a hotel brochure photo and jumping into an Expedia booking flow, while kimmonismus's post highlighted browsing and autofill as the notable behaviors.

Rambler and Personal Intelligence

Rambler looks like Google pushing LLM cleanup directly into voice input, not just into chat. testingcatalog's post described it as a new voice input that turns a brain dump into clean sentences, and Google's thread says it removes the "umms" and "errs" while turning speech into polished text.

Personal Intelligence is the other half of that stack. In a separate thread, GeminiApp said the system can connect data from Gmail, Google Photos, Google Search, and YouTube history to assemble travel details and itinerary suggestions, while a follow-up demo showed it generating future-trip ideas from past reservations and preferences.

Put together, Google is treating personalization as runtime context for mobile actions:

- connected app data feeds form fill and itinerary assembly, per GeminiApp's thread

- speech cleanup feeds polished text generation, per Google's thread

- both are exposed as system utilities rather than standalone chat prompts, according to Google's launch thread
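The "personalization as runtime context" idea can be sketched as merging records from connected sources into one context and then using that context to fill a form. The source names below mirror the ones GeminiApp listed, but the data shapes, field names, the precedence rule, and the `build_context` and `fill_form` helpers are hypothetical assumptions; Google has not published how Personal Intelligence resolves or combines data.

```python
from typing import Optional

# Hypothetical per-source snapshots; the real data formats are not public.
ConnectedData = dict[str, dict[str, str]]


def build_context(sources: ConnectedData, priority: list[str]) -> dict[str, str]:
    """Merge per-source fields into one context dictionary.

    Sources earlier in `priority` win when two sources disagree on a field,
    because they are applied last.
    """
    context: dict[str, str] = {}
    for source in reversed(priority):
        context.update(sources.get(source, {}))
    return context


def fill_form(form_fields: list[str], context: dict[str, str]) -> dict[str, Optional[str]]:
    """Fill each requested field from context; unknown fields stay None for the user."""
    return {name: context.get(name) for name in form_fields}


# Demo with made-up data.
sources = {
    "gmail": {"full_name": "A. Traveler", "hotel": "Hotel Example"},
    "photos": {"passport_country": "UK"},
    "search": {"hotel": "Other Hotel"},
}
context = build_context(sources, ["gmail", "photos", "search"])
filled = fill_form(["full_name", "hotel", "phone"], context)
```

Here `filled` maps `hotel` to the Gmail value because Gmail has the highest assumed priority, and `phone` stays `None` since no source supplies it, which is where a one-tap flow would fall back to asking the user.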

Widgets and interface surfaces

The widget story is more interesting than the name suggests. testingcatalog's post said Android will get GenUI widgets so users can create widgets for the exact information they want, and Google's thread describes them as custom widgets built to keep important information front and center.

That shifts Gemini from answering requests to generating persistent interface elements. Commentary around the launch kept landing on the same pattern: The Rundown AI called it "vibe-code your own widgets," while WesRoth's graphic grouped Create My Widget with autofill, Rambler, and busy-work automation as first-class product surfaces.

Google is also testing other interface primitives around that idea. The AI Pointer demo, linked by koltregaskes's pointer post, shows Gemini attached to the pointer itself, and kimmonismus's summary described the interaction as point, speak, and act without opening a separate chat window.

AppFunctions

AppFunctions is the most developer-relevant reveal in these announcements because it hints at how Gemini gets from demo videos to real cross-app execution. testingcatalog's post described it as working "like MCPs for Android apps," which is a useful shorthand for app-exposed actions that an agent layer can call.

Google did not foreground AppFunctions in the consumer-facing tweets the way it foregrounded Rambler or widgets, but it fits the rest of the launch unusually well:

- Gemini Intelligence promises multi-step actions across apps, per Google's feature list

- Chrome is getting an approval-based auto-browse flow, according to GeminiApp's Chrome retweet

- AppFunctions suggests the app-side contract needed to make those actions less demo-only and more platform-native, per testingcatalog's AppFunctions post
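To show what "app-exposed actions that an agent layer can call" might look like, here is a hypothetical Python sketch of an MCP-style function registry: apps register named, described functions, and an agent layer chains calls across them, reusing Google's syllabus-to-cart example. This is not the Android AppFunctions API; the `FunctionRegistry` class, the function IDs, and the inbox and cart data are all invented for illustration, and the agent's plan is hard-coded rather than produced by a model.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class AppFunction:
    app: str
    name: str
    description: str  # natural-language text an agent could match a task against
    handler: Callable[..., Any]


@dataclass
class FunctionRegistry:
    functions: dict[str, AppFunction] = field(default_factory=dict)

    def register(self, app: str, name: str, description: str,
                 handler: Callable[..., Any]) -> None:
        self.functions[f"{app}.{name}"] = AppFunction(app, name, description, handler)

    def describe(self) -> list[str]:
        """What an agent layer would see: callable IDs plus descriptions."""
        return [f"{key}: {fn.description}" for key, fn in sorted(self.functions.items())]

    def call(self, key: str, **kwargs: Any) -> Any:
        if key not in self.functions:
            raise KeyError(f"no app function registered as {key!r}")
        return self.functions[key].handler(**kwargs)


# Two hypothetical apps exposing actions.
registry = FunctionRegistry()
inbox = {"syllabus": ["Book A", "Book B"]}
cart: list[str] = []


def add_to_cart(items: list[str]) -> int:
    cart.extend(items)
    return len(cart)


registry.register("mail", "find_reading_list",
                  "Return book titles from a course syllabus email",
                  lambda subject: list(inbox.get(subject, [])))
registry.register("shop", "add_to_cart", "Add items to the shopping cart", add_to_cart)

# The agent's plan, hard-coded here: read the syllabus, then fill the cart.
books = registry.call("mail.find_reading_list", subject="syllabus")
count = registry.call("shop.add_to_cart", items=books)
```

The agent only ever sees IDs and descriptions from `describe()`, while each app keeps control of what its handlers actually do; that separation is roughly what makes the MCP comparison apt.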

If that framing holds, the Android Show was not just a Gemini feature dump. It was a quiet platform story about giving Android apps agent-callable surfaces.