Perceptron releases Mk1 with 2 FPS video reasoning, 32K context, and $0.15 per 1M input

Perceptron launched Mk1, a multimodal model for video and embodied reasoning with native 2 FPS video, 32K context, and structured spatial outputs. OpenRouter access and the low input price make it usable for deployment, not just demos.

TL;DR

- Perceptron shipped Mk1 as a multimodal model for video and embodied reasoning, and perceptroninc's launch post paired the launch with API availability and a price point of $0.15 per 1M input tokens and $1.50 per 1M output tokens.

- According to perceptroninc's video processing post, Mk1 handles native video at up to 2 FPS across a 32K context window, while OpenRouter's listing says the model exposes structured spatial outputs such as points, boxes, polygons, and clips.

- In perceptroninc's embodied reasoning post, the company said Mk1 can keep object identity through occlusion, reason across multiple camera streams, and read task outcomes directly from video.

- perceptroninc's partner list framed the early use cases around sports clipping, teleop data curation, manufacturing QC, satellite and drone imagery, and smart glasses assistants.

- AkshatS07's thread and AkshatS07's Modal note add the most useful systems detail: native video at 2 FPS increases prompt length, while structured outputs and hybrid thinking increase decode length.

You can read the official writeup, try the demo, and inspect the OpenRouter model page. The interesting bits are not just the benchmark claims. The output format includes first class geometry primitives, OpenRouter's listing calls out hybrid reasoning on top of video, and AkshatS07's Modal note explains why the serving stack had to absorb longer prompts and longer decodes at the same time.

Native video

The launch pitch is straightforward: Mk1 is meant to work on video as video, not as a bag of sparsely sampled frames. Perceptron said the model processes native video up to 2 FPS over a 32K context window, and can return structured time codes when asked for a moment inside a long stream.

AkshatS07 added one more concrete capability in AkshatS07's capability note: native video support, temporal grounding, and multimodal in-context learning were the changes that pushed its embodied reasoning results. That gives the launch a slightly different shape from generic VLM releases, more sequence model than screenshot model.

Spatial outputs

Perceptron described four embodied reasoning behaviors in one place:

- pixel precise pointing

- object identity through occlusion

- joint reasoning across multiple camera streams

- reading task outcomes directly from video

The image side is similarly concrete in perceptroninc's image capability post, which lists:

- pointing

- counting into the hundreds in dense scenes

- reading analog gauges and clocks

- structured document extraction with layout preserved

That structure matters because the model is not being framed as a chatbot with vision attached. OpenRouter's listing says the outputs can be points, boxes, polygons, and clips, which is a much better fit for robotics, inspection, and retrieval workflows than freeform text alone.

Benchmarks and price

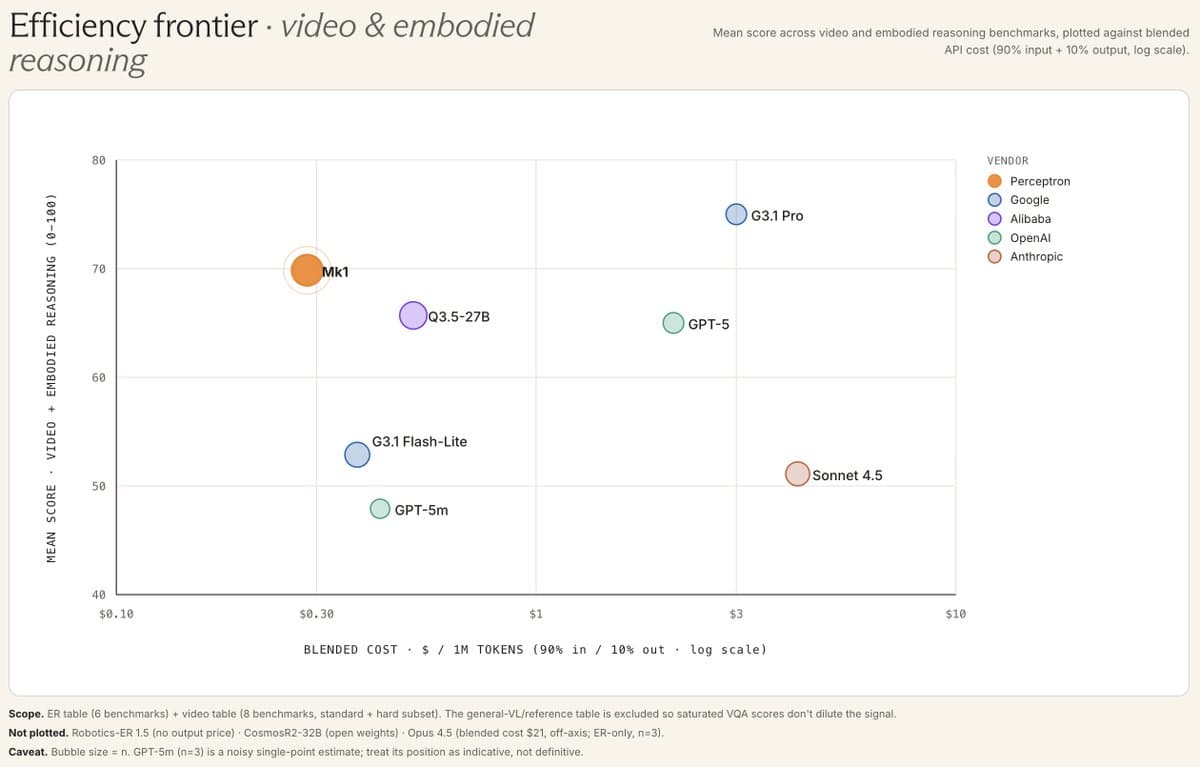

Perceptron is claiming parity with Gemini, GPT, Claude, and Qwen on video reasoning at lower cost, while ArmenAgha narrowed the comparison to Gemini-Flash, Gemini-ER, and larger Qwen models on perceptive and physical AI tasks. The public evidence here is still company supplied. The useful engineering fact is the price, not the frontier chest thumping.

Mk1 launched at $0.15 per 1M input tokens and $1.50 per 1M output tokens, per perceptroninc's pricing post and OpenRouter's pricing post. That is cheap enough to make long video pipelines sound deployable instead of purely demo shaped.

Access and early uses

The initial distribution is simple: API access is live, the public demo is live, and OpenRouter added the model the same day via the OpenRouter model page. ArmenAgha also said in ArmenAgha's weights note that Perceptron plans a small partner program for direct model access.

Perceptron listed five partner workflows:

- auto clipping highlights from live sports

- curating teleop episodes into training data without human annotators

- running multimodal QC agents on manufacturing lines

- analyzing satellite and drone imagery for utilities and insurance

- powering wearable assistants on smart glasses

That list is a nice tell. The company is going after physical world and operational video workloads first, not generic consumer multimodality.

Infrastructure

The most technical detail in the evidence pool came after launch. AkshatS07 said Perceptron's internal lesson was that existing text stacks do not scale well to multimodal, and described Mk1 as a scaling hypothesis focused on video and embodied reasoning.

The serving note is even better. All inference runs on Modal, and AkshatS07's Modal note says Mk1 changed serving requirements in three ways:

- native video at 2 FPS increases prompt length

- structured outputs increase decode length

- hybrid thinking also increases decode length

AkshatS07 said those constraints are why the team leaned on GPU snapshotting, serverless GPU infrastructure, and autoscaling. For anyone trying to map where video-native models get expensive, that is the new information in this launch, not just another benchmark bar chart.