Epoch AI reports GPT-5.4 Pro solved one FrontierMath Open Problems conjecture

Epoch AI says GPT-5.4 Pro produced a publishable solution to one 2019 conjecture in its FrontierMath Open Problems set, with a formal writeup planned. Treat it as an early milestone worth reproducing, not as blanket evidence that frontier models can already automate math research.

TL;DR

- Epoch AI says GPT-5.4 Pro helped solve one problem in FrontierMath: Open Problems, specifically a 2019 conjecture contributed by Will Brian that he and Paul Larson had been unable to settle in several later attempts, according to Epoch's announcement and the problem context.

- The claim is stronger than a one-off chat screenshot: Epoch says Brian confirmed the construction, plans a publication writeup, and expects possible follow-on work from the AI-generated ideas, as described in the publication note and the problem page thread.

- Epoch also says it reproduced the result in its own evaluation scaffold, where Gemini 3.1 Pro, GPT-5.4 xhigh, and Opus 4.6 max could solve the problem "at least some of the time," per the replication note and the thread update.

- The broader picture is still narrow: Terence Tao says current systems have solved about 50 Erdős problems but only hit roughly a 1-2% success rate on a wider sweep, according to the Tao clip and the summary quote. That context makes the Epoch result an early milestone rather than evidence that math research is automated.

What exactly was solved?

Epoch says the solved item is a "Moderately Interesting" FrontierMath Open Problems entry: a conjecture from a 2019 paper by Will Brian and Paul Larson. In the follow-up post, Epoch says Brian had tried and failed to solve it both at the time of writing and in later attempts, which makes the claim more meaningful than a benchmark win on a synthetic task.

The linked problem page identifies the problem as a Ramsey-style hypergraph construction question and says GPT-5.4 Pro produced a solution that Brian confirmed. Epoch's publication update says Brian now plans to write up the result for publication, "possibly including follow-on work" generated by the model's ideas.

A context post quoting Brian's statement says the AI approach "works out perfectly" and "eliminates an inefficiency" in the prior lower-bound construction. That is the clearest public hint so far about what changed technically in the proof idea.

How reproducible is the result?

Epoch is not presenting this as a single lucky prompt. In the main replication claim, it says Kevin Barreto and Liam Price first elicited a solution from GPT-5.4 Pro and that a third user did so shortly after, suggesting the result can be recovered by multiple operators rather than one irreproducible conversation.

More importantly for engineers building evals, Epoch says it reproduced the solve in its own scaffold. Its scaffold result says Gemini 3.1 Pro, GPT-5.4 xhigh, and Opus 4.6 max are all able to solve the problem "at least some of the time," which points to a harnessable capability rather than a model-specific anecdote.

Epoch also says the problem-page post includes a full chat transcript for GPT-5.4 Pro's original solution plus other models' solutions from the harness. That makes this unusually inspectable for a frontier-model math claim, even if the underlying success probabilities, prompt sensitivity, and verification costs are still not quantified in the thread.
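Because "at least some of the time" is not a number, anyone rerunning the problem in their own harness will want an uncertainty range rather than a bare pass count. The sketch below computes a Wilson score interval for a solve rate. The function name and the run counts in the example are hypothetical illustrations, not figures from Epoch's thread or part of its scaffold.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")

    p_hat = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p_hat + z2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / trials + z2 / (4 * trials * trials)) / denom
    # Clamp to [0, 1] to absorb floating-point drift at the boundaries.
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical example: 3 verified solves out of 20 independent harness runs.
low, high = wilson_interval(3, 20)
print(f"solve rate 3/20, approx. 95% interval: {low:.3f} to {high:.3f}")
```

The Wilson interval is used here instead of the simpler normal approximation because it stays sensible at small sample sizes and near 0% or 100%, which is the regime a handful of expensive frontier-model runs tends to land in.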

What should engineers take away from this?

The practical takeaway is that frontier models look increasingly useful as research assistants on hard formal problems, but only under heavy elicitation and verification. In the Tao discussion, Terence Tao describes current models as a "trustworthy coworker" for applying standard techniques, while also saying their broader hit rate on hard math remains around 1-2%.

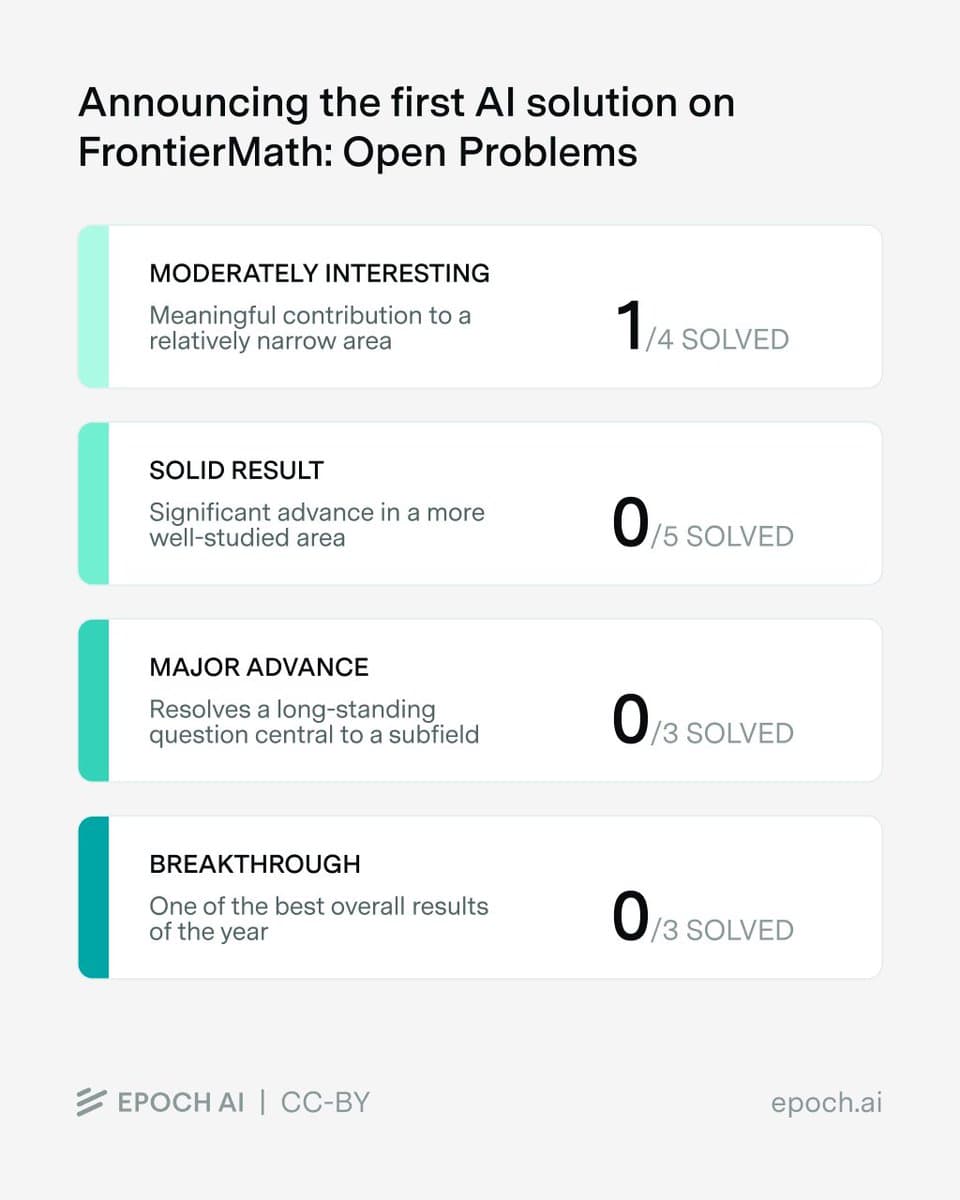

That framing matters here. Epoch's own benchmark overview says only one of the four "Moderately Interesting" problems is solved so far, with every higher-importance tier still at zero. A reaction screenshot makes that breakdown explicit as 1/4 solved in the lowest tier and 0/11 in the more ambitious tiers.
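As a quick check on the arithmetic, the tally can be written out directly. This is a minimal sketch using only the counts quoted above; the label for the combined higher tiers and the helper name are assumptions made for illustration, since the source gives only the 0/11 total.

```python
from fractions import Fraction

# (solved, total) per tier, using the counts quoted in the text.
# "higher tiers (combined)" is a placeholder label, not Epoch's naming.
TIERS = {
    "Moderately Interesting": (1, 4),
    "higher tiers (combined)": (0, 11),
}


def overall_rate(tiers: dict[str, tuple[int, int]]) -> Fraction:
    """Return total solved problems divided by total problems across tiers."""
    solved = sum(s for s, _ in tiers.values())
    total = sum(n for _, n in tiers.values())
    return Fraction(solved, total)


rate = overall_rate(TIERS)
print(f"overall: {rate} ({float(rate):.1%})")
```

Pooled this way, the scoreboard is 1 of 15 problems, and that single solve sits entirely in the lowest tier, so the pooled figure should not be read as a uniform per-problem success rate.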

So this story is best read as a reproducible milestone for evaluation design and assisted theorem discovery. It is not yet evidence that a lab can hand frontier models an open-problem queue and expect reliable autonomous research throughput.