AI FILMS Studio launches Happy Horse 1.0 with 720p/1080p text-to-video and image-to-video

AI FILMS Studio added Happy Horse 1.0 with text-to-video and image-to-video, 720p/1080p output, five aspect ratios, and 3-15 second clips. Comparison posts immediately framed it against Seedance 2.0, but early creator signal stayed mixed on whether its motion quality holds up on harder shots.

TL;DR

- AI FILMS Studio added Happy Horse 1.0 with text-to-video and image-to-video, 720p and 1080p output, five text-to-video aspect ratios, and 3 to 15 second clips, according to zaesarius's launch thread and the linked AI FILMS tutorial.

- The AI FILMS workflow leans into film-language prompting: zaesarius's walkthrough says the model responds to instructions like dolly shots, pans, cranes, and rack focus.

- Pricing on AI FILMS scales by duration and resolution, with zaesarius's launch thread pegging a 3 second 720p clip at 420 credits and a 15 second 1080p clip at 4,200 credits.

- Early comparison posts immediately turned this into a Seedance 2.0 fight, but ozansihay's side-by-side test and techhalla's reaction thread both argued Happy Horse still looks weaker on harder motion shots.

- The rollout went beyond a single studio integration: the Leonardo repost and AIwithSynthia's OpenArt post show Happy Horse landing on multiple creation platforms, while fal's API announcement opened developer access.

The primary sources are the AI FILMS Studio tutorial and the fal API announcement. The debate split quickly between a Seedance comparison clip and a post framing the two tools as having different strengths.

AI FILMS Studio workflow

The AI FILMS release is pretty straightforward. Text-to-video and image-to-video are both live, both support 720p and 1080p, and both bill at the same per-second rate, according to zaesarius's launch thread and the linked product tutorial.
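The launch thread gives two price points: 420 credits for a 3 second 720p clip and 4,200 credits for a 15 second 1080p clip. Assume billing is strictly per second with no fixed fee. Under that assumption, the two figures imply 140 credits per second at 720p and 280 credits per second at 1080p.

The minimal sketch below reproduces both published numbers. The `estimate_credits` helper is hypothetical and is not an AI FILMS tool. Any other duration it prices is an extrapolation, not a quoted rate.

```python
# Assumed linear per-second rates, inferred from the two published price points.
RATE_PER_SECOND = {
    "720p": 420 // 3,     # 3 s at 720p = 420 credits -> 140 credits/s
    "1080p": 4200 // 15,  # 15 s at 1080p = 4,200 credits -> 280 credits/s
}


def estimate_credits(seconds: int, resolution: str) -> int:
    """Hypothetical cost estimate for one Happy Horse clip on AI FILMS."""
    if not 3 <= seconds <= 15:
        raise ValueError("clip length must be 3 to 15 seconds")
    if resolution not in RATE_PER_SECOND:
        raise ValueError(f"unsupported resolution: {resolution}")
    return seconds * RATE_PER_SECOND[resolution]


print(estimate_credits(3, "720p"), estimate_credits(15, "1080p"))
```

Under this model, a 10 second 720p test clip would cost about 1,400 credits. That helps explain why the tutorial steers users toward short test clips before committing to a full 1080p render.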

The concrete controls are where the launch gets useful:

- Text-to-video aspect ratios: 16:9, 9:16, 1:1, 4:3, 3:4, per zaesarius's feature list

- Clip length: 3 to 15 seconds, per the launch thread

- Prompt style: cinematic camera verbs like dolly, pan, crane, and rack focus, per zaesarius's prompting notes

- Style anchors: terms like "film still" and "photorealistic," per the walkthrough

- Image-to-video behavior: preserves the source composition and subject, with output ratio matched to the input image, per zaesarius's notes

- Nodes Graph support: the official AI FILMS tutorial says the model is also available inside its node editor
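The controls above amount to a small set of constraints, and a pre-flight check can catch a bad request before it spends credits. This is a sketch under stated assumptions. The limits are the ones listed in zaesarius's notes. The `ClipRequest` shape and the `check_request` function are hypothetical and are not AI FILMS's actual API. The camera-move check is advisory and reflects the walkthrough's prompting advice, not a hard model requirement.

```python
from dataclasses import dataclass
from typing import List, Optional

# Limits from the launch notes; the request shape below is hypothetical.
T2V_ASPECT_RATIOS = {"16:9", "9:16", "1:1", "4:3", "3:4"}
RESOLUTIONS = {"720p", "1080p"}
MODES = {"text-to-video", "image-to-video"}
CAMERA_MOVES = ("dolly", "pan", "crane", "rack focus")


@dataclass
class ClipRequest:
    """Hypothetical request shape, not AI FILMS's real interface."""
    mode: str
    prompt: str
    resolution: str
    seconds: int
    aspect_ratio: Optional[str] = None  # only meaningful for text-to-video


def check_request(req: ClipRequest) -> List[str]:
    """Return a list of problems; an empty list means the request fits the listed limits."""
    problems: List[str] = []
    if req.mode not in MODES:
        problems.append(f"unknown mode: {req.mode}")
    if req.resolution not in RESOLUTIONS:
        problems.append(f"unsupported resolution: {req.resolution}")
    if not 3 <= req.seconds <= 15:
        problems.append(f"clip length {req.seconds}s is outside 3-15s")
    if req.mode == "text-to-video" and req.aspect_ratio not in T2V_ASPECT_RATIOS:
        problems.append(f"unsupported text-to-video aspect ratio: {req.aspect_ratio}")
    if req.mode == "image-to-video" and req.aspect_ratio is not None:
        # Output ratio is matched to the input image, so an explicit ratio is redundant.
        problems.append("image-to-video output ratio follows the input image")
    # Advisory: the walkthrough recommends naming a camera move in the prompt.
    if not any(move in req.prompt.lower() for move in CAMERA_MOVES):
        problems.append("prompt names no camera move (dolly, pan, crane, rack focus)")
    return problems


print(check_request(ClipRequest("text-to-video", "A horse", "4k", 20, "21:9")))
```

A request like "Slow dolly in on a rain-soaked alley, film still" at 1080p, 5 seconds, 16:9 passes cleanly. The example call above returns four problems.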

Seedance comparisons

The first wave of reactions did not treat Happy Horse as a blank-slate launch. They treated it as a direct Seedance 2.0 contender.

That produced two different reads. AIwithSynthia's comparison post framed the split as style versus motion, while ozansihay's side-by-side test said Seedance looked clearly stronger on a fast cat-chasing-mouse shot through a crowded Istanbul street.

The harsher take came from techhalla's reaction thread, which dismissed the idea that Happy Horse belongs in the same league as Seedance. For a low-volume launch, that is the real signal: creators immediately picked up the benchmark framing, then started testing whether the motion actually backs it up.

Creator-facing prompt language

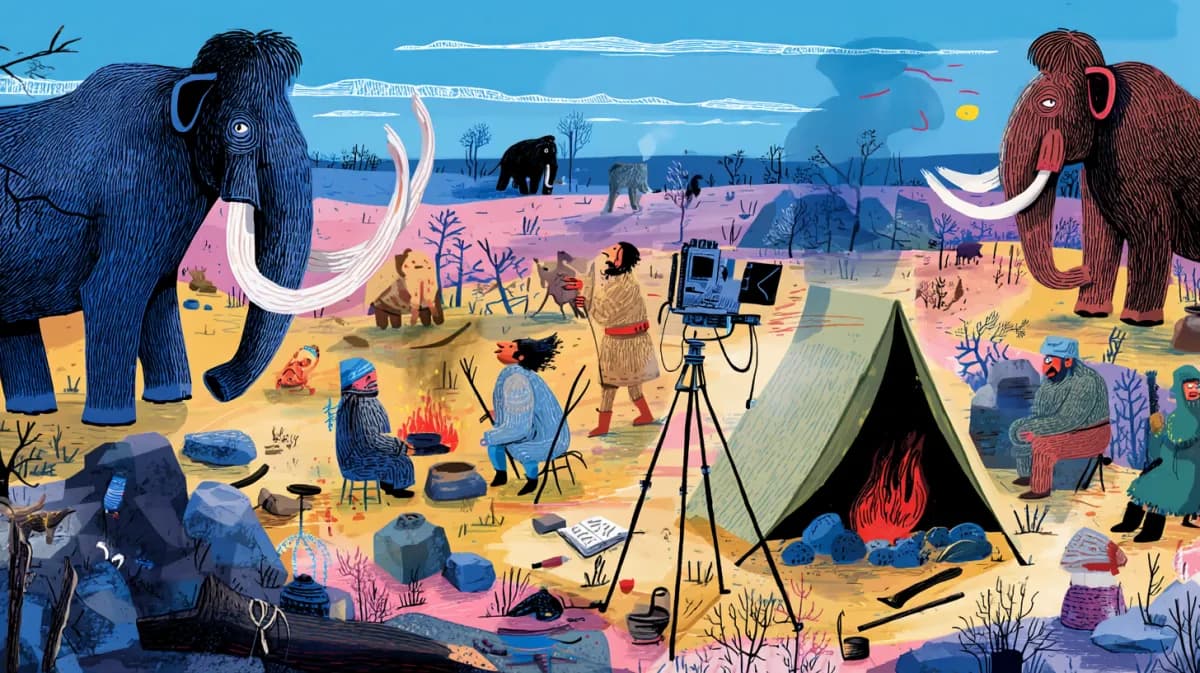

The creative pitch around Happy Horse is less about abstract model quality and more about how precisely you can direct a shot. The prompt example repost pushes a long cyberpunk alley prompt, while zaesarius's tutorial thread emphasizes camera movement, lighting, and mood instead of generic scene description.

That lines up with the AI FILMS tutorial's framing of the model as a cinematic tool rather than a novelty generator. The interesting part is not that it makes video. It is that the launch materials keep steering users toward shot grammar, seed control, and short test clips inside the same workspace, according to the AI FILMS tutorial.

Platform spread

Happy Horse did not stay boxed inside AI FILMS Studio for long. The Leonardo repost says Leonardo made it live on April 29, while the OpenArt repost and AIwithSynthia's OpenArt post pitch it as the top-ranked text-to-video model on Artificial Analysis.

The platform claims are not identical. AI FILMS is selling camera control and workflow nodes, and OpenArt is selling benchmark rank and 15 second 1080p clips with synced audio. Meanwhile, fal's API announcement says developers could call four endpoints on day one: text-to-video, image-to-video, reference-to-video, and video-edit.

That wider rollout matters because the product story is already splitting by surface. Inside AI FILMS, Happy Horse looks like a filmmaker tool. Across OpenArt, Leonardo, and fal, it looks more like a fast-moving model slug that every video platform wants in the picker.