OpenAI resets Codex usage limits after 3 million weekly users

OpenAI said Codex reached 3 million weekly users and reset usage limits, with another reset planned for each additional million users up to 10 million. ChatGPT-sign-in Codex will also retire six older models, including gpt-5.2-codex and the gpt-5.1 family, on April 14, so teams should watch for model-default changes.

TL;DR

- OpenAI said Codex hit 3 million weekly users on April 7, up from 2 million less than a month earlier, and Thibault Sottiaux's announcement said the company is resetting usage limits to mark the milestone.

- Sam Altman's follow-up said OpenAI will repeat that reset at each additional 1 million users until Codex reaches 10 million weekly users.

- The same day, OpenAI Devs said ChatGPT-sign-in Codex will retire six older models on April 14, while the linked GitHub discussion says users who still need them can keep using them with their own API key.

- According to OpenAI's deprecation note and the Codex changelog, the surviving ChatGPT-sign-in lineup is gpt-5.4, gpt-5.4-mini, gpt-5.3-codex, gpt-5.2, plus gpt-5.3-codex-spark for Pro.

- The celebration lands in the middle of visible capacity pressure: one paying user's comparison described Codex as able to work for hours without interruption, while the OpenAI community rate-limit thread collected complaints that existing limits were too restrictive.

Codex got an official growth number, an official quota reset, and an official model-picker cleanup all on the same day. For background, OpenAI's February post on scaling past rate limits and the new April 7 Codex changelog entry cover the infrastructure and model changes, and the pricing picture got another tweak last week with pay-as-you-go Codex seats for Business and Enterprise. In Introducing Codex, the product page still describes Codex as a cloud agent in the ChatGPT app that can write features, answer codebase questions, fix bugs, and propose PRs in parallel.

3 million weekly users, and a quota reset tied to growth

The hard number here is new. Sottiaux said Codex reached 3 million weekly users, up from 2 million a little under a month ago.

The quota policy is also unusually explicit. Altman said OpenAI is resetting usage limits now, then repeating that reset every time Codex adds another million weekly users until it reaches 10 million.

That turns a one-off celebration into a public growth ladder. It also signals to engineers that Codex demand is growing fast enough that OpenAI does not want to hold its limits static.
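The ladder is simple enough to write down. The sketch below lists the weekly-user thresholds that would still trigger a reset, assuming a reset fires at every whole million above the current count and that the 10 million mark itself is included; neither detail is spelled out in the announcements, and the function name and parameters are illustrative only.

```python
def reset_milestones(current_users: int,
                     cap: int = 10_000_000,
                     step: int = 1_000_000) -> list[int]:
    """Return the weekly-user thresholds that would still trigger a reset.

    Assumption (not confirmed by OpenAI): a reset fires at each whole
    multiple of `step` above `current_users`, up to and including `cap`.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    # First multiple of `step` strictly above the current count.
    next_threshold = (current_users // step + 1) * step
    return list(range(next_threshold, cap + 1, step))


remaining = reset_milestones(3_000_000)
print(len(remaining), remaining[0], remaining[-1])
```

Under those assumptions, the 3 million mark leaves seven more resets on the ladder, from 4 million through 10 million.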

The ChatGPT-sign-in model list is shrinking on April 14

The retiring ChatGPT-sign-in models are:

- gpt-5.2-codex

- gpt-5.1-codex-mini

- gpt-5.1-codex-max

- gpt-5.1-codex

- gpt-5.1

- gpt-5

The replacement lineup after April 14 is:

- gpt-5.4

- gpt-5.4-mini

- gpt-5.3-codex

- gpt-5.3-codex-spark, Pro only

- gpt-5.2

The tweet thread from OpenAI Devs matched the GitHub discussion, and the Codex changelog adds one extra operational detail: starting April 7, those retiring models no longer appear in the model picker for ChatGPT-sign-in users, even before the full April 14 removal.

If a team still needs another API-supported model, both the tweet thread and the changelog point to the same escape hatch: sign in to Codex with an API key instead of a ChatGPT account.
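For teams that pin a model name in scripts or settings, the two lists above are easy to turn into a quick audit. The following is a minimal sketch: the model names come from the lists in this article, while the function name, the status labels, and the example project names are made up for illustration and do not correspond to any Codex API or config format.

```python
# Model names as listed in this article for ChatGPT-sign-in Codex.
RETIRING = frozenset({
    "gpt-5.2-codex",
    "gpt-5.1-codex-mini",
    "gpt-5.1-codex-max",
    "gpt-5.1-codex",
    "gpt-5.1",
    "gpt-5",
})
REMAINING = frozenset({"gpt-5.4", "gpt-5.4-mini", "gpt-5.3-codex", "gpt-5.2"})
PRO_ONLY = frozenset({"gpt-5.3-codex-spark"})


def classify_model(name: str) -> str:
    """Classify a pinned model name against the April 14 lineup change."""
    key = name.strip().lower()
    if key in RETIRING:
        return "retiring"
    if key in PRO_ONLY:
        return "pro_only"
    if key in REMAINING:
        return "remaining"
    return "unknown"


# Hypothetical pinned models, keyed by made-up project names.
pinned = {"billing-service": "gpt-5.1-codex", "docs-site": "gpt-5.4-mini"}
for project, model in pinned.items():
    print(project, model, classify_model(model))
```

Exact matching matters here: gpt-5.2 stays while gpt-5.2-codex goes, so a prefix check would misclassify one of them.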

OpenAI has been moving Codex toward token-priced access

The reset makes more sense next to OpenAI's own infrastructure and pricing changes. In Beyond rate limits, OpenAI said Codex and Sora both saw usage push beyond early expectations, and described a real-time access engine that counts usage and lets users keep going by purchasing credits after they exceed rate limits.

That framing already showed up in product packaging. On April 2, OpenAI said in Codex now offers pay-as-you-go pricing for teams that Business and Enterprise workspaces can buy Codex-only seats with no fixed seat fee, no rate limits, and billing based on token consumption.

The current Codex rate card describes the same shift in plainer terms: usage is priced directly on input, cached input, and output tokens rather than rough per-message estimates. The quota reset looks less like a random giveaway and more like a pressure release inside a system OpenAI is still rebalancing.
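To make the three-bucket pricing concrete, here is a rough sketch of how a per-token estimate could be computed. The rates are placeholders chosen for easy arithmetic, not figures from OpenAI's rate card, and the sketch assumes the three token counts are disjoint (uncached input, cached input, output), which may not match how usage is actually reported.

```python
from dataclasses import dataclass

TOKENS_PER_RATE_UNIT = 1_000_000  # rates below are per million tokens


@dataclass(frozen=True)
class Rates:
    """Price per million tokens, in whatever unit the rate card uses."""
    input: float
    cached_input: float
    output: float


# Placeholder numbers for illustration only; not OpenAI's actual rates.
PLACEHOLDER_RATES = Rates(input=2.0, cached_input=0.5, output=8.0)


def estimate_cost(rates: Rates,
                  input_tokens: int,
                  cached_input_tokens: int,
                  output_tokens: int) -> float:
    """Sum the cost of each token bucket at its own rate."""
    if min(input_tokens, cached_input_tokens, output_tokens) < 0:
        raise ValueError("token counts must be non-negative")
    total = (input_tokens * rates.input
             + cached_input_tokens * rates.cached_input
             + output_tokens * rates.output)
    return total / TOKENS_PER_RATE_UNIT


print(estimate_cost(PLACEHOLDER_RATES, 1_000_000, 2_000_000, 500_000))
```

The point of the split is that a long session which mostly re-reads cached context is billed very differently from one that generates a lot of fresh output, which a per-message estimate cannot capture.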

Users were already talking about throughput, lockouts, and long sessions

For a tool story, the most revealing community signal was not benchmark bragging. It was people talking about session length and interruption patterns.

Kolt Regaskes said Codex seemed more efficient than Claude Code and could work for hours without interruption, which is exactly the kind of usage pattern that makes a quota reset immediately noticeable. In the other direction, the OpenAI community thread on Codex rate limits was opened after moderators said they had received a significant number of reports that limits were too restrictive.

A few lighter reactions point in the same direction. One reposted comparison praised Codex for disagreeing with the user more often than Claude Code, while another reposted workflow list described doing coding, browser testing, and cleanup inside the Codex app itself.

The rough edges are still very shell-shaped

The last useful detail came from complaints about how Codex actually executes work. zeeg's WSL post said the product's Windows support still amounted to wrapping commands in wsl.exe, then argued that Codex leans too hard on shell interaction and inherits the usual escaping bugs.

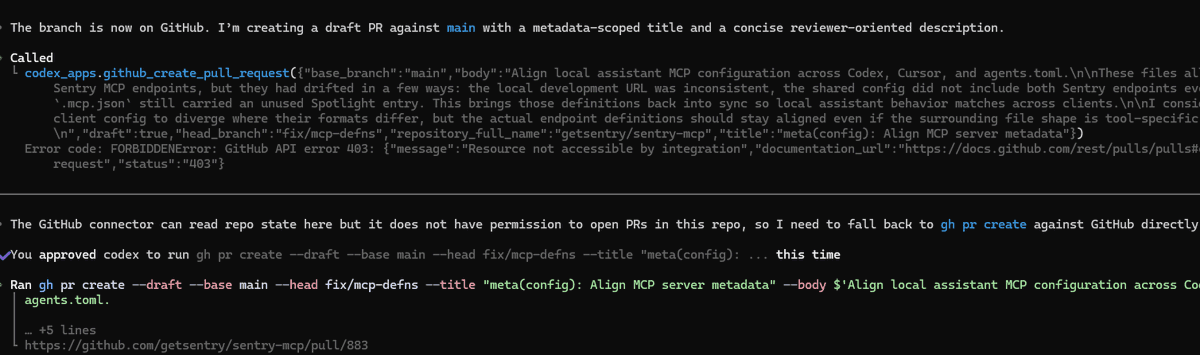

A screenshot from the same user showed another flavor of that problem. Codex tried a native GitHub connector call to create a draft PR, hit a 403 "Resource not accessible by integration" error, then fell back to a successful gh pr create command in the shell.
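The try-the-connector-then-fall-back-to-shell behavior in that screenshot is a familiar pattern, and it can be sketched in a few lines. This is not Codex's implementation: the `connector` callable is a hypothetical stand-in for a native integration, and the stubs at the bottom simulate the 403 so the example runs without GitHub access. The shell path builds a `gh pr create --draft` argument list rather than a command string, which sidesteps the escaping problems mentioned above.

```python
import subprocess
from typing import Callable, Sequence


def draft_pr_argv(title: str, body: str) -> list[str]:
    """Build the gh CLI arguments as a list, so no shell quoting is needed."""
    return ["gh", "pr", "create", "--draft", "--title", title, "--body", body]


def run_subprocess(argv: Sequence[str]) -> str:
    """Run a command without a shell and return its standard output."""
    result = subprocess.run(argv, check=True, capture_output=True, text=True)
    return result.stdout.strip()


def create_draft_pr(title: str,
                    body: str,
                    connector: Callable[[str, str], str],
                    run: Callable[[Sequence[str]], str] = run_subprocess,
                    ) -> tuple[str, str]:
    """Try the connector first; on a permission error, fall back to the CLI.

    Returns (path_used, result), where path_used is "connector" or "shell".
    """
    try:
        return "connector", connector(title, body)
    except PermissionError:
        return "shell", run(draft_pr_argv(title, body))


# Stubs for demonstration: a connector that is always denied, and a fake
# runner that echoes the command instead of executing it.
def denied_connector(title: str, body: str) -> str:
    raise PermissionError("403: Resource not accessible by integration")


def fake_run(argv: Sequence[str]) -> str:
    return "would run: " + " ".join(argv[:4])


print(create_draft_pr("Fix parser", "It's a draft", denied_connector, fake_run))
```

Passing the runner in as a parameter keeps the fallback testable and makes it obvious which path actually produced the PR.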

That is a pretty good snapshot of Codex right now: fast growth, looser quotas, a cleaned-up model roster, and a product surface that still sometimes escapes back into the terminal when the nicer path breaks.