OpenClaw 2026.4.15 adds Opus 4.7 support and bounded memory reads

OpenClaw 2026.4.15 adds Anthropic Opus 4.7, bundled Gemini TTS, bounded memory reads, and transport self-heal fixes. The release targets context and reliability issues users had been reporting this week.

TL;DR

- OpenClaw's release post says 2026.4.15 adds Anthropic Opus 4.7 defaults, bundled Gemini TTS, slimmer context handling, and bounded memory reads, while the official GitHub release expands that with a new model auth status surface.

- The release landed one day after steipete said the team had been shipping rapid hardening work that introduced occasional regressions, and hours after Matthew Berman described OpenClaw as "nearly unusable" for his setup.

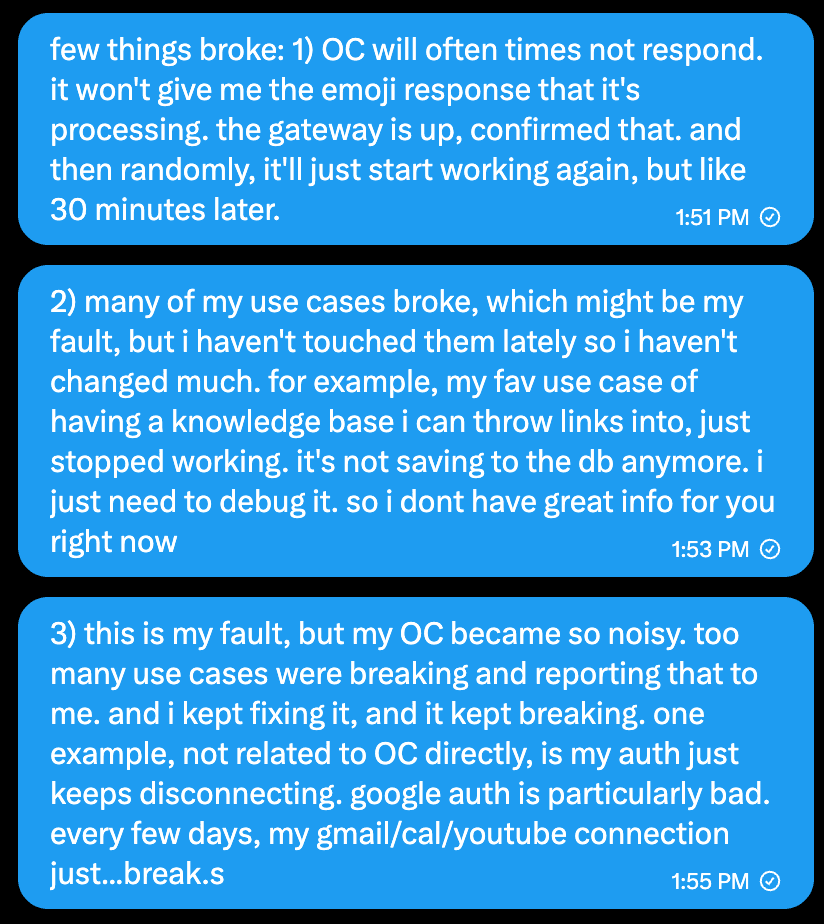

- In Berman's debugging notes, shown in the screenshot above, the failures included long stalls, a knowledge base that stopped saving to the database, and auth connections that kept breaking.

- The 2026.4.15 line also shipped quieter infrastructure fixes: the beta release notes added cloud-backed LanceDB memory indexes and stricter `memory_get` limits, while an auth-profiles fix in the repo describes a Windows bug that could leave the gateway unresponsive after successful model calls.

The full release and the beta notes are both public, and the reliability backdrop is unusually explicit: Berman posted screenshots of broken flows, vincent_koc replied that the project had been doing daily reliability releases, and an open GitHub issue logged an Anthropic socket leak severe enough to exhaust file descriptors after hours of normal agent turns.

What shipped in 2026.4.15

The release is mostly a plumbing pass, which is Christmas-come-early material for people running agents all day.

The concrete additions break down into four buckets:

- Model defaults: Anthropic selections, `opus` aliases, Claude CLI defaults, and bundled image understanding now point at Opus 4.7, according to the official release.

- Voice output: Gemini TTS was added to the bundled Google plugin, including voice selection plus WAV and PCM telephony output, according to the same release notes.

- Context and memory controls: the launch post foregrounded slimmer context and bounded memory reads, while the earlier beta release spells out stricter `memory_get` behavior and cloud storage support for `memory-lancedb`.

- Operator visibility: the new model auth status card surfaces OAuth health and rate-limit pressure in the UI, with token-expiry warnings called out in both the beta and final releases.
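The notes do not spell out the exact `memory_get` limits, so what follows is only a minimal sketch of what a bounded read generally means: clamp the requested line window and cap the returned characters so a single call cannot flood the context. The `bounded_memory_get` function, the `MemoryRead` type, and the `MAX_LINES` / `MAX_CHARS` values are hypothetical, not OpenClaw's API or defaults.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical caps for illustration only; not OpenClaw's real limits.
MAX_LINES = 200
MAX_CHARS = 8_000


@dataclass
class MemoryRead:
    text: str
    start: int       # 1-based first line returned
    end: int         # 1-based last line touched (start - 1 if nothing was returned)
    truncated: bool  # True if a cap cut the request short


def bounded_memory_get(content: str, start: int = 1, count: Optional[int] = None) -> MemoryRead:
    """Hypothetical bounded read: clamp the line window, then cap the characters."""
    lines = content.splitlines()
    first = max(1, start)
    available = max(0, len(lines) - (first - 1))
    # A missing count means "the rest of the file"; negative counts read nothing.
    requested = available if count is None else max(0, min(count, available))
    take = min(requested, MAX_LINES)
    selected = lines[first - 1 : first - 1 + take]
    text = "\n".join(selected)
    truncated = take < requested
    if len(text) > MAX_CHARS:
        text = text[:MAX_CHARS]
        truncated = True
    return MemoryRead(text=text, start=first, end=first + len(selected) - 1, truncated=truncated)
```

Returning a `truncated` flag instead of silently dropping the tail lets the caller, or the model, request the next window explicitly.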

The reliability context was already public

Berman's follow-up put specifics behind the vague "everything is broken" post: delayed responses, knowledge-base writes no longer persisting, and Google auth disconnects every few days. vincent_koc answered that the project had been on daily reliability releases, which matches the commit tempo in the 2026.4.15 beta and final tags.

The repo shows the same pattern. An auth-profiles fix says a read-only `auth-profiles.json` on Windows could cascade into "all models failed" behavior and make the gateway look dead even after a successful request. Separately, issue #67461 reports a streamed Anthropic API socket leak that reached 11,507 open connections in five hours, then broke every exec-style tool call with EBADF errors.

A quieter change: leaner local and remote memory plumbing

The beta notes include two changes that matter more than the tweet summary suggests: `memory-lancedb` can now store durable indexes on remote object storage, and OpenClaw added a GitHub Copilot embedding provider plus a reusable transport helper for memory search.

The same release line also introduced an experimental local-model lean mode that drops browser, cron, and messaging tools by default so weaker local models can fit the runtime more cleanly, according to the full 2026.4.15 notes. That is a different kind of reliability fix: fewer moving parts before the model even starts.
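The notes describe lean mode only by the tool families it drops. A minimal sketch of that kind of default-off filtering, assuming a hypothetical registry where each tool carries a family label, might look like this; the `Tool` type, the family names, and the `lean` and `keep` parameters are illustrative, not OpenClaw configuration keys.

```python
from dataclasses import dataclass

# Tool families the 2026.4.15 notes say lean mode drops by default.
LEAN_MODE_DROPPED = frozenset({"browser", "cron", "messaging"})


@dataclass(frozen=True)
class Tool:
    name: str
    family: str  # hypothetical grouping label


def select_tools(tools, lean=False, keep=frozenset()):
    """Return the tools exposed to the model; lean mode drops heavy families unless a tool is kept by name."""
    if not lean:
        return list(tools)
    return [t for t in tools if t.family not in LEAN_MODE_DROPPED or t.name in keep]
```

In a typical tool-calling setup each exposed tool also adds its schema to the prompt, so dropping whole families shrinks what a small local model has to process on every turn.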