Workshop launches local trace inspector with 1-line install and Codex-readable logs

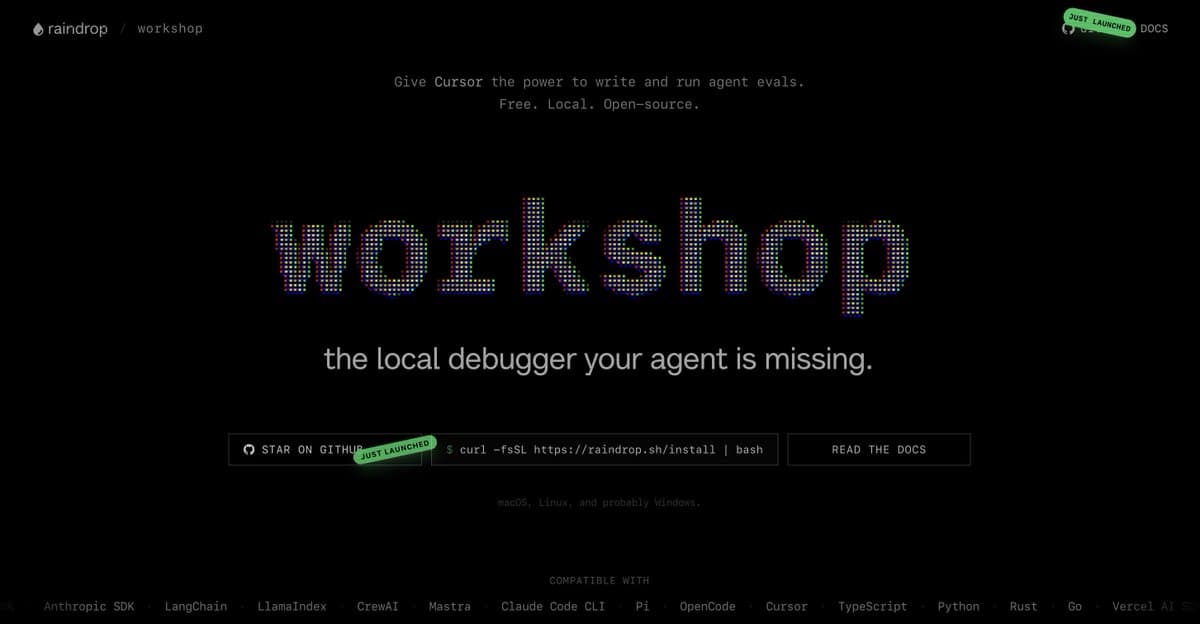

Workshop open-sourced a local agent debugging tool that exposes traces for humans, Codex, and Claude Code with a one-line install and GitHub repo. It turns agent runs into something teams can inspect and reuse for evals instead of treating terminal sessions as black boxes.

TL;DR

- Workshop says its new local trace inspector makes agent runs visible to both humans and coding agents, with traces that Codex and Claude Code can read for eval and testing loops, per benhylak's launch post.

- The project shipped as free open source with a one-line installer, a GitHub repo, and an install page, according to benhylak's links post.

- Early usage centered on Claude Code and Codex: jxnlco's Codex test says it worked inside the Codex desktop app's in-app browser, while benhylak's follow-up pitched tool-call-level Claude Code traces.

- The pitch lands because local agent debugging is still messy: dexhorthy's shannon thread showed a separate proof of concept that drives Claude interactive through tmux and tails transcript JSONL files, and the TraceMind Reddit post describes the same monitoring gap from the eval side.

You can browse the repo, hit the install page, and watch benhylak's demo render a run as linked tool, LLM, and function calls. A second teaser, benhylak's drip post, shows a small CLI wrapper around the install flow. On the adjacent-hacks front, dexhorthy's shannon thread is worth a look for its tmux-plus-JSONL diagram, and the TraceMind Reddit post frames the same problem as quality monitoring instead of trace inspection.

Workshop

Workshop's core claim is simple: local agent runs should stop being black-box terminal sessions. The launch post says the tool exposes traces that people can inspect directly and that Codex or Claude Code can also consume, which turns the same run log into input for evals and automated testing.
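To make the "run log as eval input" idea concrete, here is a minimal sketch of how a trace of linked calls could feed an automated check. The schema is an assumption for illustration only: the field names `id`, `parent`, `kind`, and `name`, and the sample span names, are hypothetical and are not Workshop's actual trace format.

```python
import json

# Hypothetical trace: one JSON object per line, each span linked to its parent.
# Field names and span names are illustrative assumptions, not Workshop's schema.
SAMPLE_TRACE = """\
{"id": "1", "parent": null, "kind": "llm", "name": "plan"}
{"id": "2", "parent": "1", "kind": "tool", "name": "read_file"}
{"id": "3", "parent": "1", "kind": "tool", "name": "run_tests"}
{"id": "4", "parent": "3", "kind": "function", "name": "parse_results"}
"""


def load_spans(text):
    """Parse newline-delimited JSON into a list of span dicts."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def children_of(spans, parent_id):
    """Return the spans whose parent is the given span id."""
    return [s for s in spans if s["parent"] == parent_id]


def tool_calls(spans):
    """Return the names of all tool-call spans, in trace order."""
    return [s["name"] for s in spans if s["kind"] == "tool"]


def check_ran_tests(spans):
    """Toy eval: the run passes only if some tool call ran the tests."""
    return "run_tests" in tool_calls(spans)


spans = load_spans(SAMPLE_TRACE)
print(tool_calls(spans))
print(check_ran_tests(spans))
```

The point of the sketch is that the same records a person would browse in a UI can also be asserted against in a test, which is the loop the launch post describes.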

The packaging is unusually lightweight for agent tooling. benhylak's links post points to the project site, the GitHub repo, and a one-line install flow.

A later post adds a small raindrop drip command that opens a picker after install. That makes the launch feel more like a local developer utility than a hosted observability product.

Codex and Claude Code

The most interesting detail in the launch thread is not the UI but the file format target. benhylak's launch post says Codex and Claude Code can read the traces too, and jxnlco's Codex test says he tried it inside the Codex desktop app's in-app browser.

That positions the tool as shared infrastructure between agent runners and agent debuggers. In replies, benhylak's integration reply says the team built bespoke integrations for "every single agent framework in the world," while benhylak's convention reply frames the broader bet as a standardized tracing convention.

The monitoring gap

Workshop is landing in a market that is still improvising. Dex Horthy's shannon repo, shown in dexhorthy's shannon thread, wraps Claude interactive in tmux and tails transcript JSONL files from ~/.claude/projects.

The diagram in that post breaks the workaround into three pieces:

- a CLI wrapper that accepts prompts and output modes

- a tmux session that feeds keys into Claude interactive

- transcript JSONL tailing for streamed state and logs

Horthy also says bidirectional stdio streaming is still non-trivial and labels the whole thing a proof of concept. That makes Workshop's cleaner packaging the real story here: several builders are clearly trying to turn agent execution into inspectable local artifacts, not just chat transcripts.
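The JSONL-tailing piece of that workaround can be sketched in a few lines. This is a generic illustration of the technique, not code from the shannon repo: the helper name `read_new_records` and the record fields in the demo are hypothetical, and the real transcript schema is not described in the thread.

```python
import json
import os
import tempfile


def read_new_records(path, offset):
    """Return (records, new_offset) for complete JSONL lines written after offset.

    A trailing line without a newline is treated as still being written and is
    left for the next call, so the offset only advances past complete lines.
    """
    records = []
    with open(path, "rb") as f:
        f.seek(offset)
        while True:
            line = f.readline()
            if not line.endswith(b"\n"):
                break  # end of file, or a partial line still being written
            offset = f.tell()
            text = line.strip()
            if text:
                records.append(json.loads(text))
    return records, offset


# Demo with a temporary file standing in for a transcript (fields are made up).
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "transcript.jsonl")
    with open(path, "w") as f:
        f.write('{"type": "user", "text": "hi"}\n{"type": "assist')
    first, offset = read_new_records(path, 0)

    # The writer finishes the partial line; the next poll picks it up.
    with open(path, "a") as f:
        f.write('ant", "text": "hello"}\n')
    second, offset = read_new_records(path, offset)

print(first)
print(second)
```

A real tailer would call something like this in a polling loop or behind a file watcher. Handling half-written lines is one of the details that makes this kind of wrapper fiddly, which fits the proof-of-concept label.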

Voice agents and eval tooling

A few hours after launch, benhylak said someone had already built an adapter for voice agents. That is a small but useful signal that the trace model is not tied to terminal coding agents alone.

The same day, a Reddit discussion about TraceMind described the adjacent failure mode from production monitoring: model quality can crater while HTTP status, latency, and error dashboards stay normal. Workshop is aimed at local trace inspection, not background scoring, but both projects are circling the same missing layer: visible evidence for what an agent actually did.
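A toy illustration of that gap, assuming some evaluator already assigns each response a quality score between 0 and 1. The function names, thresholds, and numbers here are made up for the example and do not come from TraceMind or Workshop.

```python
from statistics import mean


def infra_healthy(requests, max_latency_ms=2000):
    """Classic dashboard check: every request returned 200 within the latency budget."""
    return all(
        r["status"] == 200 and r["latency_ms"] <= max_latency_ms
        for r in requests
    )


def quality_dropped(baseline_scores, recent_scores, tolerance=0.1):
    """Flag a regression when the recent mean score falls below baseline minus tolerance."""
    return mean(recent_scores) < mean(baseline_scores) - tolerance


# Illustrative data: infrastructure looks fine while answer quality has slipped.
requests = [
    {"status": 200, "latency_ms": 420},
    {"status": 200, "latency_ms": 610},
    {"status": 200, "latency_ms": 380},
]
baseline = [0.90, 0.92, 0.88]
recent = [0.60, 0.55, 0.65]

print(infra_healthy(requests))
print(quality_dropped(baseline, recent))
```

Both checks can be true at once, which is the failure mode the Reddit post describes: nothing in status codes or latency reveals that the answers got worse.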