Dreamina Seedance 2.0 adds Omni Reference swaps in existing videos

Dreamina Seedance 2.0 creators showed Omni Reference swaps that replace characters, cars, and monsters inside existing footage while keeping reflections and motion aligned. Separate demos also chained six stills into one take, used start and end frames for transformations, and added voice-driven talking avatars.

TL;DR

- According to egeberkina's main demo, Dreamina Seedance 2.0 can swap a new subject into an existing shot with a single prompt, and her follow-up car test claims reflections and motion still stay aligned to the original footage.

- egeberkina's workflow post shows the current recipe: upload a reference video, upload a source image, switch on Omni Reference mode, then describe where the new element should sit in the scene.

- The official Dreamina Seedance 2.0 page says the model accepts images, video, audio, and text references, supports up to 12 inputs per project, and is built to preserve character consistency while learning camera moves and editing style.

- Beyond swaps, one six-image demo turned stills into a single moving shot, while a start and end frame example used the same system for outfit and character transformations.

- Creators are already pushing style transfer and shot design with Seedance 2.0, from Midjourney-style Omni Reference tests to a full freefall prompt and a 15-second sci-fi action breakdown that spells out lenses, cuts, and timing beat by beat.

You can browse the official model page and check Dreamina's own first and last frame guide. Dreamina also has a separate speaking avatar tool that lines up neatly with the voice-driven demo. The weirdly useful part is how much of the workflow is now reference-based: existing footage for motion, still images for identity, shot lists for timing, and even styles carried over from other tools such as Midjourney.

Omni Reference swaps

The main reveal is simple: Seedance 2.0 is being used as a replacement tool, not just a text-to-video toy. In egeberkina's examples, a dancer turns into a car and then a monster inside the same source footage, and she says her second test kept scene reflections and movement behavior intact.

That lines up with the official Seedance 2.0 page, which says projects can combine image, video, audio, and text references, and that the system can replace or add video elements while maintaining character consistency. The posted workflow also makes clear that Omni Reference is the switch doing the work.
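To make the recipe concrete, here is a minimal Python sketch of how the inputs for one swap could be organized and checked before upload. It is not Dreamina's API: `Reference`, `OmniReferenceJob`, the `role` labels, and the file names are hypothetical, and the sketch assumes every reference of any type counts toward the 12-input limit quoted on the official page.

```python
from dataclasses import dataclass, field
from typing import List

MAX_INPUTS = 12  # per-project limit quoted on the official page
ALLOWED_KINDS = {"image", "video", "audio", "text"}


@dataclass
class Reference:
    kind: str       # one of ALLOWED_KINDS
    source: str     # hypothetical file path or text snippet
    role: str = ""  # hypothetical label, e.g. "motion" or "identity"


@dataclass
class OmniReferenceJob:
    """Hypothetical container for one swap; not a real Dreamina object."""
    prompt: str
    references: List[Reference] = field(default_factory=list)
    omni_reference: bool = True  # the mode the posted workflow switches on

    def add(self, kind: str, source: str, role: str = "") -> "OmniReferenceJob":
        if kind not in ALLOWED_KINDS:
            raise ValueError(f"unsupported reference type: {kind}")
        # Assumption: all reference types share the same 12-input budget.
        if len(self.references) >= MAX_INPUTS:
            raise ValueError(f"at most {MAX_INPUTS} inputs per project")
        self.references.append(Reference(kind, source, role))
        return self

    def validate(self) -> List[str]:
        """Return the recipe steps that are still missing."""
        kinds = {ref.kind for ref in self.references}
        problems = []
        if "video" not in kinds:
            problems.append("missing reference video")
        if "image" not in kinds:
            problems.append("missing source image")
        if not self.omni_reference:
            problems.append("Omni Reference mode is off")
        if not self.prompt.strip():
            problems.append("missing placement prompt")
        return problems


job = OmniReferenceJob(prompt="Place the car from the image where the dancer is.")
job.add("video", "dancer.mp4", role="motion").add("image", "car.png", role="identity")
print(job.validate())
```

An empty list from `validate()` means the four steps from the workflow post are covered: reference video, source image, Omni Reference mode, and a prompt that says where the new element goes.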

Prompt structure became shot design

Two creator posts show the same shift: prompts are starting to look like pre-production documents. MayorKingAI's breakdown splits a 15-second clip into setup, character definitions, five timed camera beats, and a style block with lens choices, color grade, smoke, sparks, and motion blur.

0xInk_'s freefall prompt does the same thing in a different register. It specifies camera behavior, wind physics, wardrobe motion, sound design, negative constraints, and the final close-up beat. The old one-line prompt is still around, but the better Seedance examples already read more like a shot list.
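The shot-list pattern is easy to sketch in code. The Python example below assembles a prompt from a setup line, character definitions, timed beats, and a style block, and checks that the beats add up to the clip length. The section labels, the `Beat` fields, and every piece of sample text are hypothetical placeholders, not MayorKingAI's or 0xInk_'s actual prompts, and nothing here is a format Seedance requires.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Beat:
    seconds: float  # duration of this beat
    camera: str     # camera behavior for the beat
    action: str     # what happens on screen


def render_shot_list(setup: str, characters: List[str], beats: List[Beat],
                     style: str, total_seconds: float = 15.0) -> str:
    """Render a timed, sectioned prompt; fail if the beats miss the clip length."""
    planned = sum(beat.seconds for beat in beats)
    if abs(planned - total_seconds) > 1e-6:
        raise ValueError(f"beats cover {planned:g}s, expected {total_seconds:g}s")

    lines = [f"SETUP: {setup}", "CHARACTERS:"]
    lines += [f"- {character}" for character in characters]
    lines.append("BEATS:")
    start = 0.0
    for number, beat in enumerate(beats, start=1):
        end = start + beat.seconds
        lines.append(f"{number}. [{start:g}s-{end:g}s] {beat.camera}: {beat.action}")
        start = end
    lines.append(f"STYLE: {style}")
    return "\n".join(lines)


# Placeholder content: five three-second beats for a 15-second clip.
beats = [
    Beat(3, "wide establishing shot", "two figures face each other"),
    Beat(3, "slow push-in", "the first figure draws a weapon"),
    Beat(3, "handheld close-up", "sparks fly on impact"),
    Beat(3, "low-angle tracking shot", "the chase begins"),
    Beat(3, "static close-up", "final look to camera"),
]
prompt = render_shot_list(
    setup="rain-soaked rooftop at night",
    characters=["Figure A: armored pilot", "Figure B: masked rival"],
    beats=beats,
    style="35mm lens, teal-orange grade, smoke, sparks, motion blur",
)
print(prompt)
```

Keeping durations in data rather than in free text makes it harder to write a prompt whose beats overrun or fall short of the clip length.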

First and last frame transitions

Dreamina's own first and last frame guide describes the feature as a way to move from a chosen opening frame to a chosen ending frame with smooth transitions and stable visuals. That matches two separate creator tests.

One demo chains six stills into a continuous take with no cuts or fades, using camera motion as the connective tissue. Another demo uses a defined start and end frame for transformations, which the author says works especially well for outfit changes and character morphs.

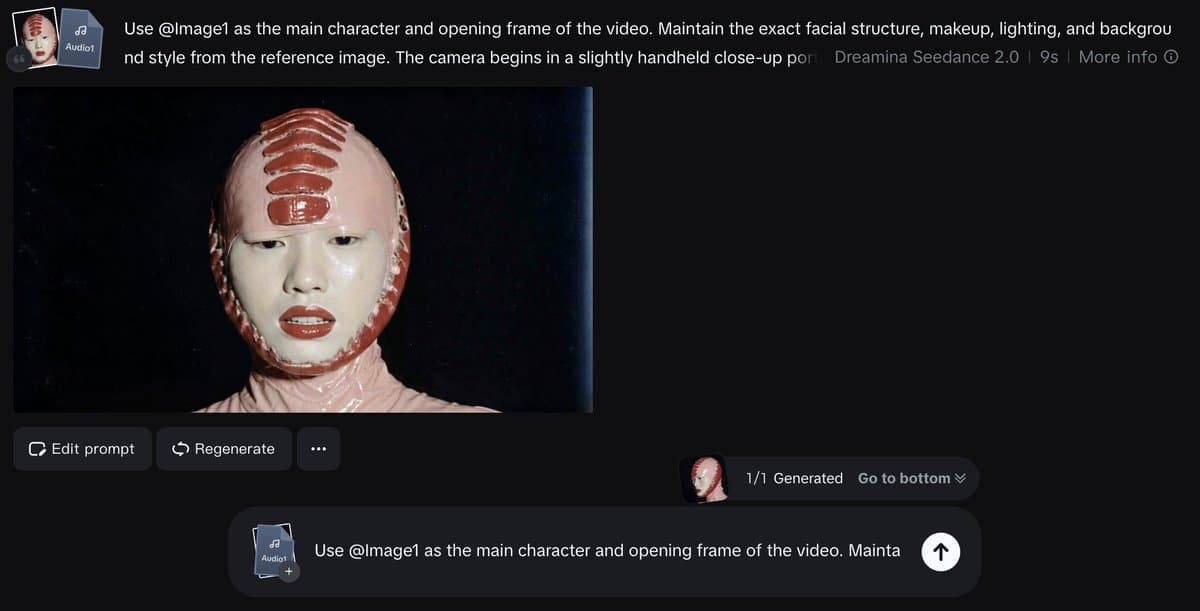

Talking avatars and style transfer

The last demo in the thread adds voice. In egeberkina's final demo, an uploaded image plus a voice file becomes a speaking character with lip sync and facial expression. Dreamina's own speaking avatar page describes the same basic pitch, turning a still image into a voiced character.

Style transfer is showing up at the same time. Artedeingenio says Midjourney styles carry into Seedance 2.0 through Omni Reference, while 0xInk_'s elevator fight and his follow-up stills push toward a PS1-style animation workflow built with Nano Banana and Seedance 2.0 together.