Runway

AI Image and Video Generator

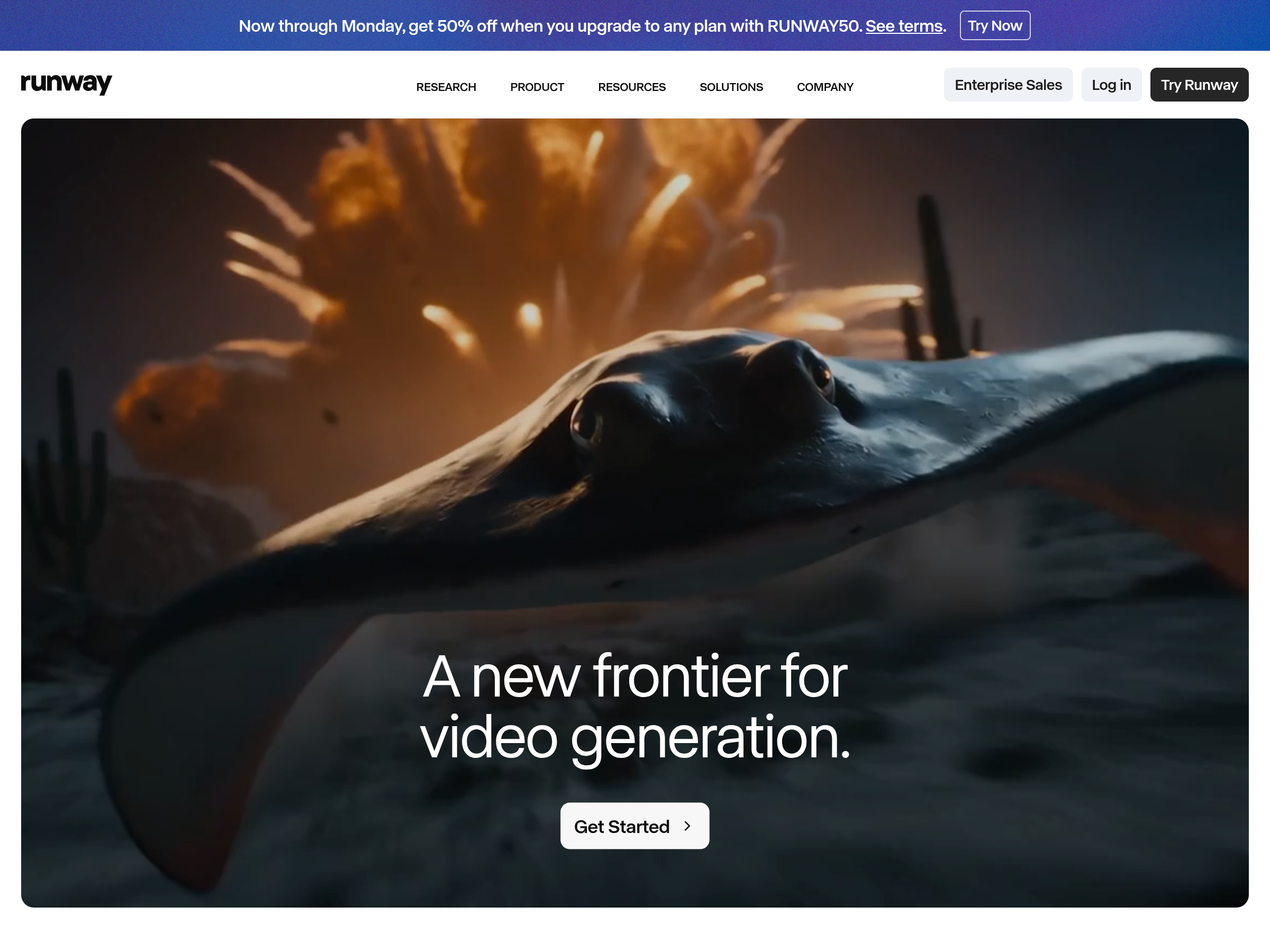

Platform for generating and editing AI images and video, with access to image, video, audio, editing, and language models, plus apps and workflows for creative content.

Recent stories

Runway expanded Seedance 2.0 from Unlimited queues to every paid plan, and creator posts show new access on US accounts. Some users report that human-face references now work on those accounts, while Weave tests and other creators still hit face blocks.

Freepik removed plan and region gates on Seedance 2.0, and Runway opened the model to all paid tiers. Posts about Higgsfield and MovieFlow also point to broader access and free trials, so creators can test availability across more platforms.

Creators showed Seedance 2.0 keeping the same voice across language and film-style changes, while others shared POV battle prompts, real-to-anime transitions, and rapid-cut sequences. These posts outline repeatable ways to control pacing, continuity, and reference-driven motion, so creators can borrow the workflows for short-form scenes.

Runway users report Seedance 2.0 now works on Unlimited plans with one-click upscale and node-based workflows. Early tests peg service limits at two concurrent jobs with 10–20 minute queues, so creators should watch throughput before relying on it for production.

Runway added prompt-generated custom voices for Characters in the web app and API. Creators can now define tone and persona from text instead of recording or cloning a source voice first, which should speed up voice setup.

Runway added Seedance 2.0 for text, image, video, and audio inputs on Unlimited and Enterprise tiers. CapCut also rolled out Dreamina Seedance 2.0 in the U.S., but region gating, relaxed-style queueing, and face-reference issues still affect use.

Turkish creator Ozan Sihay released a seven-minute one-person AI short film built with Seedance 2.0, Kling 3.0, Nano Banana 2, Runway, HeyGen, Suno, and CapCut. The film matters because it turns Seedance’s weak face realism into a masked-character design rule and shows the planning graph behind the finished cut.

Runway released Ad Concepter on the web app to generate ad concepts from a prompt, reference image, and product shot, then tied it to a contest with up to $100K in prizes. The tool makes concept ideation more turnkey, but users still need paid-plan access and the official watermark.

New Multi-Shot demos showed Runway turning short prompts into 15-second dirt-bike chases, forest ambushes, and dialogue-led sequences. The examples make it easier to see the web app as a prompt-to-scene tool, though the evidence so far comes mostly from creators' own tests.

Runway's new web app turns a prompt or starter image into a cut scene with dialogue, sound effects, and shot pacing. Creators can now block whole sequences instead of stitching isolated clips.

Runway's Big Ad Contest is taking 30-to-60-second spots built around seven fictional briefs, with submissions closing April 1 and prizes up to $100,000. Treat it like a live client sprint and lock your concept fast if you already have a paid Runway plan.

Runway opened submissions for AI Festival 2026 across film, design, new media, fashion, advertising, and gaming. The AI film circuit is formalizing fast, so submit early if you want a clearer path from experiment to festival recognition.