Hugging Face Hub launches Kernels with 1.7x-2.5x PyTorch speedups

Hugging Face introduced Kernels on the Hub to publish pre-compiled GPU kernels matched to GPU, PyTorch version, and OS. The packaging makes kernel optimizations shareable and claims 1.7x to 2.5x speedups over PyTorch baselines with torch.compile compatibility.

TL;DR

- Hugging Face launched Kernels on the Hub as a new way to publish and load pre-compiled GPU kernels, with matching for GPU, PyTorch build, and OS, instead of shipping them as ordinary Python packages.

- The core pitch in Clement Delangue's launch post is performance plus portability: kernel builds are meant to load outside

PYTHONPATH, multiple versions can coexist in one process, and the docs say they must work across recent Python versions and PyTorch CUDA and ABI combinations in the Kernel Hub docs. - Hugging Face is claiming 1.7x to 2.5x speedups over PyTorch baselines, while Merve Noyan's launch thread frames the product as a packaging and distribution layer for optimized kernels that developers can benchmark and share.

- The quickstart docs show the interface is basically one

get_kernel()call plus a version number, and the linked docs thread says those versions are designed so newer builds in the same major line do not break the API.

You can read the main docs, jump straight to the quickstart, and inspect the GitHub repository or the PyPI package. One useful pre-launch breadcrumb is the huggingface_hub v1.10.0 release, which added a new Kernel repo type five days before the public rollout. One weird detail, the launch thread's "discover all kernels here" link currently resolves to a user profile rather than a populated directory page.

Kernel Hub packaging

The new part here is not kernel optimization itself, it is Hugging Face turning kernels into first-class Hub artifacts. According to the overview docs, Hub kernels are defined around three constraints:

- Portable: they load from outside

PYTHONPATH - Unique: multiple versions of the same kernel can run in one Python process

- Compatible: they target recent Python versions, multiple PyTorch builds, CUDA variants, C++ ABIs, and older C library versions

That makes this look closer to model distribution infrastructure than to a normal extension package. Noyan's thread also ties the launch to a social workflow, benchmark gains, publish the artifact, then reuse it through the Hub.

get_kernel and version lines

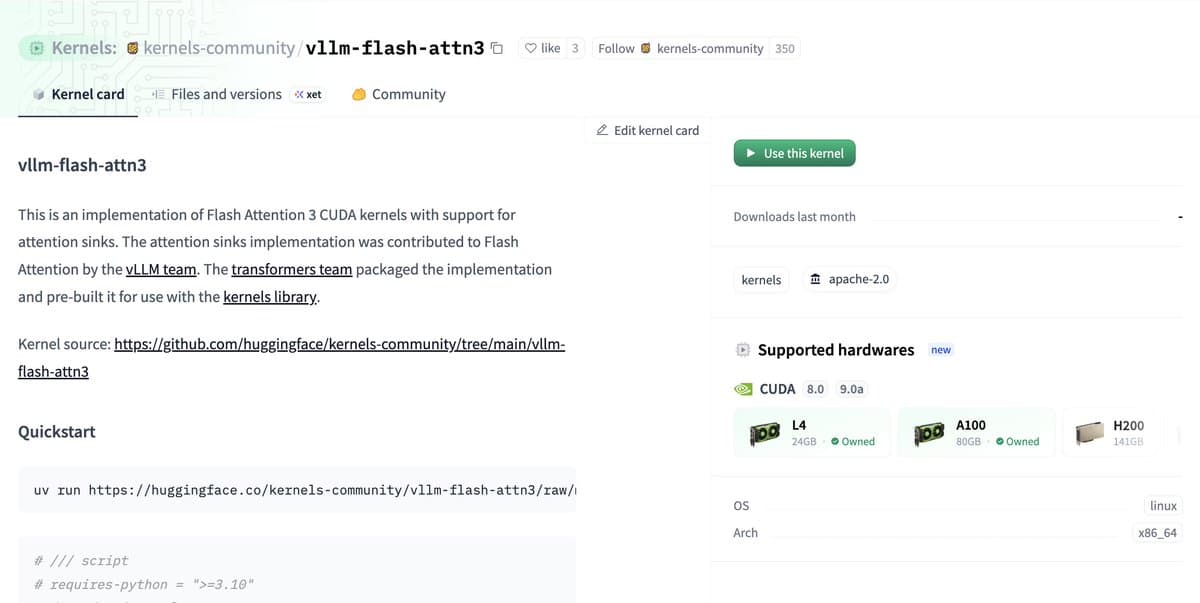

The API reference reduces loading to get_kernel(repo_id, version=...), which downloads a kernel into the local Hugging Face cache and loads it from there. The quickstart example uses get_kernel("kernels-community/activation", version=1) and then calls activation.gelu_fast(y, x) on a CUDA tensor.

The versioning model is more opinionated than the usual package pin. In the quickstart docs, version=1 maps to the v1 branch, and builds within that major line are not supposed to break the API or drop older PyTorch builds. That gives the Hub a stable compatibility lane while still letting maintainers publish newer compiled variants.

Build variants and compatibility targets

The kernel requirements page gets specific about how Hugging Face wants these repos structured. Each kernel repo needs a build/ directory, and each build variant is named from the runtime target, for example build/torch26-cxx98-cu118-x86_64-linux.

Inside those variant directories, the docs require:

- a Python-loadable kernel with

__init__.py - a compatibility layout for older

kernelspackage versions - builds that cover a wide spread of Linux systems and Torch configurations

This is the part most likely to matter to infra-minded readers. Hugging Face is standardizing the ugly matrix, Python version, Torch version, CUDA version, ABI, libc, into a repo format that can be fetched dynamically instead of rebuilt locally.

Kernel repo type and the first-day directory hiccup

A small but telling detail is that the platform plumbing landed before the announcement. The huggingface_hub v1.10.0 release was titled "Instant file copy and new Kernel repo type," which suggests Kernels required a new artifact class in the Hub itself, not just a docs page and Python package.

The public discovery layer still looks rough on day one. The launch thread points people to huggingface.co/kernels to browse available kernels, but that URL currently resolves to a user profile page in Exa's readout rather than a visible catalog. The underlying GitHub repo and PyPI package are already live, so the distribution stack seems further along than the storefront.