MiniMax releases M2.7 open model with 56.22% SWE-Pro and 57.0% Terminal Bench 2

MiniMax open-sourced M2.7 and published coding and agent benchmark claims including 56.22% SWE-Pro and 57.0% Terminal Bench 2. Day-zero support from SGLang, vLLM, Ollama Cloud, Together AI, and NVIDIA NIM makes it easy to try on common serving stacks.

TL;DR

- MiniMax's launch post says M2.7 is now open source, with headline claims of 56.22% on SWE-Pro and 57.0% on Terminal Bench 2, while the official blog post frames it as the first MiniMax model that "deeply participat[es] in its own evolution."

- According to LMSYS's SGLang announcement, M2.7 also posted a 66.6% medal rate on MLE Bench Lite and ships with native "Agent Teams," while Together's model thread adds 97% skill compliance across 40+ complex skills plus tool calling, thinking mode, and structured JSON output.

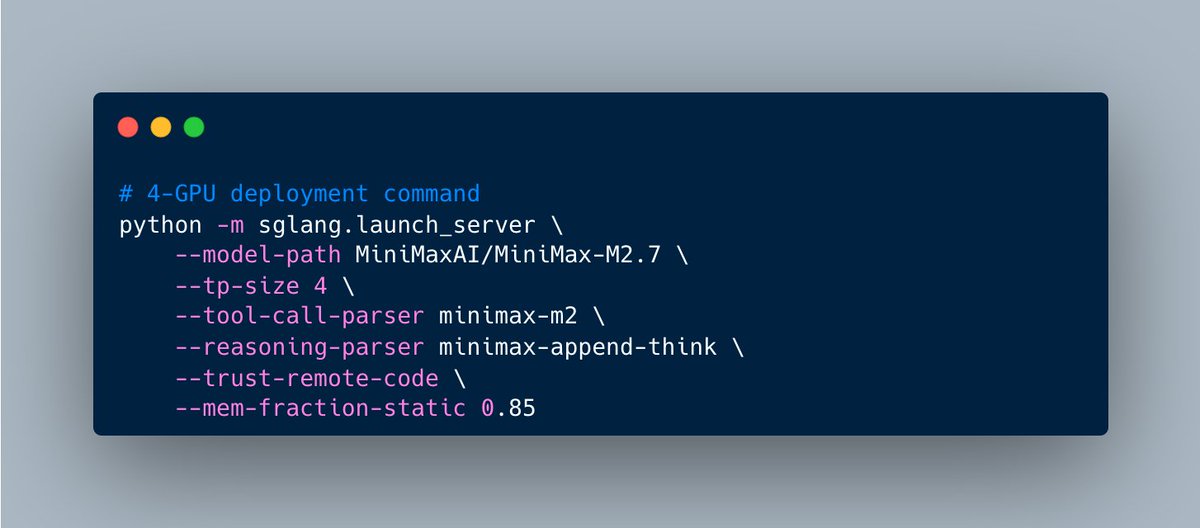

- vLLM's day-0 support post and MiniMax's SGLang follow-up show the model landed immediately on the two serving stacks most open-model users will try first, complete with MiniMax-specific tool and reasoning parsers.

- Ollama's launch post, MiniMax's NVIDIA NIM post, and Together's availability thread turned the release into a same-day distribution blitz across Ollama Cloud, NVIDIA endpoints, and Together.

You can read the model card on Hugging Face, browse the MiniMax launch writeup, pull up the SGLang cookbook, and even see NVIDIA publish a same-day architecture and kernel optimization post. Ollama had it on cloud with a single command on day one via its model page, and Together had a separate API page up with the full benchmark pitch.

Benchmarks

MiniMax pitched M2.7 as an engineering-first open model, not a generic chat release.

The Hugging Face model card and MiniMax's blog post line up on the same benchmark block:

- SWE-Pro: 56.22%

- Terminal Bench 2: 57.0%

- VIBE-Pro: 55.6%

- SWE Multilingual: 76.5

- Multi SWE Bench: 52.7

- NL2Repo: 39.8

- GDPval-AA: 1495 ELO

- MM Claw: 62.7%

- Toolathon: 46.3%

That mix is the interesting part. SWE-Pro and Terminal Bench 2 headline the launch, but MiniMax kept pairing them with office-task evals and harness-compliance metrics, which makes M2.7 look more like a model for long-running agents than a pure coding model.

Self-evolution loop

The strangest claim in the release is that M2.7 helped tune the system used to train and evaluate later versions of itself.

The MiniMax blog says an internal version of M2.7 updated its own memory, built RL skills, and iterated on its harness over 100-plus rounds. MiniMax describes a loop of analyzing failures, changing scaffold code, running evals, and then keeping or reverting the changes. It says that process improved an internal programming scaffold by 30%.

The same post gives a more concrete picture of the harness:

- persistent memory

- self-feedback after each round

- self-optimization based on prior rounds

- data pipelines and training environments

- cross-team collaboration support

- automatic log reading, debugging, metric analysis, code fixes, merge requests, and smoke tests

MiniMax also says the model handled 30% to 50% of an RL research workflow and reached a 66.6% medal rate across 22 MLE Bench Lite competitions. That is Christmas-come-early material for agent benchmark nerds, because the company is not just claiming better coding, it is claiming recursive harness improvement as a product capability.

Agent Teams and office work

A lot of the launch material spent as much time on collaboration and document work as on code.

MiniMax's own materials describe three layers that sit on top of the coding story:

- Agent Teams, for multi-agent collaboration with stable role identity and autonomous decisions

- complex Skills, with MiniMax claiming 97% adherence across 40-plus skills longer than 2,000 tokens each

- office editing, with repeated claims about Word, Excel, and PowerPoint generation plus multi-round high-fidelity edits

This is where the launch starts to differ from the usual open-weight coding model drop. The company kept tying software engineering, office editing, and multi-agent role stability into one harness story, instead of presenting them as separate feature buckets.

Serving stacks on day one

MiniMax did not leave the open release stranded on a single reference implementation.

By launch day, the model had:

- SGLang support, with

--tool-call-parser minimax-m2and--reasoning-parser minimax-append-thinkin the cookbook - vLLM support, with

--tool-call-parser minimax_m2,--reasoning-parser minimax_m2, and auto tool choice in vLLM's launch example - Ollama Cloud access, where Ollama's post says it is licensed for commercial usage and runnable with

ollama run minimax-m2.7:cloud - Together API availability, where Together's thread repeats the launch benchmarks and exposes the model through its hosted endpoint

- NVIDIA NIM hosting, where MiniMax's NVIDIA post says M2.7 works with NemoClaw and OpenClaw

The distribution story matters almost as much as the weights. Open models often spend days in packaging limbo. M2.7 hit the common inference and cloud surfaces immediately.

Architecture and kernels

NVIDIA's same-day technical post added the hard specs missing from most of the social launch thread.

According to NVIDIA's technical blog, M2.7 is a 230B-parameter sparse MoE model with 10B active parameters per token, a 4.3% activation rate, 62 layers, 256 local experts, and 8 experts activated per token. NVIDIA also lists a 200K input context window.

The same post says NVIDIA contributed two MiniMax-specific inference optimizations into open serving stacks:

- a fused QK RMSNorm kernel

- FP8 MoE support through TensorRT-LLM

NVIDIA claims those changes delivered up to 2.5x throughput improvement in vLLM and 2.7x in SGLang on Blackwell Ultra GPUs. That is a separate story from the model benchmarks, and it helps explain why MiniMax pushed so hard on day-one availability across SGLang, vLLM, and NIM in the first place.