Adobe Firefly previews Text to 3D at Runway AI Summit NYC

Summit attendees posted a preview of Firefly generating 3D objects from text, and creators also showed a Boards-based short-film pipeline built in Firefly. Try the workflow if you want one setup for asset generation, background removal, scene layout, and reference-driven animation.

TL;DR

- Adobe Firefly showed a text-to-3D preview at the Runway AI Summit in New York, based on footage from the show floor in which prompts turn into rotatable objects such as a sneaker, a planet, and a helmet (attendee video).

- Separate creator demos the same day showed a more concrete Firefly workflow that already exists: generate characters and environments, remove backgrounds, assemble the scene in Firefly Boards, then use that layout as the brief for animation (workflow thread, process video).

- In that workflow, the key object is the Firefly Boards artboard, which Koldo describes as the step that makes the pipeline work because it locks characters, props, and environment into one composed frame before video generation (artboard explanation).

- Adobe's own product pages already position Firefly as an all-in-one creative AI studio with Firefly Boards, partner models, video generation, and 3D-scene-based image reference tools, which makes the summit preview look like an extension of an existing creative stack rather than a one-off demo (Adobe blog, Boards docs).

Adobe had a keynote slot at the Runway AI Summit, and the most interesting Firefly material coming out of it was half preview, half workflow proof. You can watch the attendee clip of Text to 3D, read Adobe's own writeup on Firefly's expanding model stack, and cross-check the production side with the official docs for Firefly Boards and 3D scene reference tools.

Text to 3D

The summit-floor clip is short, but it is specific. Firefly appears to generate clean 3D objects directly from text, not just image variations, and the examples in the video look like asset candidates for product viz, motion design, or scene blocking.

Adobe has not published a matching launch post for text-to-3D yet. What it has published is a broader Firefly roadmap around image, video, partner models, and custom models, so for now the 3D tool reads as a preview spotted in public rather than a formally documented release (Adobe Firefly update).

Firefly Boards artboard pipeline

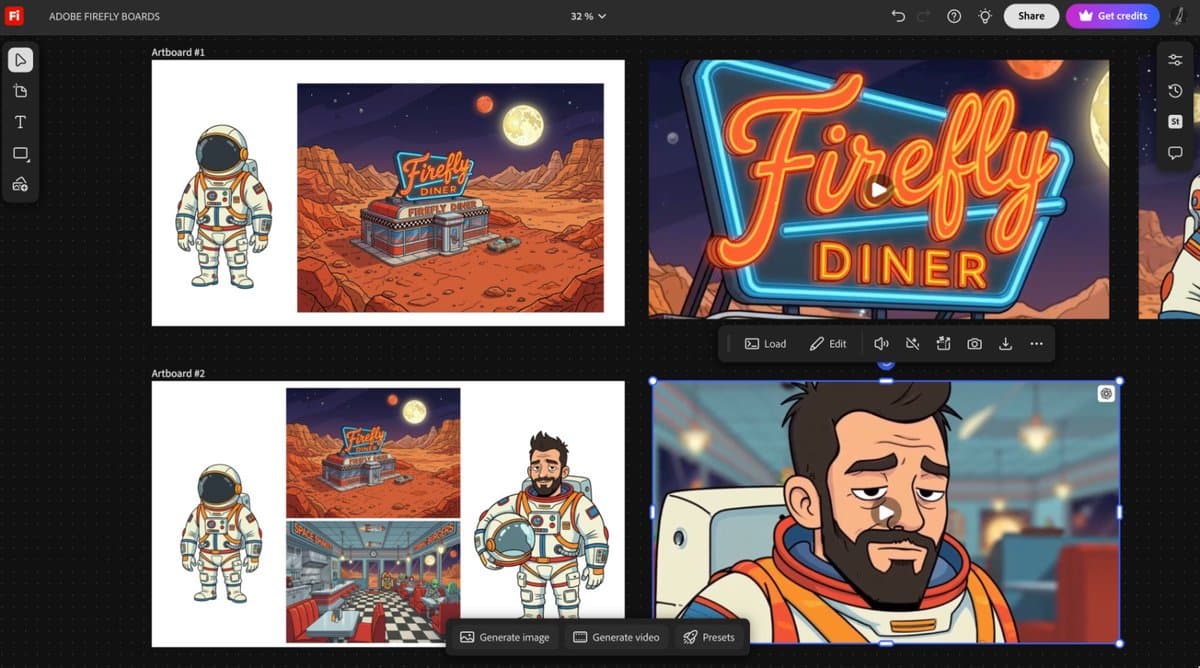

Koldo's thread is more useful than the teaser because it shows an end-to-end pipeline. Every asset in the short film (astronaut, alien waiter, diner exterior, diner interior) was generated in Firefly, cleaned up inside Firefly, then assembled in Firefly Boards before animation (process breakdown).

The workflow breaks into four steps:

- Generate the characters and environments in Firefly.

- Remove backgrounds and isolate the usable assets.

- Place them together inside a Firefly Boards artboard.

- Use that composed board as the visual brief for video generation.

That last step matters because it turns a pile of separate generations into a single staged scene. The final clip keeps the astronaut, diner, and waiter coherent because the composition work happened before motion (final animation).

What Adobe has officially shipped around it

Adobe's official materials already describe the surrounding pieces. Firefly Boards is documented as a mood-boarding and ideation surface inside Firefly, and the current Firefly help pages also list video generation, image generation from partner models, object composites, and 3D-scene-based reference workflows (About Firefly Boards, What's new in Firefly).

That makes the summit story fairly concrete even without an Adobe text-to-3D announcement. One part is already documented: Boards as the place where scenes get assembled. The other part is the fresh reveal: text-to-3D as a likely next input into the same stack, alongside the 3D scene reference tools Adobe already exposes for image generation (3D scene reference docs).