Cursor

The AI Code Editor

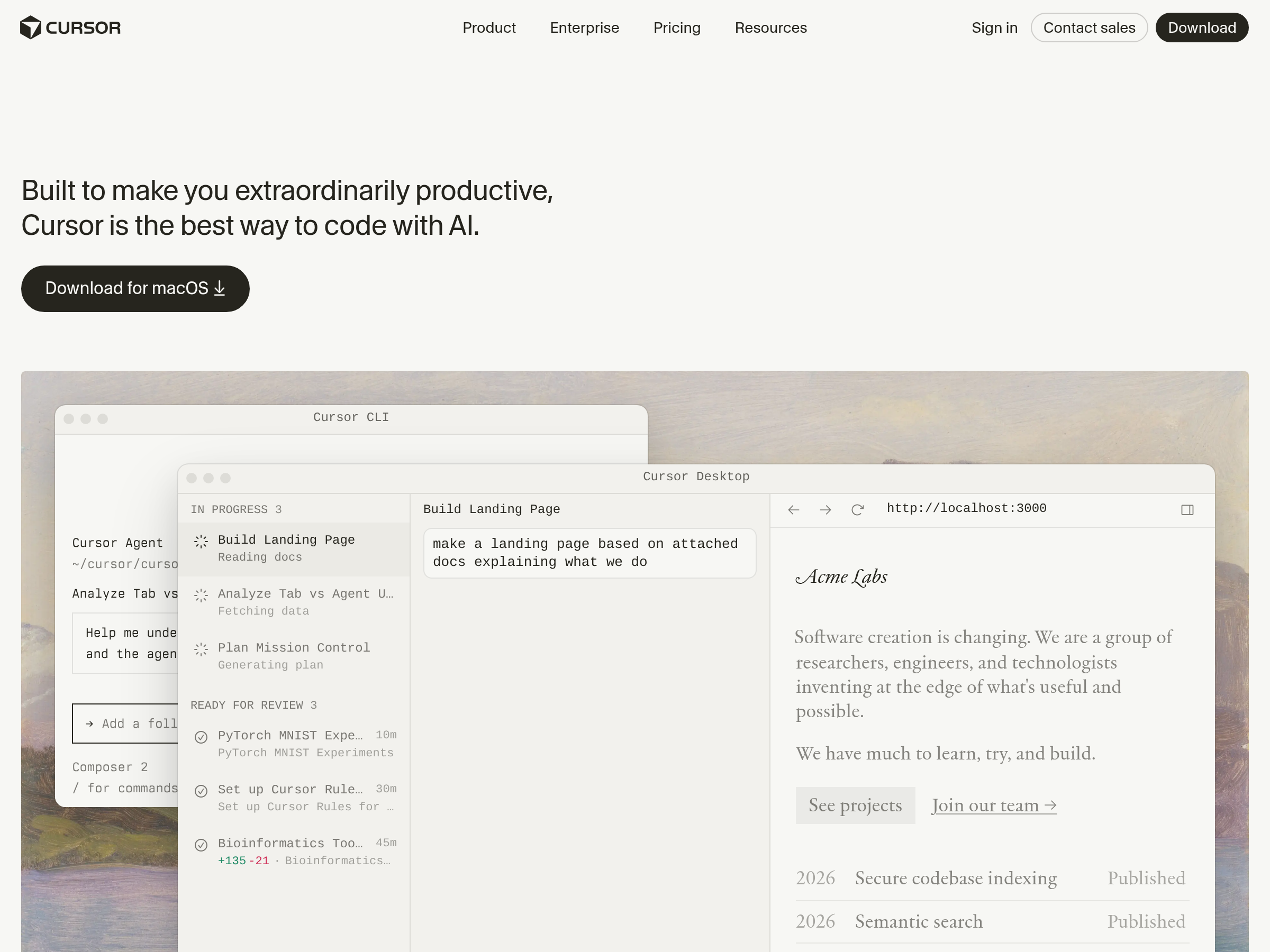

AI code editor for building software with chat, inline edits, and agentic coding workflows.

Recent stories

Anthropic rolled out fast mode for Opus 4.7 to Claude Code and to tools including Cursor, v0, Droid, Conductor, and OpenRouter. Use it where latency matters, but watch pricing: Cursor disclosed a 6x price multiplier, and other tools treat it as a premium tier.

Artificial Analysis launched a Coding Agent Index for model-and-harness pairs, while OpenHands refreshed its model leaderboard. The results show that harness choice matters: cost varies by more than 30x and task time by more than 7x across stacks.

User posts and HN threads compared GPT-5.5 and Opus 4.7 across plan mode, frontend work, and 120K-context sessions. The split results suggest that token burn and instruction discipline matter as much as raw benchmark scores.

Raindrop launched Triage, a Slack-based agent that finds traces, summarizes recurring failures, runs recurring briefs, and opens experiments from production conversations. Teams using Claude Code, Cursor, or Devin can plug it into agent ops to shorten debugging loops.

Cursor added always-on agents that monitor GitHub, investigate failing runs, and open fix PRs automatically. That moves coding agents beyond the editor and into CI recovery after commits land.

Cursor's Team Kit packages internal skills like /verify-this, CLI and UI automation harnesses, PR cleanup, and /loop-on-ci, installable with /add-plugin cursor-team-kit. It turns several internal review and validation habits into reusable commands for agent-driven coding workflows.

Developers posted 11 early Cursor SDK integrations, including QA agents, Gmail-to-Chat handoffs, Chrome extensions, CI autofix, doc sync, and multi-repo orchestration. The demos show Cursor agents moving outside the IDE into existing team workflows with reusable cloud-agent patterns.

Cursor shipped a TypeScript SDK that exposes its runtime, harness, and models for CI/CD jobs, background automations, and embedded agents. The launch lets teams treat Cursor as programmable agent infrastructure, though it still depends on Cursor API access.

Independent tools and platforms shipped GPT-5.5 support within a day of the API rollout, spanning IDEs, hosted research agents, enterprise stacks, and coding agents. That shortens evaluation time because teams can test the model inside existing workflows instead of rebuilding around a single OpenAI surface.

Within a day of GPT-5.5 hitting the API, the model was available in Cursor and other tools, and users and third-party evals reported shorter runs and stronger long-context scores. Higher per-token pricing may be partly offset by shorter loop times and fewer tool-call stalls, so review early benchmark data before changing defaults.

Cursor 3.2 added /multitask async subagents, improved worktrees, and multi-root workspaces, and shipped alongside the GPT-5.5 rollout, which scored 72.8% on CursorBench. The update makes background agent orchestration a first-class IDE workflow instead of a blocking queue.

Cursor 3 adds split-agent panes, tighter cloud-agent controls, voice input fixes, and an 87% reduction in dropped frames during large edits. The update makes the IDE easier to use as a mixed local-cloud agent workspace, while keeping editor navigation and diff review intact.

Cursor 3 introduced a separate agent-first workspace that can run agents locally, in worktrees, over SSH, and in the cloud while keeping the editor available. The release gives teams a path to multi-agent orchestration without giving up the traditional IDE surface.

OpenClaw 2026.3.28 exposes messaging and event handling as nine MCP tools, adds Responses API support, and lets plugins request permission during browser use. Use it to separate transport from agent logic so that Claude Code, Codex, Cursor, and local harnesses can share the same account with less glue code.

Expect wraps browser QA for Claude Code, Codex, or Cursor into a CLI that records bug videos and feeds failures back into a fix loop. It gives coding agents a tighter UI validation cycle without requiring a custom browser harness.

Cursor shipped Instant Grep, a local regex index built from n-grams, inverted indexes, and Bloom filters that drops large-repo searches from seconds to milliseconds. Faster candidate retrieval shortens the coding-agent loop, especially when ripgrep-style scans become the bottleneck.
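Cursor's actual Instant Grep implementation is not described here beyond its ingredients, so the following is only a minimal sketch of the general n-gram inverted-index technique (trigram code search): index every 3-character substring of each file, intersect posting lists to get a small candidate set, then confirm candidates with the real regex. It assumes the caller supplies literal substrings that every match must contain (real engines derive these from the regex automatically), omits the Bloom-filter layer entirely, and uses hypothetical names (`TrigramIndex`, `candidates`, `search`).

```python
import re
from collections import defaultdict


def trigrams(text: str) -> set[str]:
    """Return every overlapping 3-character substring of text."""
    return {text[i:i + 3] for i in range(len(text) - 2)}


class TrigramIndex:
    """Toy inverted index (hypothetical): trigram -> ids of files containing it."""

    def __init__(self) -> None:
        self.docs: dict[str, str] = {}
        self.postings: dict[str, set[str]] = defaultdict(set)

    def add(self, doc_id: str, text: str) -> None:
        # Store the file and record it under each trigram it contains.
        self.docs[doc_id] = text
        for gram in trigrams(text):
            self.postings[gram].add(doc_id)

    def candidates(self, literals: list[str]) -> set[str]:
        """Files containing every trigram of every required literal.

        This is a superset of the true matches. Literals shorter than
        3 characters contribute no trigrams, so they do not filter anything.
        """
        result = set(self.docs)
        for lit in literals:
            for gram in trigrams(lit):
                # .get avoids inserting empty sets into the defaultdict.
                result &= self.postings.get(gram, set())
                if not result:
                    return result
        return result

    def search(self, pattern: str, literals: list[str]) -> list[str]:
        """Narrow with the index, then verify each candidate with the full regex."""
        regex = re.compile(pattern)
        return sorted(d for d in self.candidates(literals) if regex.search(self.docs[d]))


idx = TrigramIndex()
idx.add("a.py", "def load_config(path): ...")
idx.add("b.py", "def save_config(path): ...")
idx.add("c.py", "print('hello')")
print(idx.search(r"(load|save)_config\(", ["_config("]))  # ['a.py', 'b.py']
```

The design choice is the one the blurb points at: the regex engine only runs over files the index cannot rule out, so a search that would otherwise scan the whole repository touches a handful of candidates instead.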

Vercel's Next.js evals place Composer 2 second, ahead of Opus and Gemini despite the recent Kimi-base controversy. The result matters because it separates base-model branding from measured task performance on a real framework workflow.

Cursor and Kimi said Composer 2 starts from Kimi K2.5, with continued pretraining and RL added on top after developers spotted Kimi model IDs in traffic. Teams should benchmark it as a productized open-base stack, not a from-scratch model.

Cursor shipped Composer 2 with gains on CursorBench, Terminal-Bench 2.0, and SWE-bench Multilingual, plus a fast tier and an early Glass interface alpha. It resets the price-performance baseline for coding agents and shows Cursor is now a model company as much as an IDE.

NVIDIA introduced a coalition of labs and platform vendors to co-develop open frontier models, including Mistral, LangChain, Perplexity, Cursor, Reflection, Sarvam, and Black Forest Labs. Watch it if you want open-model efforts tied to DGX Cloud, NIM, and production tooling instead of weights alone.

Cursor published its internal benchmarking approach and reported wider separation between coding models than SWE-bench-style leaderboards show. Use it as a reference for production routing decisions, but validate results against your own online traffic and task mix.