OpenClaw

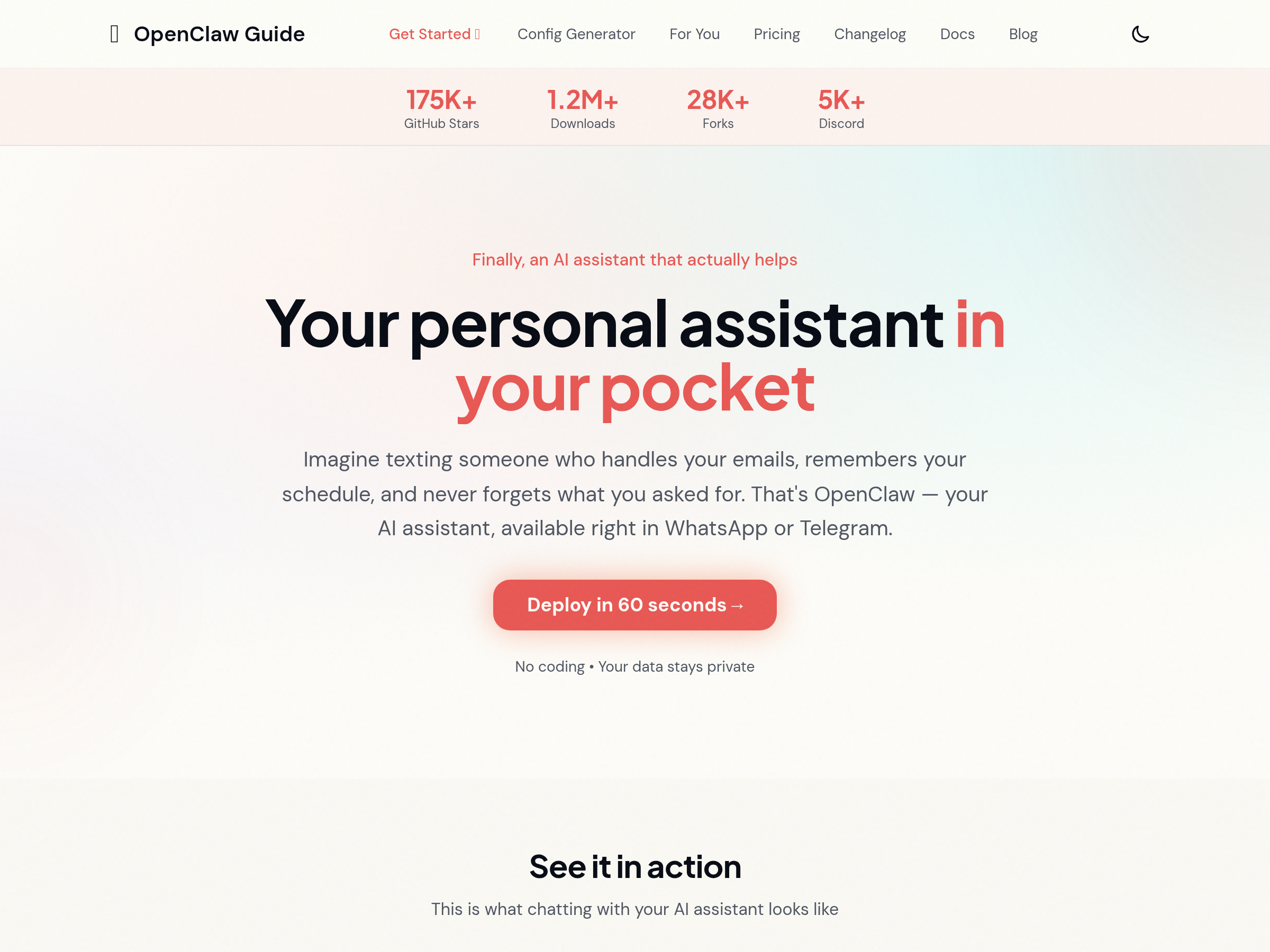

OpenClaw is a self-hosted AI agent harness and runtime associated with OpenClaw AI.

Recent stories

Builders shipped pi-treebase, a Miko voice mode for pi-listens, devrage support, and a Japanese OpenCode Go guide after the first Pi extension burst. The releases arrive as Pi’s provider abstraction gets stress-tested by OpenClaw-scale multi-provider use.

Crabbox 0.11.0 shipped a Google Cloud provider, repo-local job workflows, AWS Windows WSL2 hydration, and a Blacksmith sync-stall guard. Recent Codex and OpenClaw posts show Crabbox already in use for reproducible bug reports and recorded before-and-after QA runs.

OpenClaw 2026.5.4 adds cleaner plugin installs, faster Gateway startup paths, sharper doctor repairs, and fixes for Windows and Discord. The release is aimed at reliability, so update if you rely on the Gateway or plugin tooling.

OpenClaw 2026.5.3 shipped paired-node file transfer, live /steer nudges, and /side side-questions, plus hardened plugin installs. The release changes how long-running agents are controlled and moves files with policy-gated 16 MB hops instead of stdout hacks.

ClawSweeper 0.2.0 turns OpenClaw repo maintenance into an issue-to-PR loop with build checks, repair passes, re-review, and conservative automerge. The release packages a Codex-driven maintenance bot that other repositories can fork instead of wiring their own triage stack.

OpenClaw 2026.5.2 shipped Grok 4.3 as the default xAI chat model and a broad plumbing pass for plugins, session paths, messaging bridges, and voice features. The release matters because it trims startup stalls and cleans up common integration edges in self-hosted agent setups.

OpenClaw now lets users sign in with a ChatGPT account and use an existing subscription inside the harness. That gives teams a sanctioned access path for third-party agent workflows just as Claude Code users report tighter billing and refusal heuristics elsewhere.

Days after Opus 4.7 launched, users reported that OpenClaw or Hermes markers in commit messages could trigger extra billing or refusals, alongside continued throttling complaints. Anthropic says affected users will get refunds, but repo-scanning heuristics may still affect cost and reliability in multi-harness workflows.

OpenClaw 2026.4.29 shipped a new group-chat flow, opt-in follow-up commitments, tighter exec controls, and first-class NVIDIA provider catalogs. The release matters because it pushes OpenClaw toward safer multi-user agent workflows instead of single-session chat hacks.

OpenClaw 2026.4.27 bundles DeepInfra support, better non-image attachments, explicit forward-proxy routing, and stricter model selection. The update broadens provider access while hardening operator-run deployments against routing and session failures.

Sigma added a private AI browser mode that runs OpenClaw with local models such as Gemma 4, Qwen, and Nemotron on-device. That matters because browser automation and page context can stay local instead of being routed through a hosted agent service.

OpenClaw 2026.4.26 shipped Google Live Talk, local-model fixes, `openclaw migrate` imports for Claude and Hermes, and one-command Matrix E2EE. It also hardens plugins, Docker, and transcript compaction for self-hosted agent runs.

Steipete’s maintainer bot ran 50 Codex agents in parallel and closed about 4,000 OpenClaw issues in a day. The cleanup ran into rate limits, so use the README dashboard and Project Clowfish clustering to track large agent sweeps.

OpenClaw shipped a release that routes realtime voice queries to the full agent, defaults new users to V4 Flash, and adds coordinate clicks plus stale-lock recovery for browser automation. It also fixes Telegram, Slack, MCP session, and TTS issues, so update if those flows matter to your setup.

OpenClaw shipped a new release with a gateway-free local TUI, chat-time model registration, and xAI media tools. The update lowers setup friction and adds diagnostics plus trajectory bundles for debugging agent runs.

Infisical introduced Agent Vault, an open-source credential proxy that lets agents call APIs, CLIs, SDKs, and MCP servers without directly reading secrets. It matters because teams can keep policy and secret storage outside the agent runtime while still supporting on-prem and cloud deployments.

Fresh local reports put Qwen3.6-35B-A3B around 40 tok/s on M3 Ultra, extended testing to Strix Halo, and wired it into OpenClaw and Pi-style harnesses. The update matters because Qwen3.6 is moving from quant benchmarks into real local coding-agent loops with clearer hardware limits.

OpenClaw 2026.4.15 adds Anthropic Opus 4.7, bundled Gemini TTS, bounded memory reads, and transport self-heal fixes. The release targets context and reliability issues users had been reporting this week.

Hermes Agent shipped automatic OpenClaw migration, pastebin log sharing, and a reported 20% improvement in loading the right skill. Use the new import path and debug sharing to simplify setup across the official and community add-ons now covering support, web UI, workspace boards, and chat front ends.

Nous said Hermes became the top coding app on OpenRouter while shipping an OpenClaw migration patch, Telegram agent-to-agent messaging, and new memory controls. If you run long-lived agents, watch the migration path and memory settings before moving chats or skills hubs.

Kilo Code’s ClawShop recap bundled a 30-minute KiloClaw setup workshop, SecretRef credential handling, searchable ClawBytes guides, and PinchBench for agentic performance. The event, OpenClaw 2026.4.10, and PetClaw together added new security, memory, budgeting, and desktop layers around the OpenClaw stack.

OpenClaw 2026.4.7 adds a headless inference hub, memory-wiki, session branch and restore, and webhook-driven TaskFlows. Composio also shipped a CLI for secure app authentication, so users can expand OpenClaw from a local coding harness into a broader agent runtime.

Builders shipped a direct Claude Code harness and a ClawHub marketplace skill for OpenClaw workflows. Use these routes to wire agent tooling into OpenClaw, but watch Claude API limits and token burn costs.

Anthropic said Claude subscriptions will stop covering third-party harnesses such as OpenClaw on Apr. 4, with discounted extra-usage bundles, refunds, and one-time plan credits. Heavy Claude-based agent workflows may need to move to API billing or extra-usage bundles because Anthropic cites subscription capacity constraints.

OpenClaw 2026.3.28 exposes messaging and event handling as nine MCP tools, adds Responses API support, and lets plugins request permission during browser use. Use it to separate transport from agent logic so Claude Code, Codex, Cursor, and local harnesses can share the same account with less glue.

Stanford's `jai` package introduces casual, strict, and bare Linux containment modes for AI agents, and users pair the idea with Claude Code and OpenClaw hardening tips. The workflow narrows write scope and reduces persistent exploit paths such as hooks, `.venv` files, and startup artifacts.

Every opened Plus One, a hosted OpenClaw that lives in Slack, comes preloaded with internal skills, and works with a ChatGPT subscription or other API keys. It lowers the ops burden for deployed coworkers, so teams can test packaged agents before building their own stack.

OpenClaw 2026.3.24 adds native Microsoft Teams, OpenWebUI sub-agent access, Slack reply buttons, and a control surface for skills and tools. The release expands where the runtime can plug into enterprise workflows, while also increasing the surface area teams need to secure.

OpenClaw shipped version 2026.3.22 with ClawHub, OpenShell plus SSH sandboxes, side-question flows, and more search and model options, then followed with a 2026.3.23 patch. Teams get a broader plugin surface, but should patch quickly and review plugin trust boundaries as the ecosystem grows.

Nous Research said Hermes Agent crossed 10,000 stars, while users reported easy migrations from OpenClaw and stable long-running use. If you test it, focus on persistent memory, MCP browser control, and delegation behavior under real workloads.

OpenClaw's maintainer asked users to switch to the dev channel and stress normal workflows before a large release that may break plugins. Watch harness speed, context plugins, and permission boundaries closely while the SDK refactor lands.

OpenClaw 3.13 now connects to a real Chrome 146 session over MCP so agents can drive your signed-in browser instead of a separate bot context. Update if captchas or auth state were blocking your web automation flows.

Ollama 0.18.1 added OpenClaw web search and fetch plugins plus non-interactive launch flows for CI, scripts, and container jobs. Pair it with Pi and Nemotron 3 Nano 4B if you want unattended agent jobs on constrained hardware.

Security coverage around OpenClaw intensified with a report on indirect prompt injection and data exfiltration risks, while KiloClaw published an independent assessment of its hosted isolation layers. Review your default configs and sandbox boundaries before exposing agents to untrusted web or tenant data.