Codex adds background computer use on macOS with 90+ plugins and SSH devboxes

OpenAI expanded Codex with background Mac computer use, an in-app browser, image generation, memory preview, automations, and 90+ plugins. The release moves Codex from terminal coding toward long-running UI and ops workflows, though some features remain macOS-first or alpha.

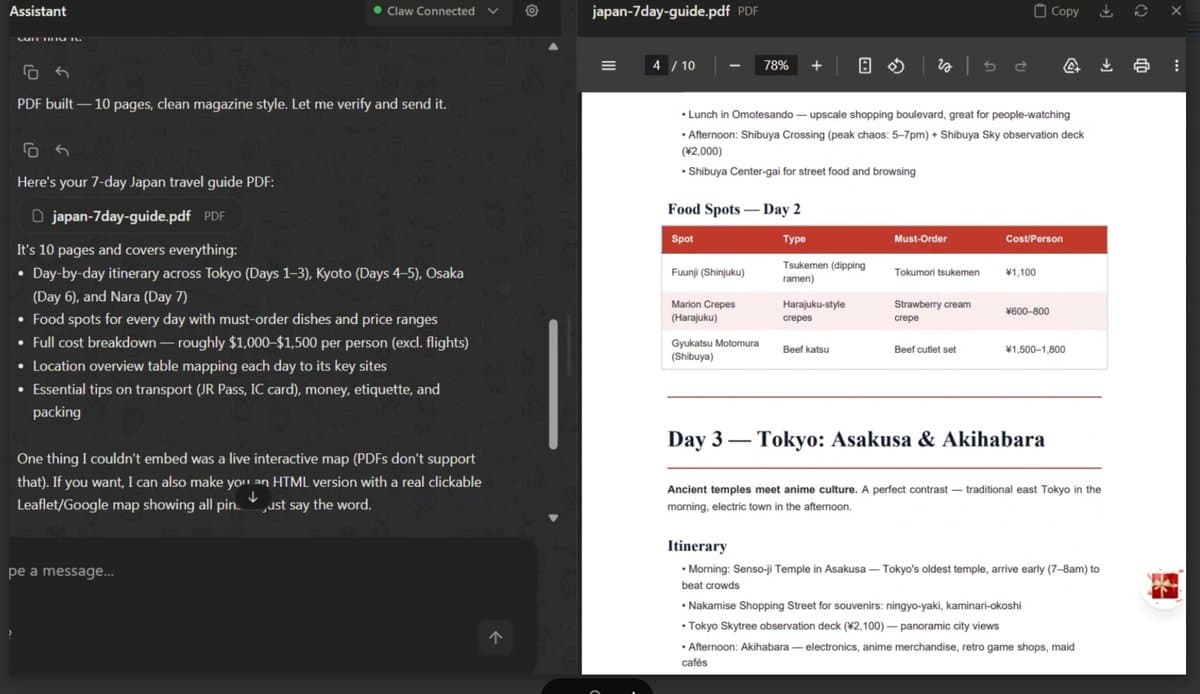

TL;DR

- OpenAI's launch thread says Codex can now use macOS apps with its own background cursor, while the official announcement says multiple agents can work in parallel without blocking your foreground work.

- According to OpenAI's feature thread, the update also adds 90-plus plugins, gpt-image-1.5 image generation, and thread-aware automations, with rollout in the Codex desktop app starting now.

- OpenAIDevs' workflow thread adds the dev-specific pieces: GitHub review comment handling, an in-app browser for local web apps, and SSH access to remote devboxes in alpha.

- The official post says more than 3 million developers use Codex weekly, and kimmonismus' screenshot summary highlights OpenAI's framing that nearly half of usage is already non-coding.

- According to the main HN thread, early discussion centered less on launch hype than on practical questions: architecture quality, SSH workflows, and how background GUI automation works on macOS.

You can read the full launch post, skim the gpt-image-1.5 model docs, browse OpenAI's native development use cases, and check the Codex GitHub releases page. The HN thread is also unusually useful here, because the comments immediately jump to correctness failures, SSH workflows, and the OS-level mechanics behind background input.

Background computer use

The headline feature is simple: Codex now operates Mac apps by seeing, clicking, and typing, and it does so with its own cursor in the background.

The official post says this is aimed at frontend iteration, app testing, and workflows that do not expose an API. Thibault Sottiaux added one useful technical hint: the agent gets more than pure pixels, which would help explain why the demos look unusually fast and precise.

That background behavior is what people latched onto first. embirico called it "deep OS-level wizardry," while nummanali said early testing felt human-speed or faster. In the HN discussion, one commenter explicitly asked which macOS APIs let an app inject input without raising the target window, which is a good sign that engineers saw the implementation detail as the real novelty, not just another computer-use demo.

Browser, PR review, and remote devboxes

OpenAI bundled the GUI layer with a more conventional set of developer workflow upgrades.

The official post groups the new workflow surfaces into a tight list:

- In-app browser with direct page comments for frontend and game iteration

- GitHub review comments shown alongside diffs

- Multiple terminal tabs

- Remote devbox support over SSH, in alpha

- Rich previews for PDFs, spreadsheets, slides, and docs

- A summary pane for plans, sources, and artifacts

OpenAIDevs shows the two most concrete flows. One demo opens a local app in the browser, then comments directly on the rendered page. Another turns GitHub review comments into code changes before commit. The official post frames SSH more conservatively, as alpha remote connection for enterprise environments where files, credentials, and compute stay on the remote box.

Plugins and image generation

The second big expansion is surface area. Codex now reaches far beyond the repo.

The official post says the 90-plus additions mix skills, app integrations, and MCP servers. The named examples are a good map of intent:

- Atlassian Rovo for Jira

- CircleCI for failing jobs and build inspection

- CodeRabbit for code review feedback

- GitLab Issues

- Microsoft Suite

- Neon by Databricks

- Remotion for prompt- or code-driven video work

- Render for deploy, debug, and monitoring

- Superpowers

OpenAIDevs' Remotion thread makes the plugin pitch less abstract by showing Codex reaching into creative tooling, not just CI and issue trackers. The image side is similarly pragmatic. OpenAI's thread says gpt-image-1.5 usage is included with a ChatGPT account inside Codex, and the model docs back the positioning around mockups, assets, and iterative design work in the same session.

Automations and memory

The longer-term bet is not computer control by itself. It is resumed work.

The official post says automations can now reuse existing conversation threads, preserve prior context, schedule future work, and wake automatically to continue tasks across days or weeks. It also introduces a memory preview that stores preferences, corrections, and hard-won context from previous tasks.
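
To make the thread-reuse idea concrete, here is a minimal Python sketch of an automation that wakes on a schedule and appends to the same thread's context rather than starting fresh. Everything in it is an assumption for illustration: the `Automation` class, its fields, the `wake_due` helper, and the hard-coded note are hypothetical and do not reflect Codex's actual schema or scheduler.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Automation:
    """Hypothetical model of a thread-aware automation (not Codex's real schema)."""
    thread_id: str                # the existing conversation thread to resume
    interval: timedelta           # how long to sleep between runs
    next_wake: datetime           # when the automation should run next
    context: list[str] = field(default_factory=list)  # notes carried between runs

    def is_due(self, now: datetime) -> bool:
        return now >= self.next_wake

    def run(self, now: datetime, note: str) -> None:
        # Append to the existing thread's context instead of starting fresh,
        # then schedule the next wake-up.
        self.context.append(f"{now.isoformat()} {note}")
        self.next_wake = now + self.interval


def wake_due(automations: list[Automation], now: datetime) -> list[str]:
    """Run every automation whose wake time has passed; return their thread ids."""
    woken = []
    for automation in automations:
        if automation.is_due(now):
            # Placeholder for real work, e.g. checking on open pull requests.
            automation.run(now, "checked open pull requests")
            woken.append(automation.thread_id)
    return woken


# Example: a daily follow-up that keeps accumulating context in one thread.
start = datetime(2025, 1, 6, 9, 0)  # arbitrary illustrative timestamp
daily = Automation("thread-pr-followup", timedelta(days=1), next_wake=start)
wake_due([daily], start)                       # due: runs and reschedules
wake_due([daily], start + timedelta(hours=2))  # not due yet: nothing happens
wake_due([daily], start + timedelta(days=1))   # due again: same thread, more context
```

The point of the sketch is the design choice the post describes: state lives with the thread, so each wake-up can build on earlier runs instead of rediscovering context.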

OpenAI's own examples are much broader than coding:

- Landing open pull requests

- Following up on tasks

- Tracking fast-moving conversations in Slack, Gmail, and Notion

- Suggesting where to start the work day

- Pulling open comments from Google Docs into a prioritized action list

Aaron Levie pushes the same idea into enterprise content workflows, while the HN thread shows the immediate counterweight: commenters describing cases where Codex passed tests but still chose the wrong architecture. The product story here is persistent agent context, but the community response is already about how much of that output still needs inspection.

CLI spillover, compaction, and platform notes

A few smaller details make this look less like a one-day app demo and more like a broader Codex platform update.

According to embirico's reply, computer use starts in the desktop app for permissions, then becomes available in the CLI too. Thibault Sottiaux also surfaced a new /compact command, and the GitHub releases page shows the adjacent plumbing landing around the same window: codex marketplace add, Ctrl+R reverse search, memory controls, and expanded MCP and plugin support.
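
The source does not describe how /compact works internally. As a generic illustration of what context compaction usually means, the Python sketch below replaces older turns with a single summary line and keeps the most recent turns verbatim. The `compact` function, its parameters, and the truncation-based "summary" are all assumptions for illustration, not Codex behavior.

```python
def compact(turns: list[str], keep_recent: int = 2, max_chars: int = 40) -> list[str]:
    """Collapse older turns into one summary line; keep recent turns verbatim.

    Hypothetical stand-in for context compaction, not Codex's implementation.
    """
    split = max(len(turns) - keep_recent, 0)
    older, recent = turns[:split], turns[split:]
    if not older:
        # Nothing old enough to compact: return a copy unchanged.
        return list(turns)
    # Stand-in summarizer: truncate each older turn and join them.
    # A real system would more likely ask a model to write the summary.
    digest = "; ".join(turn[:max_chars] for turn in older)
    return [f"[summary of {len(older)} earlier turns] {digest}"] + recent


history = [
    "user: set up the project",
    "agent: created the scaffold",
    "user: add a login page",
    "agent: added login page and tests",
]
compacted = compact(history, keep_recent=2)
```

Here `compacted` holds three entries: one summary line covering the first two turns, followed by the last two turns unchanged.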

Two rollout notes also matter. embirico said the updated Codex app now supports Intel Macs. The official post says computer use is macOS-first, and that memory and personalization are still coming soon for EU, UK, Enterprise, and Edu users.